Guide to configuring and integrating OpenIDM into identity management solutions. The OpenIDM project offers flexible, open source services for automating management of the identity life cycle.

Preface

This guide shows you how to integrate OpenIDM as part of a complete identity management solution.

1. Who Should Use this Guide

This guide is written for systems integrators building identity management solutions based on OpenIDM services. This guide describes OpenIDM, and shows you how to set up OpenIDM as part of your identity management solution.

You do not need to be an OpenIDM wizard to learn something from this guide, though a background in identity management and building identity management solutions can help.

2. Formatting Conventions

Most examples in the documentation are created in GNU/Linux or Mac OS X operating environments. If distinctions are necessary between operating environments, examples are labeled with the operating environment name in parentheses. To avoid repetition, file system directory names are often given only in UNIX format as in /path/to/server, even if the text applies to C:\path\to\server as well.

Absolute path names usually begin with the placeholder /path/to/. This path might translate to /opt/, C:\Program Files\, or somewhere else on your system.

Command-line terminal sessions are formatted as follows:

$ echo $JAVA_HOME
/path/to/jdk

Command output is sometimes formatted for narrower, more readable output even though formatting parameters are not shown in the command.

Program listings are formatted as follows:

class Test {
    public static void main(String [] args) {
        System.out.println("This is a program listing.");
    }
}

3. Accessing Documentation Online

ForgeRock publishes comprehensive documentation online:

The ForgeRock Knowledge Base offers a large and increasing number of up-to-date, practical articles that help you deploy and manage ForgeRock software.

While many articles are visible to community members, ForgeRock customers have access to much more, including advanced information for customers using ForgeRock software in a mission-critical capacity.

ForgeRock product documentation, such as this document, aims to be technically accurate and complete with respect to the software documented. It is visible to everyone and covers all product features and examples of how to use them.

4. Using the ForgeRock.org Site

The ForgeRock.org site has links to source code for ForgeRock open source software, as well as links to the ForgeRock forums and technical blogs.

If you are a ForgeRock customer, raise a support ticket instead of using the forums. ForgeRock support professionals will get in touch to help you.

Chapter 1. Architectural Overview

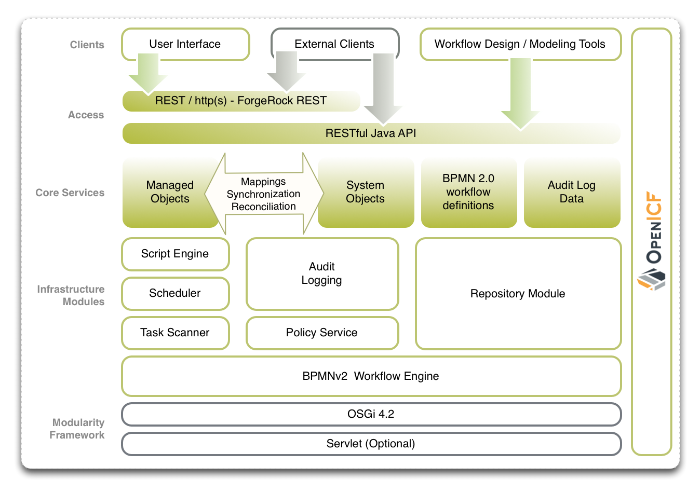

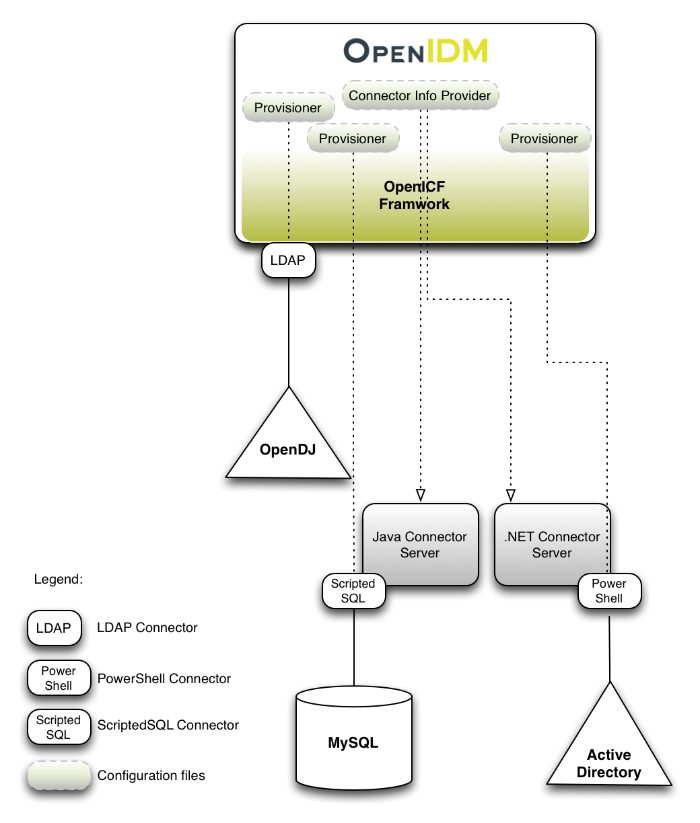

The following figure provides an overview of the OpenIDM architecture, which is covered in more detail in subsequent sections of this chapter.

1.1. OpenIDM Modular Framework

The OpenIDM framework is based on OSGi.

- OSGi

OSGi is a module system and service platform for the Java programming language that implements a complete and dynamic component model. For a good introduction, see the OSGi site. While OpenIDM services are designed to run in any OSGi container, OpenIDM currently runs in Apache Felix.

- Servlet

The optional Servlet layer provides RESTful HTTP access to the managed objects and services. While the Servlet layer can be provided by many different engines, OpenIDM embeds Jetty by default.

1.2. Infrastructure Modules

OpenIDM infrastructure modules provide the underlying features needed for core services.

- BPMN 2.0 Workflow Engine

OpenIDM provides an embedded workflow and business process engine based on Activiti and the Business Process Model and Notation (BPMN) 2.0 standard.

For more information, see Chapter 17, "Integrating Business Processes and Workflows".

- Task Scanner

OpenIDM provides a task scanning mechanism that enables you to perform a batch scan for a specified date in OpenIDM data, on a scheduled interval, and then to execute a task when this date is reached.

For more information, see Section 13.5, "Scanning Data to Trigger Tasks".

- Scheduler

The scheduler provides a cron-like scheduling component implemented using the Quartz library. Use the scheduler, for example, to enable regular synchronizations and reconciliations.

For details, see Chapter 13, "Scheduling Tasks and Events".

- Script Engine

The script engine is a pluggable module that provides the triggers and plugin points for OpenIDM. OpenIDM currently supports JavaScript and Groovy.

- Policy Service

OpenIDM provides an extensible policy service that enables you to apply specific validation requirements to various components and properties.

For more information, see Chapter 9, "Using Policies to Validate Data".

- Audit Logging

Auditing logs all relevant system activity to the configured log stores. This includes the data from reconciliation as a basis for reporting, as well as detailed activity logs to capture operations on the internal (managed) and external (system) objects.

For details, see Chapter 18, "Using Audit Logs".

- Repository

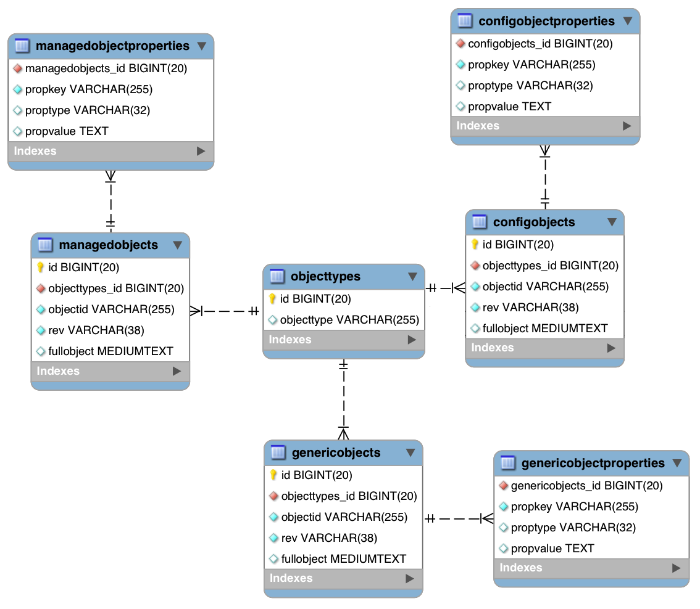

The repository provides a common abstraction for a pluggable persistence layer. OpenIDM 3.1 supports MySQL as the backing store for the repository, although plugin repositories can include NoSQL and relational databases, LDAP, and even flat files. The repository API operates using a JSON-based object model with RESTful principles consistent with the other OpenIDM services. The default, embedded implementation for the repository is the NoSQL database OrientDB, which makes it easy to evaluate OpenIDM out of the box before using MySQL in your production environment.

1.3. Core Services

The core services are the heart of the OpenIDM resource oriented unified object model and architecture.

- Object Model

Artifacts handled by OpenIDM are Java object representations of the JavaScript object model as defined by JSON. The object model supports interoperability and potential integration with many applications, services and programming languages. As OpenIDM is a Java-based product, these representations are instances of classes:

Map, List, String, Number, Boolean, and null.

OpenIDM can serialize and deserialize these structures to and from JSON as required. OpenIDM also exposes a set of triggers and functions that system administrators can define, in either JavaScript or Groovy, which can natively read and modify these JSON-based object model structures. OpenIDM is designed to support other scripting and programming languages.

- Managed Objects

A managed object is an object that represents the identity-related data managed by OpenIDM. Managed objects are configurable, JSON-based data structures that OpenIDM stores in its pluggable repository. The default configuration of a managed object is that of a user, but you can define any kind of managed object, for example, groups or roles.

You can access managed objects over the REST interface with a query similar to the following:

$ curl \
 --cacert self-signed.crt \
 --header "X-OpenIDM-Username: openidm-admin" \
 --header "X-OpenIDM-Password: openidm-admin" \
 --request GET \
 "https://localhost:8443/openidm/managed/..."

- System Objects

System objects are pluggable representations of objects on external systems. For example, a user entry that is stored in an external LDAP directory is represented as a system object in OpenIDM.

System objects follow the same RESTful resource-based design principles as managed objects. They can be accessed over the REST interface with a query similar to the following:

$ curl \
 --cacert self-signed.crt \
 --header "X-OpenIDM-Username: openidm-admin" \
 --header "X-OpenIDM-Password: openidm-admin" \
 --request GET \
 "https://localhost:8443/openidm/system/..."

There is a default implementation for the OpenICF framework that allows any connector object to be represented as a system object.

- Mappings

Mappings define policies between source and target objects and their attributes during synchronization and reconciliation. Mappings can also define triggers for validation, customization, filtering, and transformation of source and target objects.

For details, see Chapter 12, "Configuring Synchronization".

- Synchronization & Reconciliation

Reconciliation enables on-demand and scheduled resource comparisons between the OpenIDM managed object repository and source or target systems. Comparisons can result in different actions, depending on the mappings defined between the systems.

Synchronization enables creating, updating, and deleting resources from a source to a target system, either on demand or according to a schedule.

For details, see Chapter 12, "Configuring Synchronization".

1.4. Secure Commons REST Commands

As noted in Appendix E, "REST API Reference", Representational State Transfer (REST) is a software architecture style for exposing resources, using the technologies and protocols of the World Wide Web.

REST interfaces are commonly tested with a curl command. Many of these commands are used in this document. They work with the standard ports associated with Java EE communications, 8080 and 8443.

To run curl over the secure port, 8443, you must either include the --insecure option, or follow the instructions shown in Section 16.2.2, "Restrict REST Access to the HTTPS Port". You can use those instructions with the self-signed certificate generated when OpenIDM starts, or with a *.crt file provided by a certificate authority.

In many cases in this guide, curl commands to the secure port are depicted with a --cacert self-signed.crt option. Instructions for creating that self-signed.crt file are shown in Section 16.2.2, "Restrict REST Access to the HTTPS Port".
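For readers who script REST calls rather than typing curl commands, the following is a minimal Python sketch of the same pattern, not part of OpenIDM itself. It assumes the default openidm-admin credentials and the self-signed.crt file name used in this guide; the function names build_request and fetch are hypothetical. Only the standard library is used.

```python
import ssl
import urllib.request

BASE_URL = "https://localhost:8443/openidm"  # secure port used in this guide


def build_request(resource_path, username="openidm-admin", password="openidm-admin"):
    """Build a GET request carrying the OpenIDM authentication headers."""
    url = BASE_URL + "/" + resource_path.lstrip("/")
    request = urllib.request.Request(url, method="GET")
    request.add_header("X-OpenIDM-Username", username)
    request.add_header("X-OpenIDM-Password", password)
    return request


def fetch(resource_path, cafile="self-signed.crt"):
    """Send the request, trusting the certificate in cafile (like curl --cacert)."""
    context = ssl.create_default_context(cafile=cafile)
    request = build_request(resource_path)
    with urllib.request.urlopen(request, context=context) as response:
        return response.read().decode("utf-8")
```

Calling fetch("info/ping") against a running instance would return the response body as text. Building the request separately from sending it makes the headers easy to inspect without a server.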

1.5. Access Layer

The access layer provides the user interfaces and public APIs for accessing and managing the OpenIDM repository and its functions.

- RESTful Interfaces

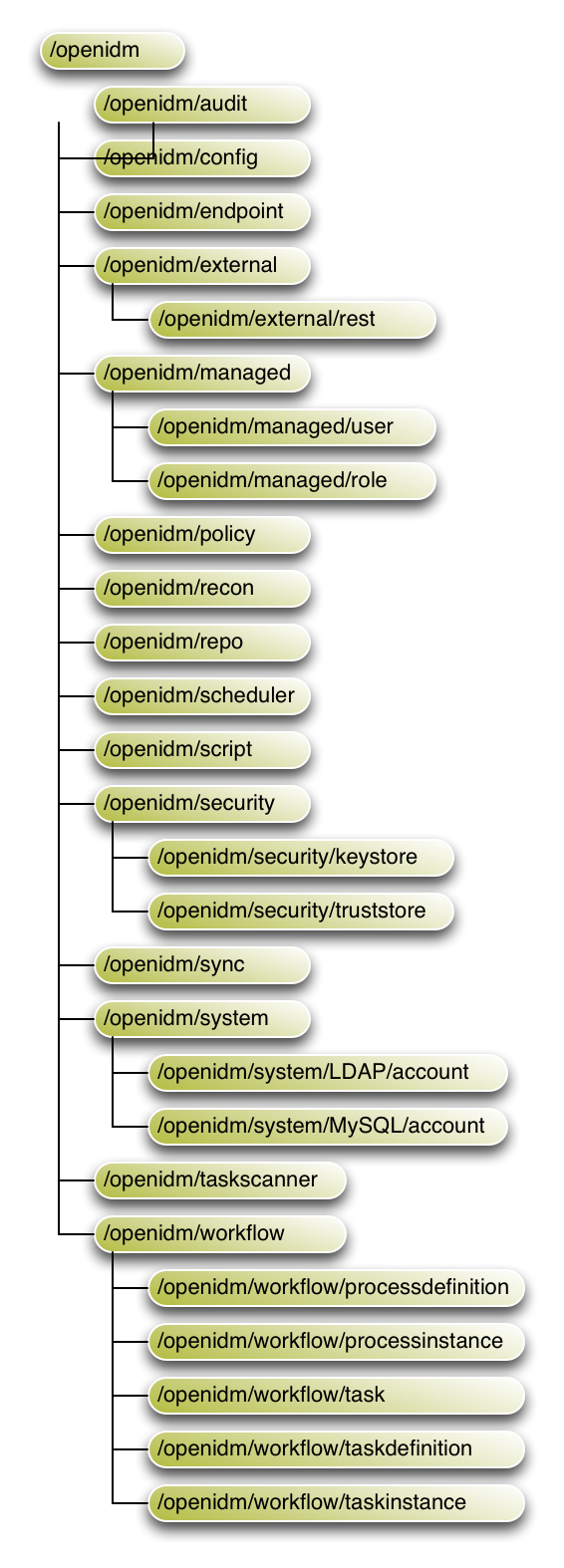

OpenIDM provides REST APIs for CRUD operations, and for invoking synchronization and reconciliation, for both HTTP and Java.

For details, see Appendix E, "REST API Reference".

- User Interfaces

User interfaces provide password management, registration, self-service, and workflow services.

Chapter 2. Starting and Stopping OpenIDM

This chapter covers the scripts provided for starting and stopping OpenIDM, and describes how to verify the health of a system, that is, that all requirements are met for a successful system startup.

2.1. To Start and Stop OpenIDM

By default you start and stop OpenIDM in interactive mode.

To start OpenIDM interactively, open a terminal or command window, change to the openidm directory, and run the startup script:

startup.sh (UNIX)

startup.bat (Windows)

The startup script starts OpenIDM, and opens an OSGi console with a -> prompt where you can issue console commands.

To stop OpenIDM interactively in the OSGi console, enter the shutdown command.

-> shutdown

You can also start OpenIDM as a background process on UNIX, Linux, and Mac OS X. Follow these steps before starting OpenIDM for the first time.

If you have already started OpenIDM, then shut down OpenIDM and remove the Felix cache files under openidm/felix-cache/.

-> shutdown
...
$ rm -rf felix-cache/*

Start OpenIDM in the background.

$ ./startup.sh &

Alternatively, use the nohup command to keep OpenIDM running after you log out.

$ nohup ./startup.sh &
[2] 394
$ appending output to nohup.out
$

To stop OpenIDM running as a background process, use the shutdown.sh script.

$ ./shutdown.sh
./shutdown.sh
Stopping OpenIDM (454)

If you start OpenIDM in the background, and the job stops immediately after startup, see Section 23.1, "OpenIDM Stopped in Background".

To disable ConsoleHandler logging, see Chapter 10, "Configuring Server Logs".

2.2. Specifying the OpenIDM Startup Configuration

By default, OpenIDM starts up with the configuration and script files that are located in the openidm/conf and openidm/script directories, and with the binaries that are in the default install location. You can launch OpenIDM with a different configuration and set of script files, and even with a different set of binaries, in order to test a new configuration, manage multiple different OpenIDM projects, or to run one of the included samples.

The startup.sh script enables you to specify the following elements of a running OpenIDM instance.

- project location (-p)

The project location specifies the configuration and default scripts with which OpenIDM will run.

If you specify the project location, OpenIDM does not try to locate configuration objects in the default location. All configuration objects and any artifacts that are not in the bundled defaults (such as custom scripts) must be provided in the project location. This includes everything that is in the default openidm/conf and openidm/script directories.

The following command starts OpenIDM with the configuration of sample 1:

$ ./startup.sh -p /path/to/openidm/samples/sample1

If an absolute path is not provided, the path is relative to the system property, user.dir. If no project location is specified, OpenIDM is launched with the default configuration in /path/to/openidm/conf.

- working location (-w)

The working location specifies the directory to which OpenIDM writes its database cache and audit logs. The working location includes everything that is in the default db and audit directories.

The following command specifies that OpenIDM writes its database cache and audit data to /Users/admin/openidm/storage:

$ ./startup.sh -w /Users/admin/openidm/storage

If an absolute path is not provided, the path is relative to the system property, user.dir. If no working location is specified, OpenIDM writes this data to the openidm/db and openidm/audit directories.

Note that this property does not affect the location of the OpenIDM system logs, or the Felix cache. To change the location of the OpenIDM logs, edit the conf/logging.properties file. To change the location of the Felix cache, edit the conf/config.properties file. Instructions are available in those respective files.

- startup configuration file (-c)

A customizable startup configuration file (named launcher.json) enables you to specify how the OSGi Framework is started.

Unless you are working with a highly customized deployment, you should not modify the default framework configuration. This option is therefore described in more detail in Chapter 24, "Advanced Configuration".

By default, properties files are loaded in the following order, and property values are resolved in the reverse order:

system.properties

config.properties

boot.properties

If both system and boot properties define the same attribute, the property substitution process locates the attribute in boot.properties and does not attempt to locate the property in system.properties.
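The resolution order described above can be illustrated with a short Python sketch. This is only a model of the lookup rule as stated in this section, not OpenIDM's actual implementation; the resolve function and the property names and values are hypothetical.

```python
# Files are loaded in this order; values are resolved in the reverse order.
LOAD_ORDER = ["system.properties", "config.properties", "boot.properties"]


def resolve(name, loaded):
    """Return (value, source_file) from the first file, in reverse load order, that defines name."""
    for filename in reversed(LOAD_ORDER):
        properties = loaded.get(filename, {})
        if name in properties:
            return properties[name], filename
    return None, None


# Hypothetical property values, for illustration only.
loaded = {
    "system.properties": {"example.port": "8080", "example.only.system": "yes"},
    "boot.properties": {"example.port": "9090"},
}
print(resolve("example.port", loaded))         # ('9090', 'boot.properties')
print(resolve("example.only.system", loaded))  # ('yes', 'system.properties')
```

Because boot.properties is consulted first, its value wins whenever the same attribute is also defined in system.properties.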

You can use variable substitution in any .json configuration file with the install, working and project locations described previously. The following properties can be substituted:

install.location

install.url

working.location

working.url

project.location

project.url

Property substitution takes the following syntax:

&{launcher.property}

For example, to specify the location of the OrientDB database, you can set the dbUrl property in repo.orientdb.json as follows:

"dbUrl" : "local:&{launcher.working.location}/db/openidm",

The database location is then relative to a working location defined in the startup configuration.
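To make the effect of the substitution concrete, here is a minimal Python sketch that expands &{...} tokens in a string. It is an illustration only: the substitute function is hypothetical, the working location value is an example, and leaving unknown tokens untouched is an assumption rather than documented OpenIDM behavior.

```python
import re

# Matches tokens of the form &{name}
TOKEN = re.compile(r"&\{([^}]+)\}")


def substitute(text, properties):
    """Replace each &{name} token with its value; unknown tokens are left as-is (assumption)."""
    def replace(match):
        return properties.get(match.group(1), match.group(0))
    return TOKEN.sub(replace, text)


# Hypothetical working location, for illustration only.
properties = {"launcher.working.location": "/Users/admin/openidm/storage"}
db_url = substitute("local:&{launcher.working.location}/db/openidm", properties)
print(db_url)  # local:/Users/admin/openidm/storage/db/openidm
```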

Note that property substitution does not work for connector reference properties. So, for example, the following configuration would not be valid:

"connectorRef" : {
    "connectorName" : "&{connectorName}",
    "bundleName" : "org.forgerock.openicf.connectors.ldap-connector",
    "bundleVersion" : "&{LDAP.BundleVersion}"
    ...

The "connectorName" must be the precise string from the connector configuration. If you need to specify multiple connector version numbers, use a range of versions, for example:

"connectorRef" : {
    "connectorName" : "org.identityconnectors.ldap.LdapConnector",
    "bundleName" : "org.forgerock.openicf.connectors.ldap-connector",
    "bundleVersion" : "[1.4.0.0,2.0.0.0)",
    ...
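The range notation in "bundleVersion" uses interval brackets: a square bracket includes the bound and a parenthesis excludes it. The following Python sketch shows how such a range can be read. It is not how OpenIDM or OSGi evaluates versions internally; the function names are hypothetical, and the sketch assumes that every version segment is numeric and that both versions have the same number of segments.

```python
def parse_version(text):
    """Turn '1.4.0.0' into a tuple of integers that compares numerically."""
    return tuple(int(part) for part in text.split("."))


def in_range(version, version_range):
    """Check a version against a range such as '[1.4.0.0,2.0.0.0)'.

    '[' and ']' include the bound; '(' and ')' exclude it.
    """
    lower_inclusive = version_range[0] == "["
    upper_inclusive = version_range[-1] == "]"
    lower_text, upper_text = version_range[1:-1].split(",")
    lower = parse_version(lower_text.strip())
    upper = parse_version(upper_text.strip())
    candidate = parse_version(version)
    above = candidate >= lower if lower_inclusive else candidate > lower
    below = candidate <= upper if upper_inclusive else candidate < upper
    return above and below


print(in_range("1.4.0.0", "[1.4.0.0,2.0.0.0)"))  # True: lower bound is included
print(in_range("2.0.0.0", "[1.4.0.0,2.0.0.0)"))  # False: upper bound is excluded
```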

2.3. Obtaining Information About an OpenIDM Instance

OpenIDM includes a customizable information service that provides detailed information about a running OpenIDM instance. The information can be accessed over the REST interface, under the context https://localhost:8443/openidm/info.

By default, OpenIDM provides the following information:

- Basic information about the health of the system.

This information can be accessed over REST at https://localhost:8443/openidm/info/ping. For example:

$ curl \
 --cacert self-signed.crt \
 --header "X-OpenIDM-Username: openidm-admin" \
 --header "X-OpenIDM-Password: openidm-admin" \
 --request GET \
 "https://localhost:8443/openidm/info/ping"
{"state":"ACTIVE_READY","shortDesc":"OpenIDM ready"}

The information is provided by the script openidm/bin/defaults/script/info/ping.js.

- Information about the current OpenIDM session.

This information can be accessed over REST at https://localhost:8443/openidm/info/login. For example:

$ curl \
 --cacert self-signed.crt \
 --header "X-OpenIDM-Username: openidm-admin" \
 --header "X-OpenIDM-Password: openidm-admin" \
 --request GET \
 "https://localhost:8443/openidm/info/login"
{
  "authenticationId": "openidm-admin",
  "class": "org.forgerock.json.resource.SecurityContext",
  "parent": {
    "class": "org.forgerock.json.resource.RootContext",
    "parent": null,
    "id": "6f1709ce-75bd-4f9b-b1ad-d4592be37361"
  },
  "authorizationId": {
    "roles": [
      "openidm-admin",
      "openidm-authorized"
    ],
    "component": "repo/internal/user",
    "id": "openidm-admin"
  }
}

The information is provided by the script openidm/bin/defaults/script/info/login.js.
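A monitoring script might consume the ping response shown above. The following Python sketch parses the response body and checks for the ACTIVE_READY state; the is_ready function is a hypothetical helper, and the sample body is the one from the ping example in this section.

```python
import json

READY_STATE = "ACTIVE_READY"


def is_ready(ping_body):
    """Return True when the info/ping response body reports the ACTIVE_READY state."""
    return json.loads(ping_body).get("state") == READY_STATE


sample = '{"state":"ACTIVE_READY","shortDesc":"OpenIDM ready"}'
print(is_ready(sample))  # True
```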

You can extend or override the default information that is provided by creating your own script file and its corresponding configuration file in openidm/conf/info-name.json. Custom script files can be located anywhere, although a best practice is to place them in openidm/script/info. A sample customized script file for extending the default ping service is provided in openidm/samples/infoservice/script/info/customping.js. The corresponding configuration file is provided in openidm/samples/infoservice/conf/info-customping.json.

The configuration file has the following syntax:

{
    "infocontext" : "ping",
    "type" : "text/javascript",
    "file" : "script/info/customping.js"
}

The parameters in the configuration file are as follows:

- "infocontext" specifies the relative name of the info endpoint under the info context. The information can be accessed over REST at this endpoint. For example, setting "infocontext" to "mycontext/myendpoint" would make the information accessible over REST at https://localhost:8443/openidm/info/mycontext/myendpoint.

- "type" specifies the type of the information source. JavaScript ("type" : "text/javascript") and Groovy ("type" : "groovy") are supported.

- "file" specifies the path to the JavaScript or Groovy file, if you do not provide a "source" parameter.

- "source" specifies the actual JavaScript or Groovy script, if you have not provided a "file" parameter.

Additional properties can be passed to the script as depicted in this configuration file (openidm/samples/infoservice/conf/info-name.json).
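Before deploying a custom info configuration, it can help to sanity-check it against the parameter descriptions above. The following Python sketch does so. It is a hypothetical helper, not an OpenIDM tool, and it assumes that "file" and "source" are mutually exclusive alternatives, which is an interpretation of the descriptions rather than a documented rule.

```python
import json

SUPPORTED_TYPES = {"text/javascript", "groovy"}
INFO_BASE = "https://localhost:8443/openidm/info/"


def check_info_config(text):
    """Return a list of problems found in an info service configuration."""
    config = json.loads(text)
    problems = []
    if not config.get("infocontext"):
        problems.append("infocontext is missing")
    if config.get("type") not in SUPPORTED_TYPES:
        problems.append("type must be text/javascript or groovy")
    # Assumption: exactly one of "file" and "source" should be present.
    if ("file" in config) == ("source" in config):
        problems.append("provide either file or source, not both or neither")
    return problems


def endpoint_url(text):
    """Return the REST URL at which the configured info endpoint is exposed."""
    return INFO_BASE + json.loads(text)["infocontext"]


sample = '{"infocontext": "ping", "type": "text/javascript", "file": "script/info/customping.js"}'
print(check_info_config(sample))  # []
print(endpoint_url(sample))       # https://localhost:8443/openidm/info/ping
```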

Script files in openidm/samples/infoservice/script/info/ have access to the following objects:

- request - the request details, including the method called and any parameters passed.

- healthinfo - the current health status of the system.

- openidm - access to the JSON resource API.

- Any additional properties that are depicted in the configuration file (openidm/samples/infoservice/conf/info-name.json).

2.4. Verifying the Health of an OpenIDM System

Due to the highly modular, configurable nature of OpenIDM, it is often difficult to assess whether a system has started up successfully, or whether the system is ready and stable after dynamic configuration changes have been made.

OpenIDM provides a configurable health check service that verifies that the required modules and services for an operational system are up and running. During system startup, OpenIDM checks that these modules and services are available and reports on whether any requirements for an operational system have not been met. If dynamic configuration changes are made, OpenIDM rechecks that the required modules and services are functioning so that system operation is monitored on an ongoing basis.

The health check service reports on the state of the OpenIDM system and outputs this state to the console and to the log files. The system can be in one of the following states:

STARTING - OpenIDM is starting up

ACTIVE_READY - all of the specified requirements have been met to consider the OpenIDM system ready

ACTIVE_NOT_READY - one or more of the specified requirements have not been met and the OpenIDM system is not considered ready

STOPPING - OpenIDM is shutting down

OpenIDM checks all required modules and services. Examples of those services are shown here.

Required Modules (examples)

"org.forgerock.openicf.framework.connector-framework"
"org.forgerock.openicf.framework.connector-framework-internal"
"org.forgerock.openicf.framework.connector-framework-osgi"
"org.forgerock.openidm.audit"
"org.forgerock.openidm.core"
"org.forgerock.openidm.enhanced-config"
"org.forgerock.openidm.external-email"
...
"org.forgerock.openidm.system"
"org.forgerock.openidm.ui"
"org.forgerock.openidm.util"
"org.forgerock.commons.org.forgerock.json.resource"
"org.forgerock.commons.org.forgerock.json.resource.restlet"
"org.forgerock.commons.org.forgerock.restlet"
"org.forgerock.commons.org.forgerock.util"
"org.forgerock.openidm.security-jetty"
"org.forgerock.openidm.jetty-fragment"
"org.forgerock.openidm.quartz-fragment"
"org.ops4j.pax.web.pax-web-extender-whiteboard"
"org.forgerock.openidm.scheduler"
"org.ops4j.pax.web.pax-web-jetty-bundle"
"org.forgerock.openidm.repo-jdbc"
"org.forgerock.openidm.repo-orientdb"
"org.forgerock.openidm.config"
"org.forgerock.openidm.crypto"

Required Services (examples)

"org.forgerock.openidm.config"

"org.forgerock.openidm.provisioner"

"org.forgerock.openidm.provisioner.openicf.connectorinfoprovider"

"org.forgerock.openidm.external.rest"

"org.forgerock.openidm.audit"

"org.forgerock.openidm.policy"

"org.forgerock.openidm.managed"

"org.forgerock.openidm.script"

"org.forgerock.openidm.crypto"

"org.forgerock.openidm.recon"

"org.forgerock.openidm.info"

"org.forgerock.openidm.router"

"org.forgerock.openidm.scheduler"

"org.forgerock.openidm.scope"

"org.forgerock.openidm.taskscanner"

You can replace this list, or add to it, by adding the following lines to the openidm/conf/boot/boot.properties file:

"openidm.healthservice.reqbundles" - overrides the default required bundles. Bundles are specified as a list of symbolic names, separated by commas.

"openidm.healthservice.reqservices" - overrides the default required services. Services are specified as a list of symbolic names, separated by commas.

"openidm.healthservice.additionalreqbundles" - specifies required bundles (in addition to the default list). Bundles are specified as a list of symbolic names, separated by commas.

"openidm.healthservice.additionalreqservices" - specifies required services (in addition to the default list). Services are specified as a list of symbolic names, separated by commas.
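The difference between the override properties and the additional properties can be modeled in a few lines of Python. This sketch is illustrative only and does not reflect the health check service's internal code. The function names are hypothetical, and the behavior when both an override and an additional list are set (the additional names are appended to the override) is an assumption.

```python
def parse_list(value):
    """Split a comma-separated property value into trimmed symbolic names."""
    return [name.strip() for name in value.split(",") if name.strip()]


def effective_required(defaults, properties, kind):
    """Combine the default names with the healthservice properties for kind ('bundles' or 'services')."""
    override = properties.get("openidm.healthservice.req" + kind)
    additional = properties.get("openidm.healthservice.additionalreq" + kind, "")
    # An override replaces the default list; otherwise the defaults are kept.
    base = parse_list(override) if override is not None else list(defaults)
    # Additional names are appended, skipping any that are already required.
    return base + [name for name in parse_list(additional) if name not in base]


defaults = ["org.forgerock.openidm.config", "org.forgerock.openidm.audit"]
properties = {
    "openidm.healthservice.additionalreqservices":
        "org.forgerock.openidm.info, org.forgerock.openidm.audit",
}
print(effective_required(defaults, properties, "services"))
```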

By default, OpenIDM gives the system ten seconds to start up all the required bundles and services, before the system readiness is assessed. Note that this is not the total start time, but the time required to complete the service startup after the framework has started. You can change this default by setting the value of the servicestartmax property (in milliseconds) in the openidm/conf/boot/boot.properties file. This example sets the startup time to five seconds.

openidm.healthservice.servicestartmax=5000

The health check service works in tandem with the scriptable information service. For more information see Section 2.3, "Obtaining Information About an OpenIDM Instance".

Do not use the health check service to monitor the status of external resources, such as LDAP servers, or external databases. Rather, monitor these resources over the REST interface, as described in Section 11.7, "Checking the Status of External Systems Over REST".

2.5. Displaying Information About Installed Modules

On a running OpenIDM instance, you can list the installed modules and their states by typing the following command in the Felix administration console. (The output will vary by configuration.)

-> scr list

Id State Name

[ 12] [active ] org.forgerock.openidm.endpoint

[ 13] [active ] org.forgerock.openidm.endpoint

[ 14] [active ] org.forgerock.openidm.endpoint

[ 15] [active ] org.forgerock.openidm.endpoint

[ 16] [active ] org.forgerock.openidm.endpoint

...

[ 34] [active ] org.forgerock.openidm.taskscanner

[ 20] [active ] org.forgerock.openidm.external.rest

[ 6] [active ] org.forgerock.openidm.router

[ 33] [active ] org.forgerock.openidm.scheduler

[ 19] [unsatisfied ] org.forgerock.openidm.external.email

[ 11] [active ] org.forgerock.openidm.sync

[ 25] [active ] org.forgerock.openidm.policy

[ 8] [active ] org.forgerock.openidm.script

[ 10] [active ] org.forgerock.openidm.recon

[ 4] [active ] org.forgerock.openidm.http.contextregistrator

[ 1] [active ] org.forgerock.openidm.config

[ 18] [active ] org.forgerock.openidm.endpointservice

[ 30] [unsatisfied ] org.forgerock.openidm.servletfilter

[ 24] [active ] org.forgerock.openidm.infoservice

[ 21] [active ] org.forgerock.openidm.authentication

->

To display additional information about a particular module or service, run the following command, substituting the Id of that module from the preceding list.

-> scr info Id
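When the component listing is long, it can be convenient to filter it for components that are not active and then pass their Ids to scr info. The following Python sketch parses rows in the format of the scr list output shown earlier. The components_in_state function is a hypothetical helper, and the sample rows are copied from that output.

```python
import re

# One row of "scr list" output: [ id] [state ] component.name
ROW = re.compile(r"\[\s*(\d+)\]\s*\[(\w+)\s*\]\s*(\S+)")


def components_in_state(listing, state):
    """Return (id, name) pairs for every component reported in the given state."""
    found = []
    for line in listing.splitlines():
        match = ROW.search(line)
        if match and match.group(2) == state:
            found.append((int(match.group(1)), match.group(3)))
    return found


listing = """
Id State Name
[ 6] [active ] org.forgerock.openidm.router
[ 19] [unsatisfied ] org.forgerock.openidm.external.email
[ 30] [unsatisfied ] org.forgerock.openidm.servletfilter
"""
print(components_in_state(listing, "unsatisfied"))
```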

The following example displays additional information about the router service:

-> scr info 6

ID: 6

Name: org.forgerock.openidm.router

Bundle: org.forgerock.openidm.core (41)

State: active

Default State: enabled

Activation: immediate

Configuration Policy: optional

Activate Method: activate (declared in the descriptor)

Deactivate Method: deactivate (declared in the descriptor)

Modified Method: modified

Services: org.forgerock.json.resource.JsonResource

Service Type: service

Reference: ref_JsonResourceRouterService_ScopeFactory

Satisfied: satisfied

Service Name: org.forgerock.openidm.scope.ScopeFactory

Multiple: single

Optional: mandatory

Policy: dynamic

Properties:

component.id = 6

component.name = org.forgerock.openidm.router

felix.fileinstall.filename = file:/openidm/samples/sample1/conf/router.json

jsonconfig = {

"filters" : [

{

"onRequest" : {

"type" : "text/javascript",

"file" : "bin/defaults/script/router-authz.js"

}

},

{

"onRequest" : {

"type" : "text/javascript",

"file" : "bin/defaults/script/policyFilter.js"

},

"methods" : [

"create",

"update"

]

}

]

}

openidm.restlet.path = /

service.description = OpenIDM internal JSON resource router

service.pid = org.forgerock.openidm.router

service.vendor = ForgeRock AS

->

2.6. Starting OpenIDM in Debug Mode

To debug custom libraries, you can start OpenIDM with the option to use the Java Platform Debugger Architecture (JPDA).

Start OpenIDM with the jpda option:

$ cd /path/to/openidm
$ ./startup.sh jpda
Executing ./startup.sh...
Using OPENIDM_HOME: /path/to/openidm
Using OPENIDM_OPTS: -Xmx1024m -Xms1024m -Denvironment=PROD -Djava.compiler=NONE -Xnoagent -Xdebug -Xrunjdwp:transport=dt_socket,address=5005,server=y,suspend=n
Using LOGGING_CONFIG: -Djava.util.logging.config.file=/path/to/openidm/conf/logging.properties
Listening for transport dt_socket at address: 5005
Using boot properties at /path/to/openidm/conf/boot/boot.properties
-> OpenIDM version "3.1.0-6" (revision: xxxx)
OpenIDM ready

The relevant JPDA options are outlined in the startup script (startup.sh).

In your IDE, attach a Java debugger to the JVM via socket, on port 5005.

Caution

This interface is internal and subject to change. If you depend on this interface, contact ForgeRock support.

2.7. Running OpenIDM as a Service on Linux Systems

OpenIDM provides a script that generates an initialization script to run OpenIDM as a service on Linux systems. You can start the script as the root user, or configure it to start during the boot process.

When OpenIDM runs as a service, logs are written to the directory in which OpenIDM was installed.

To run OpenIDM as a service, take the following steps:

If you have not already done so, install and set up OpenIDM, as described in Chapter 1, "Installing OpenIDM Services" in the Installation Guide.

Run the RC script.

$ cd /path/to/openidm/bin
$ ./create-openidm-rc.sh

As a user with administrative privileges, copy the openidm script to the /etc/init.d directory.

$ sudo cp openidm /etc/init.d/

If you run Linux with SELinux enabled, change the file context of the newly copied script with the following command:

$ sudo restorecon /etc/init.d/openidm

You can verify the change to SELinux contexts with the ls -Z /etc/init.d command. For consistency, change the user context to match other scripts in the same directory with the sudo chcon -u system_u /etc/init.d/openidm command.

On Red Hat-based systems, run the following commands to add OpenIDM to the list of RC services, in appropriate runlevels:

$ sudo chkconfig --add openidm

$ sudo chkconfig openidm on

On Debian/Ubuntu systems, run the following command. Note the output, as Debian/Ubuntu adds start and kill scripts to appropriate runlevels.

$ sudo update-rc.d openidm defaults
Adding system startup for /etc/init.d/openidm ...
/etc/rc0.d/K20openidm -> ../init.d/openidm
/etc/rc1.d/K20openidm -> ../init.d/openidm
/etc/rc6.d/K20openidm -> ../init.d/openidm
/etc/rc2.d/S20openidm -> ../init.d/openidm
/etc/rc3.d/S20openidm -> ../init.d/openidm
/etc/rc4.d/S20openidm -> ../init.d/openidm
/etc/rc5.d/S20openidm -> ../init.d/openidm

When you run the command, you may get the following warning message:

update-rc.d: warning: /etc/init.d/openidm missing LSB information

You can safely ignore that message.

As an administrative user, start the OpenIDM service.

$ sudo /etc/init.d/openidm start

Alternatively, reboot the system to start the OpenIDM service automatically.

(Optional) The following commands stop and restart the service:

$ sudo /etc/init.d/openidm stop

$ sudo /etc/init.d/openidm restart

Chapter 3. OpenIDM Command-Line Interface

OpenIDM includes a basic command-line interface that provides a number of utilities for managing the OpenIDM instance.

All of the utilities are subcommands of the cli.sh (UNIX) or cli.bat (Windows) scripts. To use the utilities, you can either run them as subcommands, or launch the cli script first, and then run the utility. For example, to run the encrypt utility on a UNIX system:

$ cd /path/to/openidm
$ ./cli.sh
Using boot properties at /path/to/openidm/conf/boot/boot.properties
openidm# encrypt ....

or

$ cd /path/to/openidm
$ ./cli.sh encrypt ...

By default, the command-line utilities run with the properties defined in /path/to/openidm/conf/boot/boot.properties.

If you run the cli.sh command by itself, it opens an OpenIDM-specific shell prompt:

openidm#

The startup and shutdown scripts are not discussed in this chapter. For information about these scripts, see Chapter 2, "Starting and Stopping OpenIDM".

The following sections describe the subcommands and their use. Examples assume that you are running the commands on a UNIX system. For Windows systems, use cli.bat instead of cli.sh.

For a list of subcommands available from the openidm# prompt, run the cli.sh help command. The help and exit options shown below are self-explanatory. The other subcommands are explained in the subsections that follow.

local:keytool Export or import a SecretKeyEntry.

The Java Keytool does not allow for exporting or importing SecretKeyEntries.

local:encrypt Encrypt the input string.

local:validate Validates all json configuration files in the configuration

(default: /conf) folder.

basic:help Displays available commands.

basic:exit Exit from the console.

remote:configureconnector Generate connector configuration.

remote:configexport Exports all configurations.

remote:configimport Imports the configuration set from local file/directory.

The configexport, configimport, and configureconnector subcommands support up to four options:

- -u or --user USER[:PASSWORD]

Allows you to specify the server user and password. Specifying a username is mandatory. If you do not specify a username, the following error is output to the console:

Remote operation failed: Unauthorized.

If you do not specify a password, you are prompted for one. This option is used by all three subcommands.

- --url URL

The URL of the OpenIDM REST service. The default URL is http://localhost:8080/openidm/. This can be used to import configuration files from a remote running instance of OpenIDM. This option is used by all three subcommands.

- -P or --port PORT

The port number associated with the OpenIDM REST service. If specified, this option overrides any port number specified with the --url option. The default port is 8080. This option is used by all three subcommands.

- -r or --replaceall or --replaceAll

Replaces the entire list of configuration files with the files in the specified backup directory. This option is used with only the configimport command.
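
When you script these subcommands, the options above can be assembled programmatically. The following Python sketch builds an argument list for one invocation. The function name build_cli_args and its parameters are hypothetical; only the option names and the rules (a username is mandatory, --replaceAll applies only to configimport) come from the list above. The sketch builds the list and does not run cli.sh.

```python
# Hypothetical helper: assembles arguments for the cli.sh remote subcommands.
# Only the option names (--user, --url, --port, --replaceAll) come from this guide.
REMOTE_SUBCOMMANDS = {"configexport", "configimport", "configureconnector"}


def build_cli_args(subcommand, user, target, password=None, url=None,
                   port=None, replace_all=False):
    """Return the argument list for one remote subcommand invocation."""
    if subcommand not in REMOTE_SUBCOMMANDS:
        raise ValueError("not a remote subcommand: " + subcommand)
    if not user:
        # The guide states that a username is mandatory.
        raise ValueError("a username is mandatory")
    if replace_all and subcommand != "configimport":
        # The guide states that --replaceAll is used only with configimport.
        raise ValueError("--replaceAll is only valid with configimport")

    args = ["./cli.sh", subcommand]
    # Omitting the password leaves the CLI to prompt for it.
    args += ["--user", user if password is None else user + ":" + password]
    if url is not None:
        args += ["--url", url]
    if port is not None:
        args += ["--port", str(port)]
    if replace_all:
        args.append("--replaceAll")
    args.append(target)
    return args
```

A list such as this could be passed to subprocess.run from the OpenIDM installation directory. Passing the password on the command line exposes it to other local users, so leaving it out and answering the prompt may be preferable.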

3.1. configexport

The configexport subcommand exports all configuration objects to a specified location, enabling you to reuse a system configuration in another environment. For example, you can test a configuration in a development environment, then export it and import it into a production environment. This subcommand also enables you to inspect the active configuration of an OpenIDM instance.

OpenIDM must be running when you execute this command.

Usage is as follows:

$ ./cli.sh configexport --user username:password export-location

For example:

$ ./cli.sh configexport --user openidm-admin:openidm-admin /tmp/conf

On Windows systems, the export-location must be provided in quotation marks, for example:

C:\openidm\cli.bat configexport --user openidm-admin:openidm-admin "C:\temp\openidm"

Configuration objects are exported, as .json

files, to the specified directory. The command creates the directory if

needed. Configuration files that are present in this directory are renamed as

backup files, with a timestamp, for example,

audit.json.2014-02-19T12-00-28.bkp, and are not

overwritten. The following configuration objects are exported:

- The internal repository configuration (repo.orientdb.json or repo.jdbc.json)
- Default and custom configuration directories (script.json)
- The log configuration (audit.json)
- The authentication configuration (authentication.json)
- The cluster configuration (cluster.json)
- The configuration of a connected SMTP email server (external.email.json)
- Custom configuration information (info-name.json)
- The managed object configuration (managed.json)
- The connector configuration (provisioner.openicf-*.json)
- The router service configuration (router.json)
- The scheduler service configuration (scheduler.json)
- Any configured schedules (schedule-*.json)
- The synchronization mapping configuration (sync.json)
- If workflows are defined, the configuration of the workflow engine (workflow.json) and the workflow access configuration (process-access.json)
- Any configuration files related to the user interface (ui-*.json)
- The configuration of any custom endpoints (endpoint-*.json)
- The configuration of servlet filters (servletfilter-*.json)
- The policy configuration (policy.json)
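
The backup naming described before this list can be illustrated with a short Python sketch. The timestamp pattern is inferred from the single example name audit.json.2014-02-19T12-00-28.bkp, and the helper name backup_name is hypothetical. This is not OpenIDM's own code.

```python
from datetime import datetime


def backup_name(filename, when):
    """Build a backup file name in the style of the example in this guide."""
    # Pattern inferred from audit.json.2014-02-19T12-00-28.bkp (an assumption).
    return "{}.{}.bkp".format(filename, when.strftime("%Y-%m-%dT%H-%M-%S"))


print(backup_name("audit.json", datetime(2014, 2, 19, 12, 0, 28)))
```

A helper like this can be useful when a cleanup script needs to recognize backup files left in an export directory.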

3.2. configimport

The configimport subcommand imports configuration objects from the specified directory, enabling you to reuse a system configuration from another environment. For example, you can test a configuration in a development environment, then export it and import it into a production environment.

The command updates the existing configuration from the import-location over the OpenIDM REST interface. By default, if configuration objects are present in the import-location and not in the existing configuration, these objects are added. If configuration objects are present in the existing location but not in the import-location, these objects are left untouched in the existing configuration.

If you include the --replaceAll parameter, the

command wipes out the existing configuration and replaces it with the

configuration in the import-location. Objects in

the existing configuration that are not present in the

import-location are deleted.

Usage is as follows:

$ ./cli.sh configimport --user username:password [--replaceAll] import-location

For example:

$ ./cli.sh configimport --user openidm-admin:openidm-admin --replaceAll /tmp/conf

On Windows systems, the import-location must be provided in quotation marks, for example:

C:\openidm\cli.bat configimport --user openidm-admin:openidm-admin --replaceAll "C:\temp\openidm"

Configuration objects are imported, as .json files,

from the specified directory to the conf directory. The

configuration objects that are imported are outlined in the corresponding

export command, described in the previous section.
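
The difference between the default import behavior and --replaceAll can be modeled with plain dictionaries. The following Python sketch is a conceptual illustration only: the function import_config is hypothetical and does not call OpenIDM. It assumes that each configuration object is identified by a name.

```python
def import_config(existing, imported, replace_all=False):
    """Model the outcome of configimport on a set of configuration objects.

    Both arguments map a configuration object name to its content.
    """
    if replace_all:
        # --replaceAll: the result is exactly the imported set, so objects
        # that exist only in the current configuration are dropped.
        return dict(imported)
    # Default: imported objects are added or updated, and objects missing
    # from the import location are left untouched.
    merged = dict(existing)
    merged.update(imported)
    return merged


existing = {"audit": {"v": 1}, "sync": {"v": 1}}
imported = {"sync": {"v": 2}, "router": {"v": 1}}
print(sorted(import_config(existing, imported)))
print(sorted(import_config(existing, imported, replace_all=True)))
```

In this model the audit object survives a default import but is removed when replace_all is set, which is why exporting the current configuration before using --replaceAll is a sensible precaution.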

3.3. configureconnector

The configureconnector subcommand generates a configuration for an OpenICF connector.

Usage is as follows:

$ ./cli.sh configureconnector --user username:password connector-name

Select the type of connector that you want to configure. The following example configures a new XML connector.

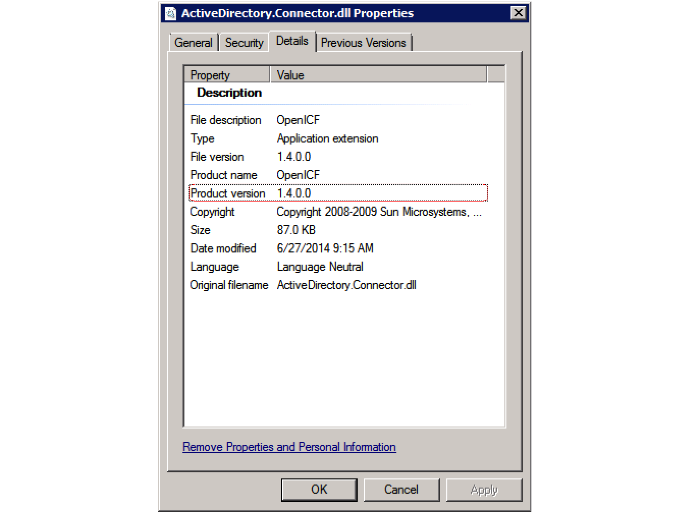

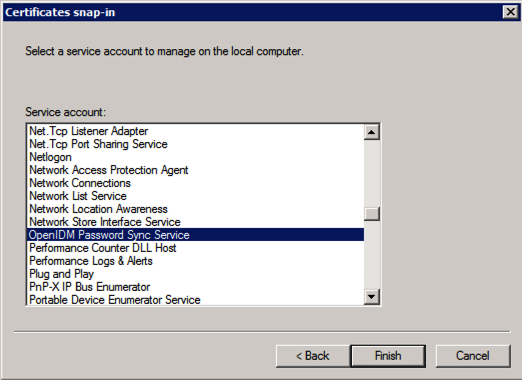

$ ./cli.sh configureconnector --user openidm-admin:openidm-admin myXmlConnector

Starting shell in /path/to/openidm

Using boot properties at /path/to/openidm/conf/boot/boot.properties

0. CSV File Connector version 1.1.0.2

1. Database Table Connector version 1.1.0.1

2. Scripted Poolable Groovy Connector version 1.4.1.0

3. Scripted Groovy Connector version 1.4.1.0

4. Scripted CREST Connector version 1.4.1.0

5. Scripted SQL Connector version 1.4.1.0

6. Scripted REST Connector version 1.4.1.0

7. LDAP Connector version 1.4.0.1

8. XML Connector version 1.1.0.2

9. Exit

Select [0..9]: 8

Edit the configuration file and run the command again. The configuration was saved to /openidm/temp/provisioner.openicf-myXmlConnector.json

The basic configuration is saved in a file named

/openidm/temp/provisioner.openicf-connector-name.json.

Edit the configurationProperties parameter in this file to

complete the connector configuration. For an XML connector, you can use the

schema definitions in sample 1 for an example configuration.

"configurationProperties" : {

"xmlFilePath" : "samples/sample1/data/xmlConnectorData.xml",

"createFileIfNotExists" : false,

"xsdFilePath" : "samples/sample1/data/resource-schema-extension.xsd",

"xsdIcfFilePath" : "samples/sample1/data/resource-schema-1.xsd"

},

For more information about the connector configuration properties, see Section 11.3, "Configuring Connectors".
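
If you prefer to script the edit rather than make it by hand, the generated file can be updated with any JSON library. The following Python sketch merges values into configurationProperties. The function name set_configuration_properties is hypothetical, the paths are placeholders, and the stand-in file written for the demonstration contains far less than a real generated file.

```python
import json
import os
import tempfile


def set_configuration_properties(path, properties):
    """Merge the given values into "configurationProperties" in a JSON file."""
    with open(path) as handle:
        config = json.load(handle)
    config.setdefault("configurationProperties", {}).update(properties)
    with open(path, "w") as handle:
        json.dump(config, handle, indent=4)
    return config


# Demonstration on a stand-in file; a real generated file has more content.
demo_dir = tempfile.mkdtemp()
demo_path = os.path.join(demo_dir, "provisioner.openicf-myXmlConnector.json")
with open(demo_path, "w") as handle:
    json.dump({"name": "myXmlConnector", "configurationProperties": {}}, handle)

updated = set_configuration_properties(demo_path, {
    "xmlFilePath": "/path/to/data.xml",        # placeholder path
    "xsdFilePath": "/path/to/extension.xsd",   # placeholder path
    "xsdIcfFilePath": "/path/to/schema.xsd",   # placeholder path
    "createFileIfNotExists": False,
})
```

Properties that are already present in the file and not named in the call are left as they are.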

When you have modified the file, run the configureconnector command again so that OpenIDM can pick up the new connector configuration.

$ ./cli.sh configureconnector --user openidm-admin:openidm-admin myXmlConnector

Executing ./cli.sh...

Starting shell in /path/to/openidm

Using boot properties at /path/to/openidm/conf/boot/boot.properties

Configuration was found and read from: /path/to/openidm/temp/provisioner.openicf-myXmlConnector.json

You can now copy the new

provisioner.openicf-myXmlConnector.json file

to the conf/ subdirectory.

You can also configure connectors over the REST interface. For more information, see Section 11.6, "Creating Default Connector Configurations".

3.4. encrypt

The encrypt subcommand encrypts an input string, or JSON object, provided at the command line. This subcommand can be used to encrypt passwords, or other sensitive data, to be stored in the OpenIDM repository. The encrypted value is output to standard output and provides details of the cryptography key that is used to encrypt the data.

Usage is as follows:

$ ./cli.sh encrypt [-j] string

The -j option specifies that the string to be

encrypted is a JSON object. If you do not enter the string as part of the

command, the command prompts for the string to be encrypted. If you enter

the string as part of the command, any special characters, for example

quotation marks, must be escaped.

The following example encrypts a normal string value:

$ ./cli.sh encrypt mypassword

Executing ./cli.sh

Starting shell in /path/to/openidm

Using boot properties at /path/to/openidm/conf/boot/boot.properties

Activating cryptography service of type: JCEKS provider: location: security/keystore.jceks

Available cryptography key: openidm-sym-default

Available cryptography key: openidm-localhost

CryptoService is initialized with 2 keys.

-----BEGIN ENCRYPTED VALUE-----

{

"$crypto" : {

"value" : {

"iv" : "M2913T5ZADlC2ip2imeOyg==",

"data" : "DZAAAM1nKjQM1qpLwh3BgA==",

"cipher" : "AES/CBC/PKCS5Padding",

"key" : "openidm-sym-default"

},

"type" : "x-simple-encryption"

}

}

------END ENCRYPTED VALUE------

The following example encrypts a JSON object. The input string must be a valid JSON object.

$ ./cli.sh encrypt -j {\"password\":\"myPassw0rd\"}

Starting shell in /path/to/openidm

Using boot properties at /path/to/openidm/conf/boot/boot.properties

Activating cryptography service of type: JCEKS provider: location: security/keystore.jceks

Available cryptography key: openidm-sym-default

Available cryptography key: openidm-localhost

CryptoService is initialized with 2 keys.

-----BEGIN ENCRYPTED VALUE-----

{

"$crypto" : {

"value" : {

"iv" : "M2913T5ZADlC2ip2imeOyg==",

"data" : "DZAAAM1nKjQM1qpLwh3BgA==",

"cipher" : "AES/CBC/PKCS5Padding",

"key" : "openidm-sym-default"

},

"type" : "x-simple-encryption"

}

}

------END ENCRYPTED VALUE------
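
Escaping quotation marks by hand, as in the JSON example above, is error-prone when the command is generated by a script. The following Python sketch shows one alternative for POSIX shells: serialize the object with json.dumps and quote it with shlex.quote from the standard library. Whether cli.sh preserves an argument that contains spaces was not verified here, so the sketch emits compact JSON without spaces.

```python
import json
import shlex

# Compact separators avoid spaces inside the JSON text.
payload = json.dumps({"password": "myPassw0rd"}, separators=(",", ":"))
# shlex.quote wraps the value so that a POSIX shell passes it through unchanged.
command = "./cli.sh encrypt -j " + shlex.quote(payload)
print(command)
```

The quoted form uses single quotation marks around the whole object, so the double quotation marks inside it no longer need backslashes.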

The following example prompts for a JSON object to be encrypted. In this case, you need not escape the special characters.

$ ./cli.sh encrypt -j

Using boot properties at /path/to/openidm/conf/boot/boot.properties

Enter the Json value

> Press ctrl-D to finish input

Start data input:

{"password":"myPassw0rd"}

^D

Activating cryptography service of type: JCEKS provider: location: security/keystore.jceks

Available cryptography key: openidm-sym-default

Available cryptography key: openidm-localhost

CryptoService is initialized with 2 keys.

-----BEGIN ENCRYPTED VALUE-----

{

"$crypto" : {

"value" : {

"iv" : "6e0RK8/4F1EK5FzSZHwNYQ==",

"data" : "gwHSdDTmzmUXeD6Gtfn6JFC8cAUiksiAGfvzTsdnAqQ=",

"cipher" : "AES/CBC/PKCS5Padding",

"key" : "openidm-sym-default"

},

"type" : "x-simple-encryption"

}

}

------END ENCRYPTED VALUE------
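
When the encrypt subcommand is called from a script, the encrypted object has to be separated from the surrounding log lines. The following Python sketch extracts the JSON between the two marker lines shown in the examples above. The function name extract_encrypted_value is hypothetical, and the marker strings are copied from the sample output in this section, so other OpenIDM versions may print them differently.

```python
import json

BEGIN = "-----BEGIN ENCRYPTED VALUE-----"
END = "------END ENCRYPTED VALUE------"


def extract_encrypted_value(output):
    """Return the JSON object printed between the BEGIN and END markers."""
    start = output.index(BEGIN) + len(BEGIN)
    end = output.index(END, start)
    return json.loads(output[start:end])


sample = """CryptoService is initialized with 2 keys.
-----BEGIN ENCRYPTED VALUE-----
{
  "$crypto" : {
    "value" : {
      "iv" : "6e0RK8/4F1EK5FzSZHwNYQ==",
      "data" : "gwHSdDTmzmUXeD6Gtfn6JFC8cAUiksiAGfvzTsdnAqQ=",
      "cipher" : "AES/CBC/PKCS5Padding",
      "key" : "openidm-sym-default"
    },
    "type" : "x-simple-encryption"
  }
}
------END ENCRYPTED VALUE------
"""
value = extract_encrypted_value(sample)
print(value["$crypto"]["value"]["key"])
```

The function raises ValueError if either marker is missing, which gives a calling script a clear signal that the command did not produce an encrypted value.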

3.5. keytool

The keytool subcommand exports or imports secret key values.

The Java keytool command enables you to export and import public keys and certificates, but not secret or symmetric keys. The OpenIDM keytool subcommand provides this functionality.

Usage is as follows:

$ ./cli.sh keytool [--export, --import] alias

For example, to export the default OpenIDM symmetric key, run the following command:

$ ./cli.sh keytool --export openidm-sym-default

Using boot properties at /openidm/conf/boot/boot.properties

Use KeyStore from: /openidm/security/keystore.jceks

Please enter the password:

[OK] Secret key entry with algorithm AES

AES:606d80ae316be58e94439f91ad8ce1c0

The default keystore password is changeit. You

should change this password after installation.
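
The exported value in the example above appears to consist of an algorithm name, a colon, and the key in hexadecimal. The following Python sketch splits such a string and reports the key size. The format is inferred from that single example and the function name parse_secret_key is hypothetical, so treat this as an assumption rather than a specification.

```python
def parse_secret_key(text):
    """Split an 'ALGORITHM:hex' string into the algorithm and raw key bytes."""
    algorithm, _, hex_key = text.strip().partition(":")
    if not algorithm or not hex_key:
        raise ValueError("expected ALGORITHM:hexkey")
    return algorithm, bytes.fromhex(hex_key)


algorithm, key = parse_secret_key("AES:606d80ae316be58e94439f91ad8ce1c0")
print(algorithm, len(key) * 8)
```

An exported secret key is sensitive material. The value used here is the one already printed in this guide and should not be reused.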

To import a new secret key named my-new-key, run the following command:

$ ./cli.sh keytool --import my-new-key

Using boot properties at /openidm/conf/boot/boot.properties

Use KeyStore from: /openidm/security/keystore.jceks

Please enter the password:

Enter the key: AES:606d80ae316be58e94439f91ad8ce1c0

If a secret key of that name already exists, OpenIDM returns the following error:

"KeyStore contains a key with this alias"

3.6. validate

The validate subcommand validates all .json

configuration files in the openidm/conf/ directory.

Usage is as follows:

$ ./cli.sh validate

Executing ./cli.sh

Starting shell in /path/to/openidm

Using boot properties at /path/to/openidm/conf/boot/boot.properties

...................................................................

[Validating] Load JSON configuration files from:

[Validating] /path/to/openidm/conf

[Validating] audit.json .................................. SUCCESS

[Validating] authentication.json ......................... SUCCESS

...

[Validating] sync.json ................................... SUCCESS

[Validating] ui-configuration.json ....................... SUCCESS

[Validating] ui-countries.json ........................... SUCCESS

[Validating] ui-secquestions.json ........................ SUCCESS

[Validating] workflow.json ............................... SUCCESS
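
Before running the validate subcommand, a quick syntax check can catch malformed files early. The following Python sketch only tests whether each .json file in a directory parses as JSON. The validate subcommand may check more than syntax, so this is a pre-check under that assumption and not a replacement. The function name check_json_files and the stand-in files are hypothetical.

```python
import json
import os
import tempfile


def check_json_files(conf_dir):
    """Return {file name: None or error message} for each .json file."""
    results = {}
    for name in sorted(os.listdir(conf_dir)):
        if not name.endswith(".json"):
            continue
        try:
            with open(os.path.join(conf_dir, name)) as handle:
                json.load(handle)
            results[name] = None
        except ValueError as error:  # json.JSONDecodeError subclasses ValueError
            results[name] = str(error)
    return results


# Demonstration with two stand-in files in a temporary directory.
demo_conf = tempfile.mkdtemp()
with open(os.path.join(demo_conf, "audit.json"), "w") as handle:
    handle.write('{"ok": true}')
with open(os.path.join(demo_conf, "sync.json"), "w") as handle:
    handle.write('{"mappings": [')  # deliberately truncated

for name, error in check_json_files(demo_conf).items():
    print(name, "SUCCESS" if error is None else "FAILED: " + error)
```

Pointing the function at /path/to/openidm/conf would check the same directory that the validate subcommand reads by default.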

Chapter 4. OpenIDM Web-based User Interfaces

OpenIDM provides a customizable, browser-based user interface, known as the

User View UI. To take full advantage of this interface, you can configure

OpenIDM managed objects (see Section C.1, "Managed Objects") under

managed/user. Most of the OpenIDM samples demonstrate how

you can deploy OpenIDM with managed users.

The User View UI enables administrative users to create, modify,

and delete user accounts. It provides role-based access to tasks based on

BPMN2 workflows, and allows users to manage certain aspects of their own

accounts, including configurable self-service registration. When OpenIDM

starts, you can access the User View UI at

https://localhost:8443/openidmui.

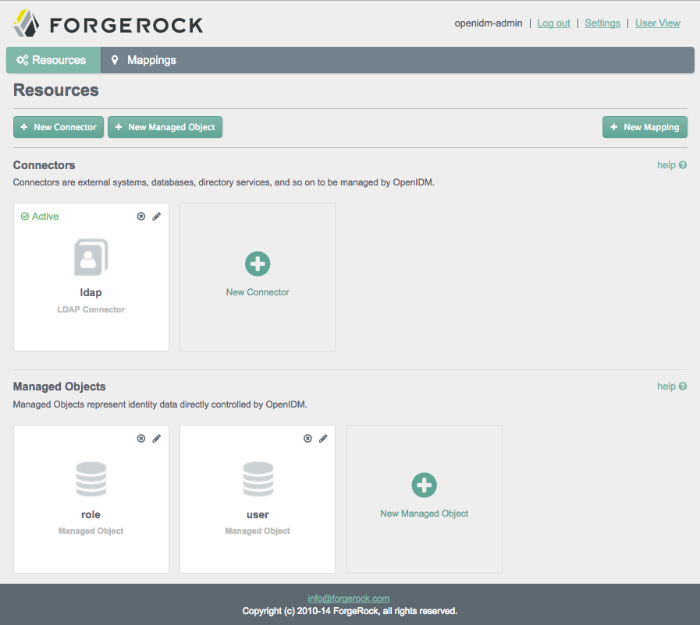

OpenIDM also provides a configurable administrative user interface (Admin UI)

that allows you to configure connectors, customize managed objects, set up

attribute mappings, and more. When OpenIDM starts, you can access the Admin UI

at https://localhost:8443/admin.

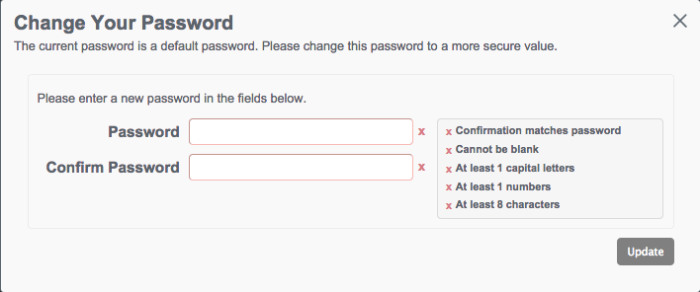

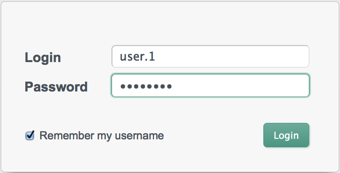

The first time you log into either UI as the openidm-admin administrative user, use the default password, openidm-admin. Because that default password is not secure, the UI opens a window that prompts you to change it, and we recommend that you do so in production. You can bypass that window by clicking the X in the upper right corner.

4.1. Configuring OpenIDM from the Admin UI

You can set up a basic configuration for OpenIDM with the Administrative User Interface (Admin UI).

Through the Admin UI, you can connect to resources, configure attribute mapping, and set up managed objects, reconciled on a defined schedule.

You can customize the configuration of connectors, managed objects, mapping between resources, and more. You can add and edit properties to be synchronized, configure correlation queries, and enable LiveSync.

To access the initial Admin UI screen, navigate to

https://localhost:8443/admin.

The information that appears in the initial Admin UI screen depends on how you started OpenIDM. For example, if you start OpenIDM with one of the OpenIDM samples (see Chapter 3, "More OpenIDM Samples" in the Installation Guide), your first Resources screen will display connectors and managed objects as configured in the selected sample.

As shown in the initial screen, the Admin UI supports connecting to external resources (see Chapter 11, "Connecting to External Resources") and managing users, groups, and roles (see Chapter 8, "Managing Users, Groups, and Roles").

Scroll up and down the Admin UI screen. Review configured connectors and managed objects. The screenshots in this section assume that you have started OpenIDM with the configuration for Sample 2b (Section 3.4, "Sample 2b - LDAP Two Way" in the Installation Guide).

Note

While a connector is not a resource, it provides a connection to remote resources such as LDAP data stores, XML files, and other databases.

Connectors are used to communicate with remote resources, such as a database, an identity store, or another organized data store.

Once you connect one or more data stores and managed objects, you can create a mapping between two resources. You can then configure property mapping between those resources, for later synchronization.

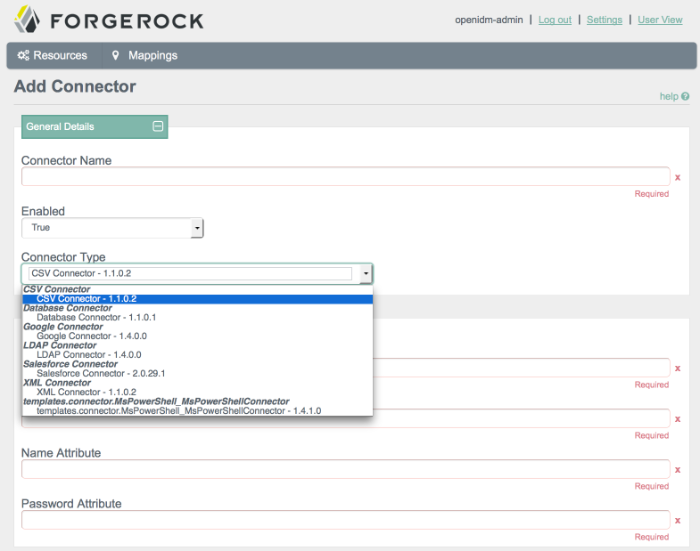

4.1.1. Administering Connectors from the UI

You can include several different connectors in an OpenIDM configuration. Select the option to create a new connector. Try some of the different connector types in the screen that appears. Observe as the Admin UI changes the configuration options to match the requirements of the connector type.

Remember, every connector serves as a conduit to an external data store.

If you are not sure what to enter in a specific Add Connector text box, review the list of connectors supported with OpenIDM (Section 11.5, "Connectors Supported With OpenIDM 3.1"). You should be able to find guidance and examples on how each supported connector is configured.

For additional guidance, review sample connector files in the

samples/provisioners/ subdirectory.

Once you fill in all required text boxes, the Admin UI allows you to validate the connector configuration.

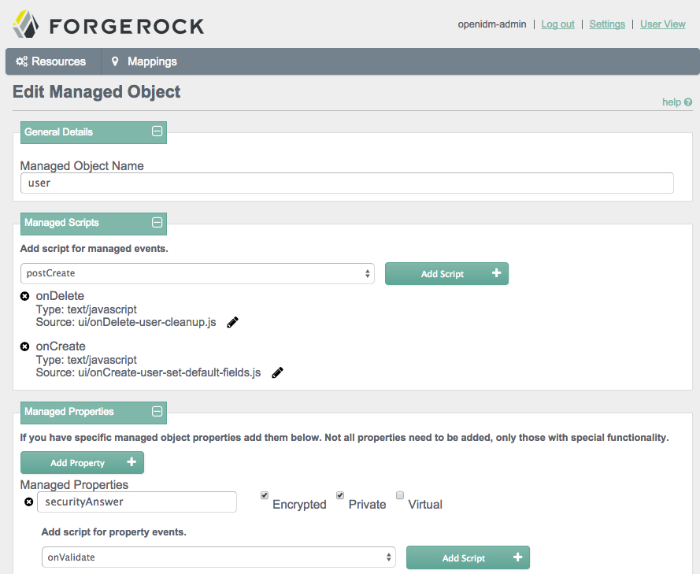

4.1.2. Administering Managed Objects from the UI

You can set up Managed Objects in a similar way to how you set up connectors. Typically, OpenIDM uses managed objects as described in Chapter 8, "Managing Users, Groups, and Roles".

To access the details of a Managed Object, go to the Resources screen. Select the Edit icon associated with the Managed Object that you want to change, such as user, group, or role.

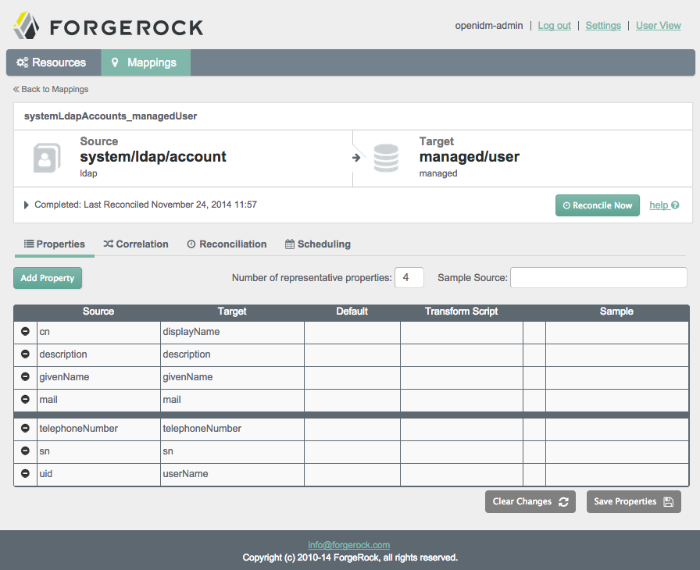

4.1.3. Configuring a Resource Mapping from the UI

You may have multiple connectors and managed objects. You can configure a mapping between any two of these resources. Connectors represent external data stores, as specified in Section 11.3, "Configuring Connectors". In contrast, managed objects represent a data store internal to OpenIDM.

Resource mapping requires a source and a target. Generally, each resource includes properties such as username, address, surname, and title. Resource mapping can go beyond user information to other types of data.

Once you create a mapping, you can identify properties that you wish to control in the target. With this part of the Admin UI, you can configure how OpenIDM uses matching properties in the source.

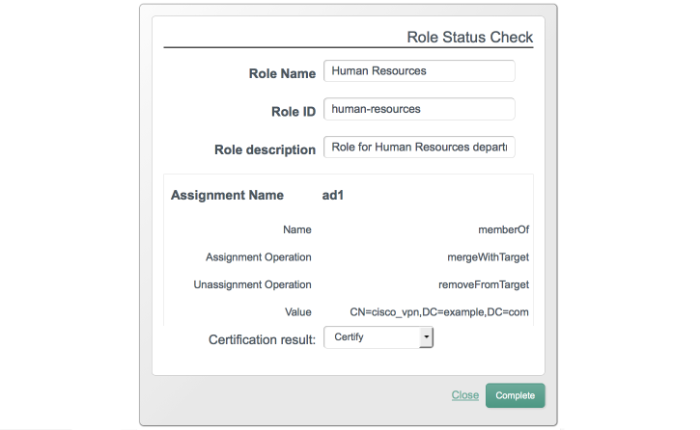

The following screenshot illustrates a property mapping between two resources. Every resource includes identifiers for each attribute. For example, different resources may use one of the following identifiers for usernames: un, uid, user, sAMAccountName, and account. For more information on property mappings, see Section 12.3.2, "Synchronization Mappings File".

The bold black line is used solely by the UI to determine what data is shown from these data stores. It does not affect reconciliation. In the Admin UI, you can change the location of the line, or move a property above or below the line.

4.1.4. Configuring Reconciliation from the UI

Reconciliation changes data on a target system to match the corresponding data on a source system. You can customize how OpenIDM performs reconciliation in several ways.

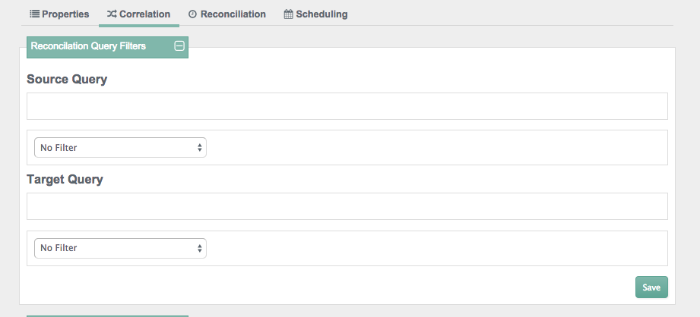

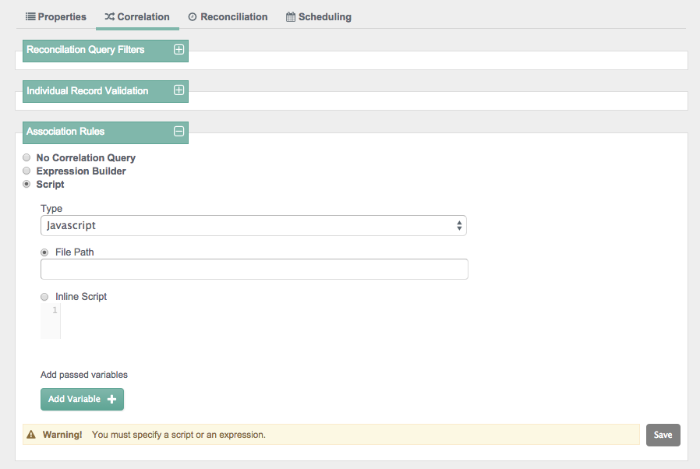

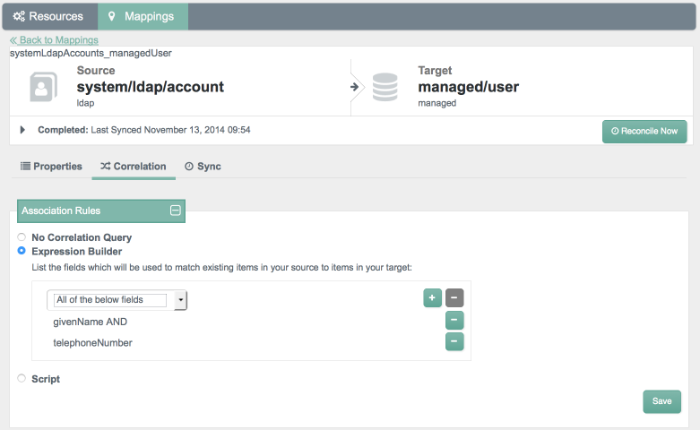

4.1.4.1. Correlation Options

OpenIDM can match existing records on the target system to records on the source system. This matching is known as correlation.

Before activating reconciliation, you may choose to configure various correlation options, including reconciliation query filters, individual record validation, association rules, and data association management.

For more information on correlation, see Section 12.17, "Correlation Queries".

You can add reconciliation query filters, with queries on the source and

target objects. For more information, see Section 7.3.4, "Constructing Queries".

You can set up queries on properties such as userName

and parameters such as Smith.

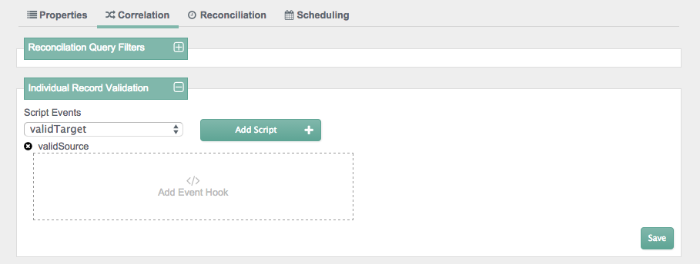

You can add individual record validation scripts, based on the

validSource and validTarget

script objects. For more information on these objects, see

Section D.1, "Object-Mapping Objects". With these scripts, you can set up

criteria for OpenIDM to validate source and target objects for reconciliation.

You can set up correlation queries (Section 12.17, "Correlation Queries") with an expression builder or with a JavaScript or Groovy-based script.

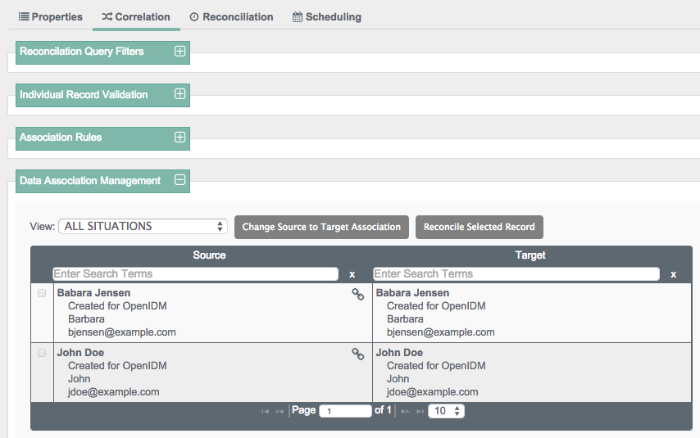

When a reconciliation operation finds a matching target entry, the process creates a link between the source and the target entries. This is also known as a Data Association. Data associations serve two purposes - they speed up future reconciliation operations, and they serve as a record of the relationship between a source and a target entry.

You can set up such data associations for individual entries, as well as different situations shown in Section 12.13.1, "Synchronization Situations".
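
The idea behind correlation and data associations can be shown with a small, self-contained Python sketch. It is a conceptual toy, not OpenIDM code: the function correlate, the record layout, and the sample identifiers are all hypothetical. It matches records on one property and records a link for each unambiguous match.

```python
def correlate(source_records, target_records, source_key, target_key):
    """Pair source and target records whose key values match.

    Returns (links, unmatched), where links is a list of
    (source id, target id) pairs and unmatched lists the source ids
    that have no single matching target.
    """
    targets_by_value = {}
    for record in target_records:
        targets_by_value.setdefault(record[target_key], []).append(record["_id"])

    links, unmatched = [], []
    for record in source_records:
        matches = targets_by_value.get(record[source_key], [])
        if len(matches) == 1:
            links.append((record["_id"], matches[0]))
        else:
            # No match, or an ambiguous match: left for a policy to resolve.
            unmatched.append(record["_id"])
    return links, unmatched


source = [{"_id": "s1", "uid": "bjensen"}, {"_id": "s2", "uid": "scarter"}]
target = [{"_id": "t9", "userName": "bjensen"}]
print(correlate(source, target, "uid", "userName"))
```

Once a link exists, a later run can look up the linked target directly instead of repeating the search, which is the speed benefit described above.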

4.1.4.2. Reconciliation Options

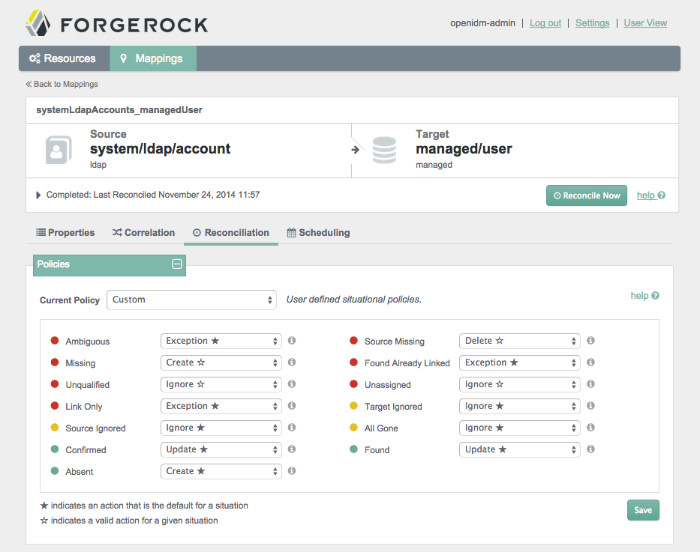

Before activating reconciliation, you may choose to configure situational policies, situational event scripts, and reconciliation scripts.

You may want to configure situational policies. By default, the policies associated with mappings are set to "read-only". As such, OpenIDM does not change anything on the target system, unless you make changes to situational policies.

With situational policies, you can define actions relevant for situations shown in the screenshot below. For a full list of available policies, see Section 12.13, "Synchronization Situations and Actions".

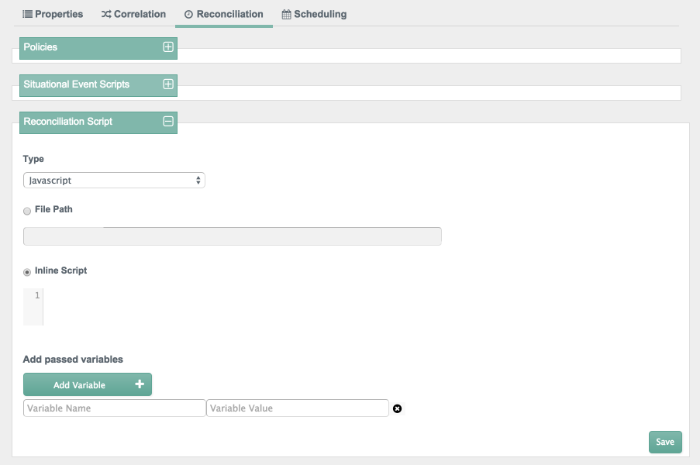

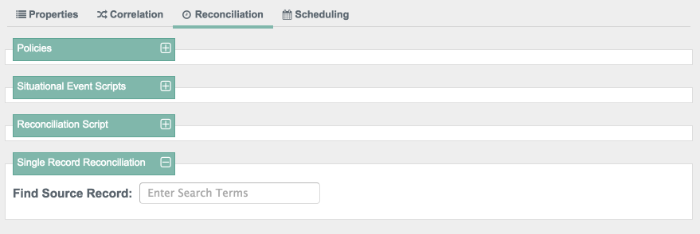

To access the following screen, select the Mapping of your choice and select edit. In the sub-tabs that appear, select Reconciliation.

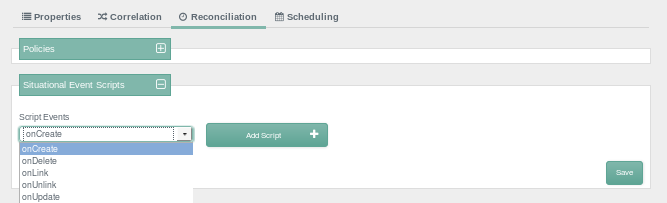

You can configure situational event scripts. Each of the script events shown (onCreate, onDelete, onLink, onUnlink, and onUpdate) can help you with constructing attributes. For more information, see Section 12.3.5, "Constructing and Manipulating Attributes".

You can configure scripts that are triggered on reconciliation, as part of the dataflow configuration (see Section 12.18, "Advanced Data Flow Configuration").

You can choose to test the overall process with a single record reconciliation. In other words, you need not test these policies and scripts against the entire data set. For example, you can restrict reconciliation to a specific ID (see Section 12.6, "Restricting Reconciliation to a Specific ID").

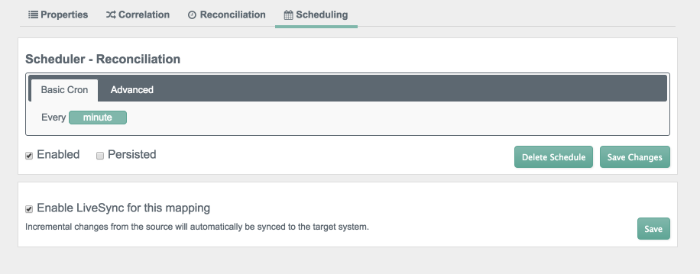

Finally, you should set up a schedule, as described in Section 12.19.1, "Configuring Scheduled Synchronization".

You can also configure LiveSync, which captures the changes that happen on a remote system, and then pushes those changes to OpenIDM.

For more information on Reconciliation and LiveSync, see Section 12.1, "Types of Synchronization".

You can manage reconciliation and LiveSync with the embedded Quartz scheduler. For more information, see Chapter 13, "Scheduling Tasks and Events".

Once you have set up reconciliation based on configured properties, correlation queries, and a synchronization schedule, you can start the synchronization process from a source to a target. When the synchronization is complete, you can review the results in this window, and the changes appear in the target data store.

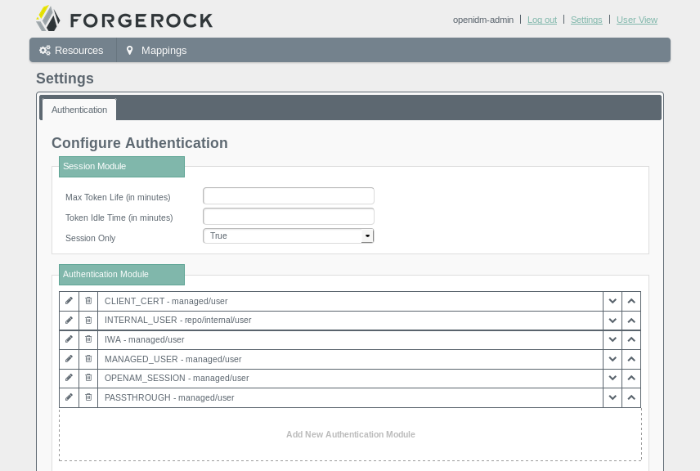

4.1.5. Configuring Authentication Modules from the UI

You can also configure authentication modules from the Admin UI. To access those modules, click Settings.

The page shown here displays several available modules, based on the ForgeRock Common Authentication Framework. For more information on each module, see Section 15.3, "Supported Authentication Modules".

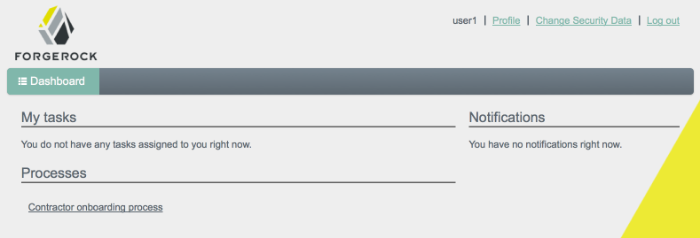

4.2. Overview of the User View UI

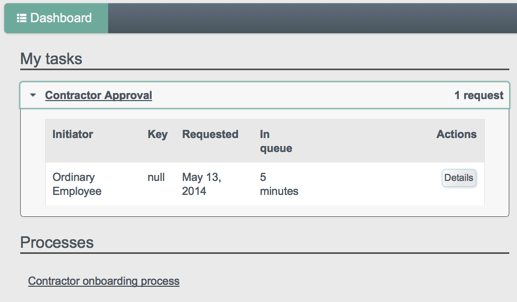

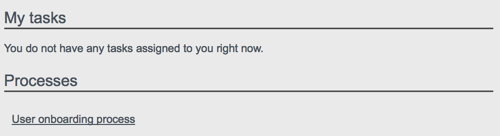

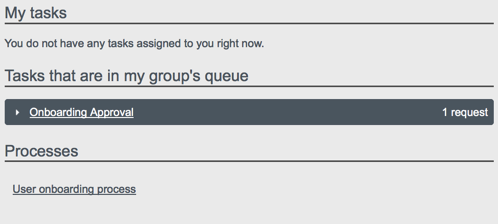

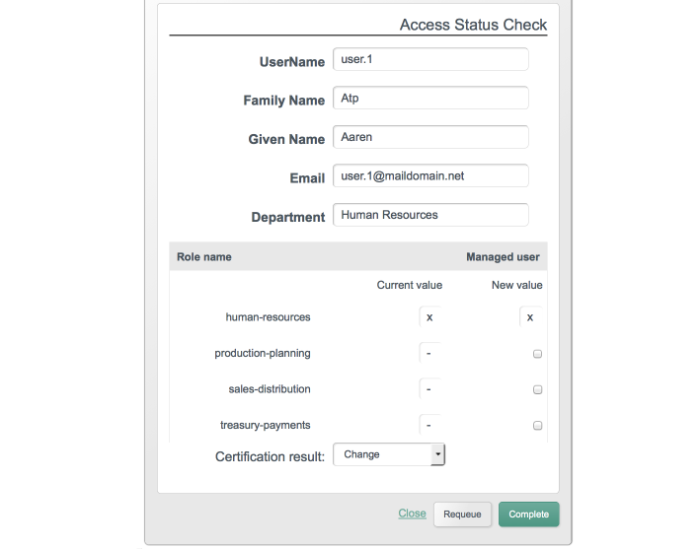

For all users, the User View UI includes a Dashboard tab, which lists any tasks assigned to the user who has logged in, processes available to be invoked, and any notifications for that user.

For the administrative user (role openidm-admin), the

User View UI also includes a Users tab, which provides an interface for

user entries, if you have configured managed users in the OpenIDM repository.

To access the User View UI, install and start OpenIDM, then point your browser to https://localhost:8443/openidmui. If you have not installed a certificate that is trusted by a certificate authority, you are prompted with an "Untrusted Connection" warning the first time you log in to the UI.

The following image shows the Dashboard tab for the administrative user when no tasks, processes, or notifications are available.

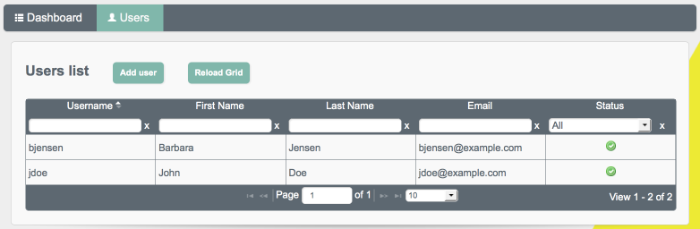

The following image shows the Users tab, populated with two sample users, after a reconciliation associated with Sample 2b.

You can sort the list of users alphabetically, by any of the column values. Click on the column title to sort.

The Profile link enables the user to modify his username or password. The Change Security Data link, accessed from the top of the screen or from the user's Profile page, enables the user to change his password and, if this functionality has been enabled, to select a new security question.

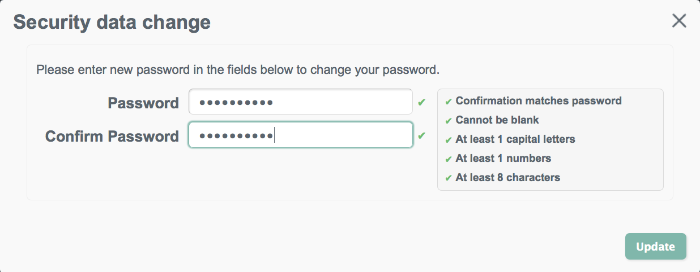

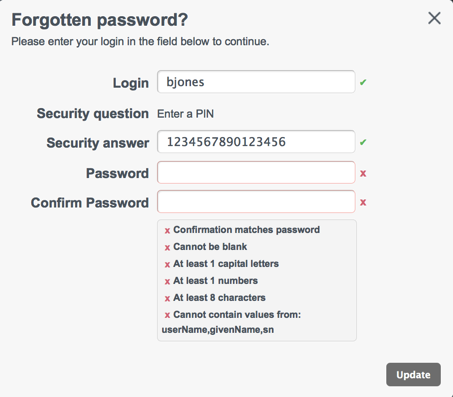

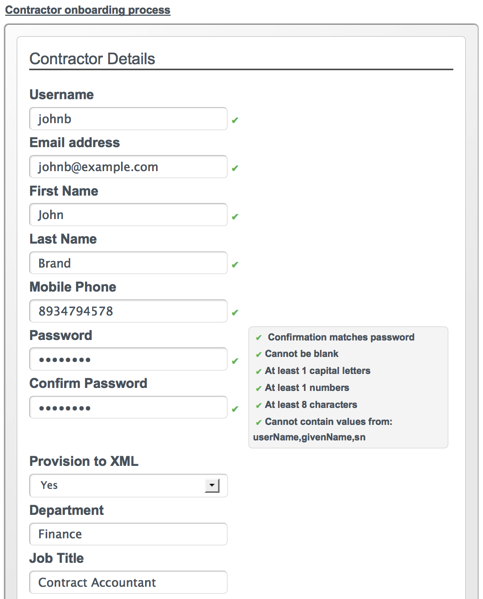

Password changes are subject to the default password policy, as shown in the following password update screen.

For a regular user (role openidm-authorized), the Users

tab is not displayed. By default, regular users cannot manage user accounts,

except for certain aspects of their own accounts.

4.3. Configuring the User View UI

The following sections outline the configurable aspects of the User View UI.

4.3.1. Enabling Self-Registration

Self-registration (the ability for new users to create their own accounts)

is disabled by default. To enable self-registration, set

"selfRegistration" to true in the UI

configuration file (conf/ui-configuration.json).

{

"configuration" : {

"selfRegistration" : true,

...

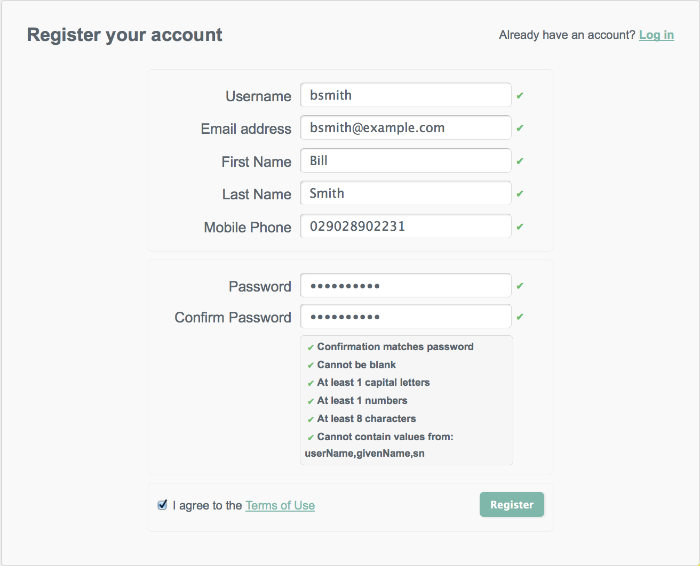

When self-registration is enabled, a "Register your account" link is provided on the login page. When a user creates an account on the account registration page, a managed object is created in the OpenIDM repository. The default policies for managed objects are applied during account creation.

User objects created using self-registration automatically have the role

openidm-authorized.
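
If you manage conf/ui-configuration.json with a script, a flag such as selfRegistration can be switched on with any JSON library. The following Python sketch works on the file content as text. The function name enable_ui_feature is hypothetical, and the one-property input is a stand-in for the real file, which contains more settings.

```python
import json


def enable_ui_feature(config_text, feature):
    """Return the UI configuration text with the given feature set to true."""
    config = json.loads(config_text)
    config.setdefault("configuration", {})[feature] = True
    return json.dumps(config, indent=4)


before = '{"configuration": {"selfRegistration": false}}'
after = enable_ui_feature(before, "selfRegistration")
print(after)
```

Other properties under "configuration" are preserved, because the function changes only the named flag.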

4.3.2. Configuring Security Questions

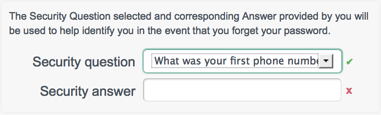

In the event that a user forgets his password, a password reset function enables registered users to reset their own passwords. To guard against unauthorized access, you can specify that users be prompted with one or more security questions when they request a password reset.

Security questions are disabled by default. To enable them, set

"securityQuestions" to true in the UI

configuration file (conf/ui-configuration.json).

{

"configuration" : {

"securityQuestions" : true,

...

A default set of questions is provided, but you can add to these, or

overwrite them. Specify the list of questions to be asked in the

conf/ui-secquestions.json file.

Refresh your browser after this configuration change for the change to be picked up by the UI.

When security questions are enabled, the following panel is included on the self registration page.

In addition, a "Reset your password" link is provided on the login page. When a user attempts to reset her password, she is prompted for the response to the security question that she set up during registration.

Note

If security questions are enabled after a specific user has registered, that particular user will be unable to use the password reset functionality.

4.3.3. Minimum Length Security Answers

The password, passphrase, and security answer are all associated with

some minimum length. To change that minimum, edit the

conf/policy.json file, setting the

minLength property to the required minimum.

For example, the following excerpt from the

conf/policy.json file shows a default

minLength of 16 associated with the security answer.

{

"policyId" : "minimum-length",

"params" : {

"minLength" : 16

}

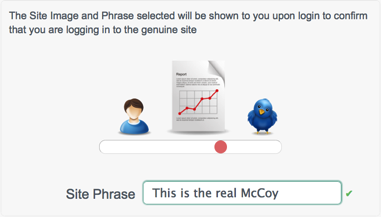

},4.3.4. Enabling Site Identification

To ensure that users are entering their details onto the correct site, you can enable site identification. Site identification provides a preventative measure against phishing.

With site identification enabled, a user is presented with a range of images from which he can select when he registers his account, and prompted to specify his own site phrase. The selected site image and phrase are displayed on login, to confirm that the user is logging in to the legitimate site.

To enable site identification, set "siteIdentification"

to true in the UI configuration file

(conf/ui-configuration.json).

{

"configuration" : {

"siteIdentification" : true,

...

Refresh your browser after this configuration change for the change to be picked up by the UI.

When site identification is enabled, the following panel is included on the self registration page.

A default list of four images is presented for site identification. The

images are defined in the siteImages property in the

conf/ui-configuration.json file:

"siteImages" : [ "images/passphrase/mail.png", "images/passphrase/user.png", "images/passphrase/report.png", "images/passphrase/twitter.png" ], ...

You can change the default images, and include additional images, by

placing image files in the ui/extension/images folder

and modifying the siteImages property in the

ui-configuration.json file to point to the new images.

Refresh your browser for the change to take effect.

The following example assumes an image file named

my-new-image.jpg, located in

ui/extension/images.

"siteImages" : [ "images/passphrase/mail.png", "images/passphrase/user.png", "images/passphrase/report.png", "images/passphrase/twitter.png", "images/my-new-image.jpg" ], ...

Note that the default image files are located in

ui/default/enduser/public/images/passphrase.
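
Adding an image to the siteImages property can also be scripted. The following Python sketch appends a path to the list only if it is not already present. The function name add_site_image is hypothetical, and the dictionary stands in for whichever object in ui-configuration.json holds the siteImages property.

```python
def add_site_image(configuration, image_path):
    """Append image_path to "siteImages" unless it is already listed."""
    images = configuration.setdefault("siteImages", [])
    if image_path not in images:
        images.append(image_path)
    return configuration


configuration = {
    "siteImages": [
        "images/passphrase/mail.png",
        "images/passphrase/user.png",
        "images/passphrase/report.png",
        "images/passphrase/twitter.png",
    ]
}
add_site_image(configuration, "images/my-new-image.jpg")
add_site_image(configuration, "images/my-new-image.jpg")  # no duplicate added
print(len(configuration["siteImages"]))
```

The duplicate check keeps the list stable when the same deployment script runs more than once.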

4.3.5. Configuring the Country List

The default user profile includes the ability to select the user's country

and state or province. To specify the countries, and the associated states

or provinces, that appear in these drop down lists, edit the

conf/ui-countries.json file. For example, to add

Norway to the list of countries, you would add the following to the

conf/ui-countries.json file:

{

"key" : "norway",

"value" : "Norway",

"states" : [

{

"key" : "akershus",

"value" : "Akershus"

},

{

"key" : "aust-agder",

"value" : "Aust-Agder"

},

{

"key" : "buskerud",

"value" : "Buskerud"

},

...

Refresh your browser after this configuration change for the change to be picked up by the UI.
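
Country entries follow a regular shape, so they can be generated rather than typed. The following Python sketch builds one entry in the form shown above. The function name country_entry is hypothetical, and deriving each key by lower-casing the display name is an assumption based on the Norway example; the guide does not show how keys are formed for names that contain spaces.

```python
def country_entry(name, states):
    """Build one country object with lower-case keys derived from the names."""
    return {
        "key": name.lower(),
        "value": name,
        "states": [{"key": s.lower(), "value": s} for s in states],
    }


entry = country_entry("Norway", ["Akershus", "Aust-Agder", "Buskerud"])
print(entry["states"][1])
```

Serializing the result with json.dumps gives text that can be pasted into conf/ui-countries.json.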

4.4. Managing User Accounts With the User View UI

Only administrative users (with the role openidm-admin)

can add, modify, and delete user accounts. Regular users can modify certain

aspects of their own accounts.

Log into the user interface as an administrative user.

Select the Users tab.

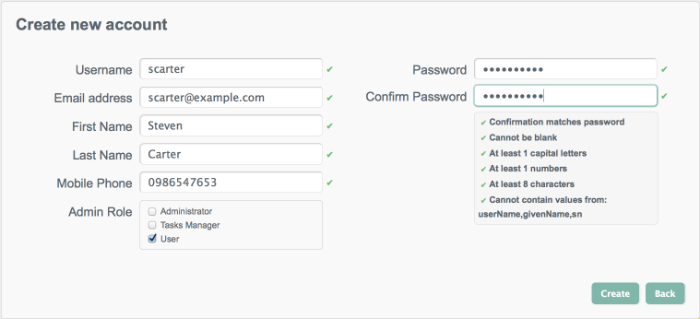

Click Add User.

Complete the fields on the Create new account page.

Most of these fields are self-explanatory. Be aware that the user interface is subject to policy validation, as described in Chapter 9, "Using Policies to Validate Data". So, for example, the Email address must be of valid email address format, and the Password must comply with the password validation settings that are indicated in the panel to the right.

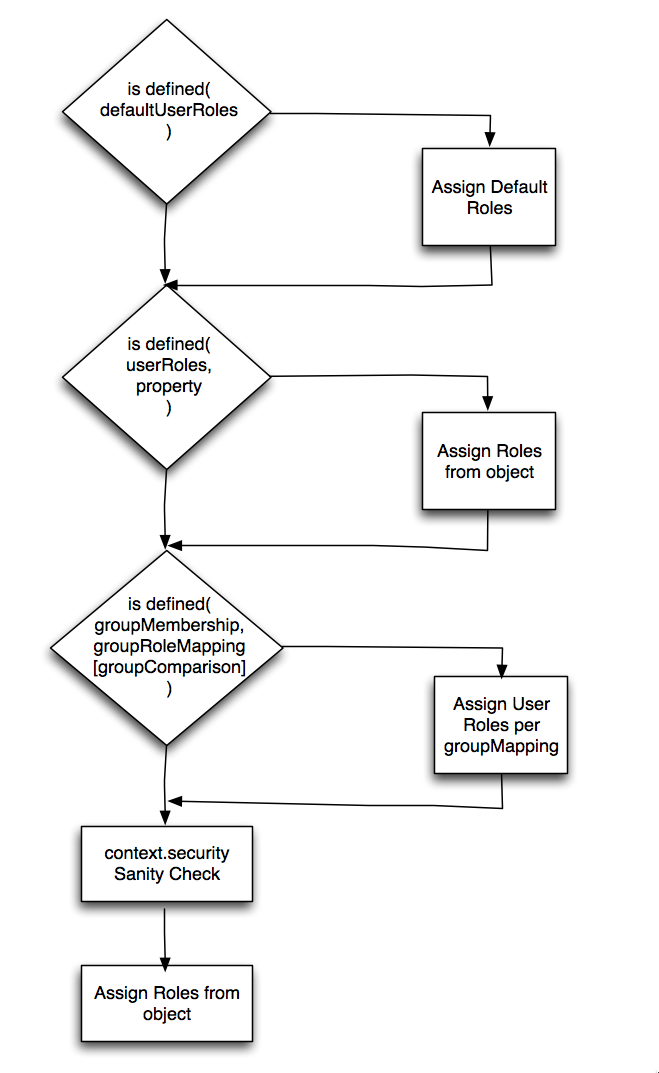

The Admin Role field reflects the roles that are defined in the ui-configuration.json file, as well as any managed roles that have been added. By default, the roles are mapped as follows:

"roles" : { "openidm-admin" : "Administrator", "openidm-authorized" : "User", "openidm-tasks-manager" : "Tasks Manager" },

A user can be assigned more than one role. Only users with the tasks-manager role can assign tasks to any candidate user for that task.

Log into the User View UI at https://localhost:8443/openidmui as an administrative user.

Select the Users tab.

Click the Username of the user that you want to update.

On the user's profile page, modify the fields you want to change and click Update.

The user account is updated in the OpenIDM repository.

Follow steps 1-3 in Procedure 4.2, "To Update a User Account".

On the user's profile page, select Inactive from the Account status list.

Click Update.

The user account is deactivated and the user can no longer log in to the system.

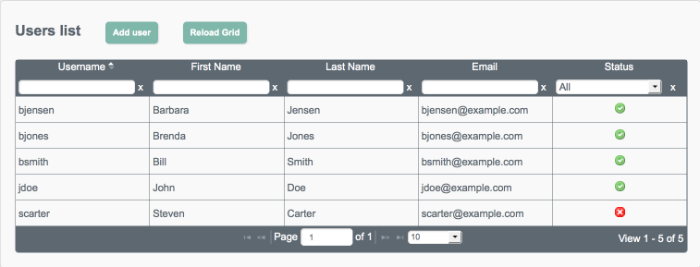

Inactive users are indicated with an X icon in the Status column of the Users page. The following image shows that Steven Carter's account has been deactivated.

Users can change their own passwords by following the Change Security Data link in their profiles. This process requires that users know their existing passwords.

In a situation where a user forgets his password, an administrator can reset the password of that user without knowing the user's existing password.

Follow steps 1-3 in Procedure 4.2, "To Update a User Account".

On the user's profile page, click Change password.

Enter a new password that conforms to the password policy and click Update.

The user password is updated in the repository.

Log into the user interface as an administrative user.

Select the Users tab.

Click the Username of the user that you want to delete.

On the user's profile page, click Delete.

Click OK to confirm the deletion.

The user is deleted from the internal repository.

The User View UI displays the details of the user account in the OpenIDM repository (managed/user). When a mapping has been configured between the repository and one or more external resources, you can view what that user account looks like in any of the systems to which it is linked. Note that this view is read-only - you cannot update a user record in a linked system from within the User View UI.

By default, implicit synchronization is enabled for

mappings from the managed/user

repository to any external resource. This means that

when you update a managed object, any mappings defined in the

sync.json file that have the managed object as the

source are automatically executed to update the target system. You can see

these changes in the Linked Systems section of a user's profile.
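To see which mappings implicit synchronization would apply to, you could list the mappings whose source is managed/user. The Python sketch below assumes that sync.json holds a mappings array whose entries have name, source, and target properties. The sample mapping names and the helper mappings_from are illustrative and not taken from a real deployment.

```python
def mappings_from(sync_config, source):
    """Return the names of all mappings whose source is the given object."""
    return [
        mapping["name"]
        for mapping in sync_config.get("mappings", [])
        if mapping.get("source") == source
    ]


# Hypothetical sync.json content, reduced to the properties used here.
sync_config = {
    "mappings": [
        {
            "name": "systemLdapAccounts_managedUser",
            "source": "system/ldap/account",
            "target": "managed/user",
        },
        {
            "name": "managedUser_systemLdapAccounts",
            "source": "managed/user",
            "target": "system/ldap/account",
        },
    ]
}

# Only mappings that start at managed/user are candidates for implicit sync.
implicit = mappings_from(sync_config, "managed/user")
print(implicit)
```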

To view a user's linked accounts:

Log into the User View UI as an administrative user.

Select the Users tab.

Click the Username of the user whose accounts you want to view.

At the bottom of the user profile, the Linked Systems panel indicates the external resource or resources to which this user entry is mapped.

Select the resource in which you want to view the account, from the Linked Resource list.

The user record in the linked resource is displayed.

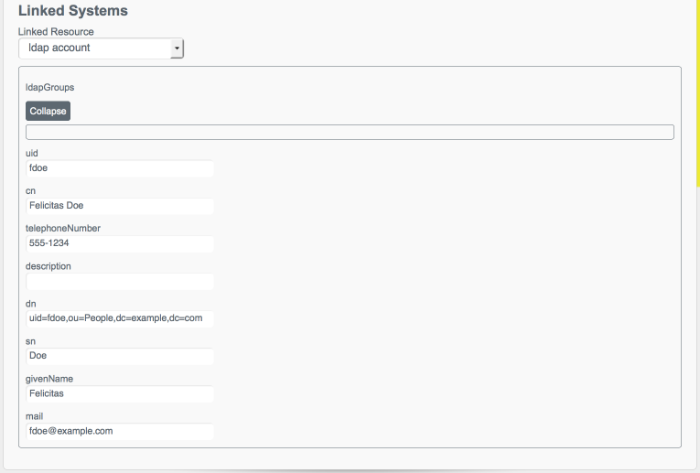

The following image shows the user account for a user

fdoe, as it exists in the LDAP directory to which the managed user repository is mapped.

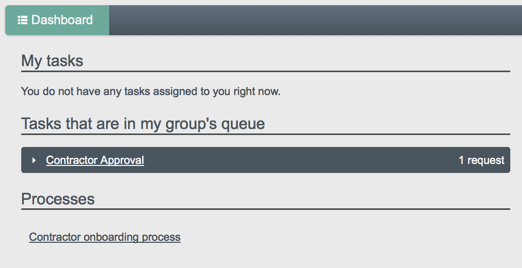

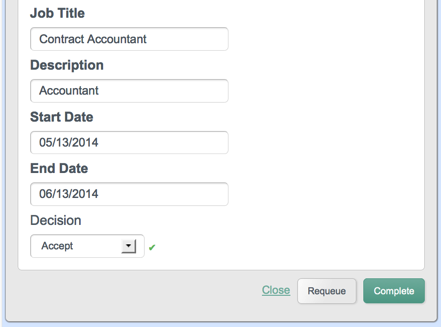

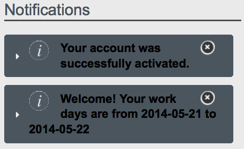

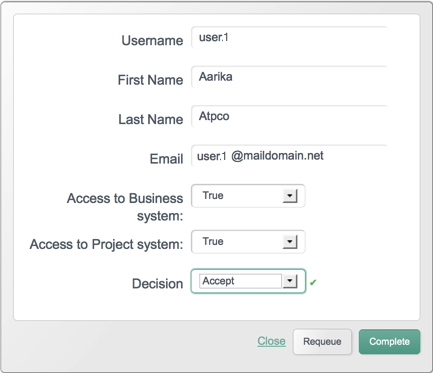

4.5. Managing Workflows From the User View UI

The User View UI is integrated with the embedded Activiti workflow engine,

enabling users to interact with workflows. Available workflows are displayed

under the Processes item on the Dashboard. In order for a workflow to be

displayed here, the workflow definition file must be present in the

openidm/workflow directory.

A sample workflow integration with the User View UI is provided in

openidm/samples/workflow, and documented in

Section 17.5.2, "Sample Workflow - Provisioning User Accounts". Follow the steps in that

sample for an understanding of how the workflow integration works.

Access to workflows is based on OpenIDM roles, and is configured in the file

conf/process-access.json. By default all users with the

role openidm-authorized or

openidm-admin can invoke any available workflow. The

default process-access.json file is as follows:

{

"workflowAccess" : [

{

"propertiesCheck" : {

"property" : "_id",

"matches" : ".*",

"requiresRole" : "openidm-authorized"

}

},

{

"propertiesCheck" : {

"property" : "_id",

"matches" : ".*",

"requiresRole" : "openidm-admin"

}

}

]

}

"property"

Specifies the property used to identify the process definition. By default, process definitions are identified by their _id.

"matches"

A regular expression match is performed on the process definitions, according to the specified property. The default ("matches" : ".*") implies that all process definition IDs match.

"requiresRole"

Specifies the OpenIDM role that is required for users to have access to the matched process definition IDs. In the default file, users with the role openidm-authorized or openidm-admin have access.

To extend the process access definition file, identify the processes to which

users should have access, and specify the qualifying user roles. For example,

if you wanted to restrict access to a process definition whose ID was

567, to users with the role ldap you

would add the following to the process-access.json file:

{

"propertiesCheck" : {

"property" : "_id",

"matches" : "567",

"requiresRole" : "ldap"

}

}
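The way these rules combine can be sketched in a few lines of Python. This is not OpenIDM's implementation. It assumes that a user may invoke a process when at least one rule both matches the process and names a role the user holds, and that the pattern must match the whole property value (re.fullmatch); the guide does not state either detail. The function name can_invoke is hypothetical.

```python
import re


def can_invoke(rules, process, roles):
    """Return True if any rule matches the process and names one of the roles."""
    for rule in rules:
        check = rule["propertiesCheck"]
        if check["property"] not in process:
            continue  # the rule cannot apply to this process definition
        value = str(process[check["property"]])
        # Assumption: the regular expression must match the entire value.
        if re.fullmatch(check["matches"], value) and check["requiresRole"] in roles:
            return True
    return False


# The two rules from the default process-access.json file.
default_rules = [
    {"propertiesCheck": {"property": "_id", "matches": ".*",
                         "requiresRole": "openidm-authorized"}},
    {"propertiesCheck": {"property": "_id", "matches": ".*",
                         "requiresRole": "openidm-admin"}},
]

# The additional rule from the example above.
ldap_rule = {"propertiesCheck": {"property": "_id", "matches": "567",
                                 "requiresRole": "ldap"}}

print(can_invoke(default_rules, {"_id": "123"}, {"openidm-authorized"}))
print(can_invoke([ldap_rule], {"_id": "567"}, {"ldap"}))
print(can_invoke([ldap_rule], {"_id": "568"}, {"ldap"}))
```

Under this any-rule-grants assumption, leaving the default ".*" rules in place alongside the new rule would still let users with the openidm-authorized role match process 567, so verify the behavior in your own deployment.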

4.6. Changing the UI Theme

You can customize the theme of the user interface with your own branding. One

way to adjust the UI theme is to edit the properties in the UI theme

configuration file

(/path/to/openidm/conf/ui-themeconfig.json). This file

stores detailed color values, background image paths, and a number of other

common styling options. Because the UI theme configuration file is part of

the configuration store, it is shared by all nodes in a cluster. Changes made

to this file do not have to be replicated manually across nodes.

To change theme elements that are not included in the UI theme configuration

file, you can create a custom theme in the

openidm/ui/extension directory. By default the user

interface reads the stylesheets and images from the

openidm/ui/default directory. Do not modify the files in

this default directory as there is no guarantee that your changes will not be

overwritten in the next OpenIDM release. Modifications made in the

openidm/ui/extension directory can be maintained across

product upgrades. The UI searches the extension directory

first and applies any styles or images located in this directory. Note that

files added to the extension directory must be manually

copied between every node in a cluster.

If you want to update the view logic of the UI, you cannot simply add files to the extension directory. A deployment that requires this level of control over the user interface can no longer be upgraded automatically with subsequent releases, so you must maintain your own fork of the UI.

4.6.1. Changing the Default Stylesheet

Most changes to the UI stylesheets can be made in the UI theme configuration

file (conf/ui-themeconfig.json).

For example, the following setting in that file changes the background color of the UI to dark grey:

$ grep "background-color" /path/to/openidm/conf/ui-themeconfig.json
"background-color" : "#ababab",

Refresh your browser window for the change to appear.

The default stylesheets are located in the

openidm/ui/default/enduser/public/css directory. To

customize the stylesheets beyond the properties available in the UI theme

configuration file, copy the default stylesheets to

openidm/ui/extension/css, and edit them according to

your requirements.

4.6.2. Changing the Default Logo

The default logo is located in the

openidm/ui/default/enduser/public/images directory.

Any file named logo.png, added to the directory

openidm/ui/extension/images, will replace the default

logo when the browser is refreshed.

To specify a different file name, or to control the size, and other

properties of the image file that is used for the logo, adjust the

logo property in the UI theme configuration file

(conf/ui-themeconfig.json).

The following change to the UI theme configuration file points to an image

file named example-logo.png, in the

openidm/ui/extension/images directory.

...

"logo" : {

"src" : "images/example-logo.png",

"title" : "Example.com",

"alt" : "Example.com",

"height" : "80",

"width" : "120"

},

...

Refresh your browser window for the new logo to appear.

4.6.3. Changing the Language of the UI

Currently, the UI is provided only in US English. You can translate the UI and specify that your own locale is used. The following example shows how to translate the UI into French.

Copy the default locale to a new folder in the same location (openidm/ui/default/enduser/public/locales):

$ cd /path/to/openidm/ui/default/enduser/public/locales
$ cp -R en/ fr/

The new locale (fr) now contains the default translation.json file.

$ ls fr/
translation.json

Translate the values of the properties in the fr/translation.json file. Do not translate the property names. For example:

...
"UserMessages" : {
    "changedPassword" : "Mot de passe a été modifié",
    "profileUpdateFailed" : "Problème lors de la mise à jour du profil",
    "profileUpdateSuccessful" : "Profil a été mis à jour",
    "userNameUpdated" : "Nom d'utilisateur a été modifié",
...

Change the UI configuration to use the new locale by setting the value of the lang property in the /path/to/openidm/conf/ui-configuration.json file, as follows:

"lang" : "fr",

Refresh your browser window for the modification to be applied.
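Because only the values may be translated, it can help to confirm that a translated file still has exactly the same property names as the English original. The following Python sketch compares two already-loaded translation objects. The helper names key_paths and compare_locales are hypothetical, the English strings are placeholders rather than the shipped text, and the second French property name is deliberately wrong to show what the check reports.

```python
def key_paths(obj, prefix=""):
    """Collect the dotted paths of all leaf properties in a nested dict."""
    paths = set()
    for name, value in obj.items():
        path = f"{prefix}.{name}" if prefix else name
        if isinstance(value, dict):
            paths |= key_paths(value, path)
        else:
            paths.add(path)
    return paths


def compare_locales(base, translated):
    """Return (missing, unexpected) property paths of translated versus base."""
    base_keys = key_paths(base)
    new_keys = key_paths(translated)
    return sorted(base_keys - new_keys), sorted(new_keys - base_keys)


# Placeholder content; in practice, load en/translation.json and
# fr/translation.json with json.load().
en = {"UserMessages": {"changedPassword": "placeholder",
                       "profileUpdateFailed": "placeholder"}}
fr = {"UserMessages": {"changedPassword": "Mot de passe a été modifié",
                       "echecMiseAJour": "Problème lors de la mise à jour du profil"}}

missing, unexpected = compare_locales(en, fr)
print("missing:", missing)
print("unexpected:", unexpected)
```

Two empty lists indicate that every property name survived the translation unchanged.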

4.6.4. Creating a Project-Specific UI Theme

You can create specific UI themes for different projects and then point a particular UI instance to use a defined theme on startup. To create a complete custom theme, follow these steps:

Shut down the OpenIDM instance, if it is running. In the Felix administration console, type:

-> shutdown

Clear the felix-cache directory.

$ rm -rf felix-cache

Copy the entire default UI theme to an accessible location. For example:

$ cd /path/to/openidm/ui
$ cp -r default ../new-project-theme

In the copied theme, modify the required elements, as described in the previous sections. Note that nothing is copied to the extension folder in this case - changes are made in the copied theme.

In the openidm/conf/boot/boot.properties file, add the following line, specifying the location of the new theme. The path is relative to the installation root of the OpenIDM instance.

openidm.ui.fileinstall.dir=new-project-theme

Restart OpenIDM.

$ cd /path/to/openidm
$ ./startup.sh

Relaunch the UI in your browser. The UI is displayed with the new custom theme.

4.7. Using an External System for Password Reset

By default, the password reset mechanism is handled internally, in OpenIDM. You can reroute password reset in the event that a user has forgotten his password, by specifying an external URL to which password reset requests are sent. Note that this URL applies to the password reset link on the login page only, not to the security data change facility that is available after a user has logged in.

To set an external URL to handle password reset, set the

passwordResetLink parameter in the UI configuration file

(conf/ui-configuration.json). The following example

sets the passwordResetLink to

https://accounts.example.com/account/reset-password.

"passwordResetLink" : "https://accounts.example.com/account/reset-password"

The passwordResetLink parameter takes either an empty

string as a value (which indicates that no external link is used) or a full

URL to the external system that handles password reset requests.
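A small pre-deployment check can catch values that are neither empty nor a full URL. The Python sketch below is an assumption about what "full URL" means, namely an http or https scheme plus a host. The function name is_valid_reset_link is hypothetical, and OpenIDM itself may accept or reject other forms.

```python
from urllib.parse import urlparse


def is_valid_reset_link(value):
    """Accept an empty string (no external link) or an absolute http(s) URL."""
    if not isinstance(value, str):
        return False
    if value == "":
        return True
    parsed = urlparse(value)
    # Assumption: a "full URL" has an http or https scheme and a host part.
    return parsed.scheme in ("http", "https") and bool(parsed.netloc)


print(is_valid_reset_link(""))
print(is_valid_reset_link("https://accounts.example.com/account/reset-password"))
print(is_valid_reset_link("/account/reset-password"))
```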

Note

External password reset and security questions for internal password reset

are mutually exclusive. Therefore, if you set a value for the