Guide to configuring and integrating OpenIDM into identity management solutions. OpenIDM identity management software offers flexible, open source services for automating management of the identity life cycle.

Preface

In this guide you will learn how to integrate OpenIDM as part of a complete identity management solution.

1. Who Should Use This Guide

This guide is written for systems integrators building identity management solutions based on OpenIDM services. This guide describes OpenIDM, and shows you how to set up OpenIDM as part of your identity management solution.

You do not need to be an OpenIDM wizard to learn something from this guide, though a background in identity management and building identity management solutions can help.

2. Formatting Conventions

Most examples in the documentation are created in GNU/Linux or Mac OS X operating environments. If distinctions are necessary between operating environments, examples are labeled with the operating environment name in parentheses. To avoid repetition, file system directory names are often given only in UNIX format, as in /path/to/server, even if the text applies to C:\path\to\server as well.

Absolute path names usually begin with the placeholder /path/to/. This path might translate to /opt/, C:\Program Files\, or somewhere else on your system.

Command-line terminal sessions are formatted as follows:

$ echo $JAVA_HOME
/path/to/jdk

Command output is sometimes formatted for narrower, more readable output even though formatting parameters are not shown in the command.

Program listings are formatted as follows:

class Test {
    public static void main(String[] args) {
        System.out.println("This is a program listing.");
    }
}

3. Accessing Documentation Online

ForgeRock publishes comprehensive documentation online:

The ForgeRock Knowledge Base offers a large and increasing number of up-to-date, practical articles that help you deploy and manage ForgeRock software.

While many articles are visible to community members, ForgeRock customers have access to much more, including advanced information for customers using ForgeRock software in a mission-critical capacity.

ForgeRock product documentation, such as this document, aims to be technically accurate and complete with respect to the software documented. It is visible to everyone and covers all product features and examples of how to use them.

4. Using the ForgeRock.org Site

The ForgeRock.org site has links to source code for ForgeRock open source software, as well as links to the ForgeRock forums and technical blogs.

If you are a ForgeRock customer, raise a support ticket instead of using the forums. ForgeRock support professionals will get in touch to help you.

Chapter 1. Architectural Overview

This chapter introduces the OpenIDM architecture, and describes the modules and services that make up the OpenIDM product.

In this chapter you will learn:

- How OpenIDM uses the OSGi framework as a basis for its modular architecture

- How the infrastructure modules provide the features required for OpenIDM's core services

- What those core services are and how they fit into the overall architecture

- How OpenIDM provides access to the resources it manages

1.1. OpenIDM Modular Framework

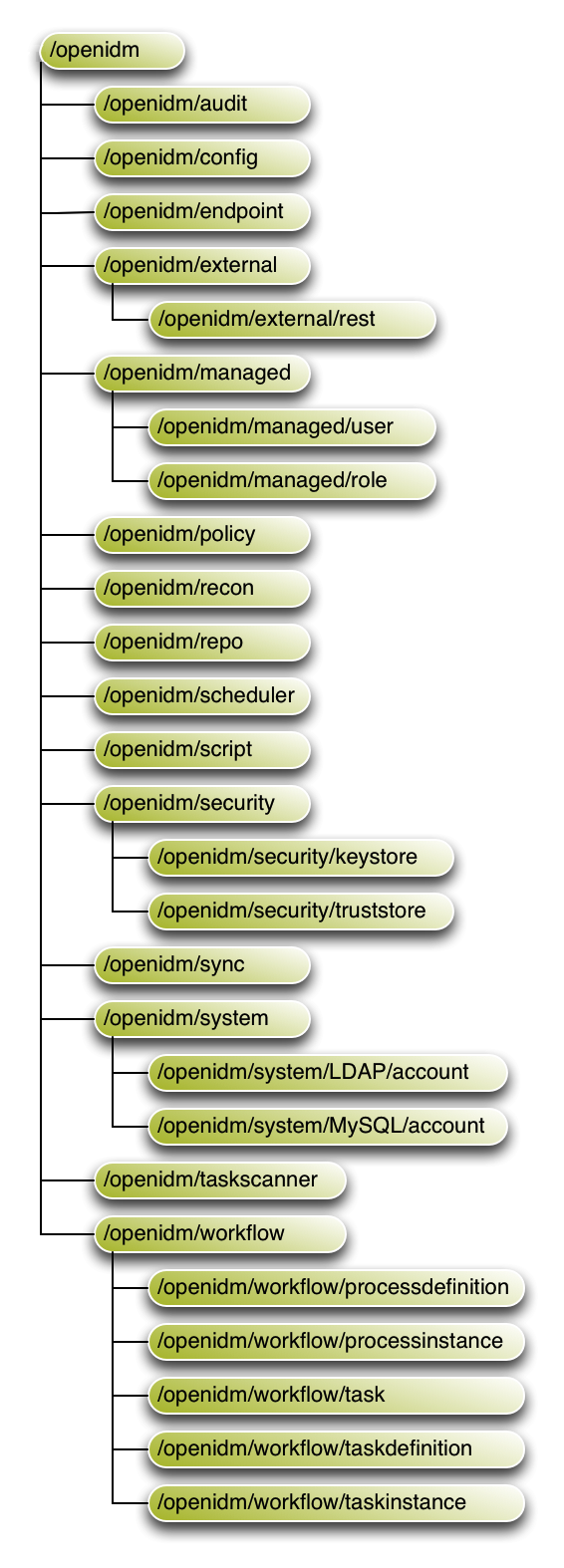

OpenIDM implements infrastructure modules that run in an OSGi framework. It exposes core services through RESTful APIs to client applications.

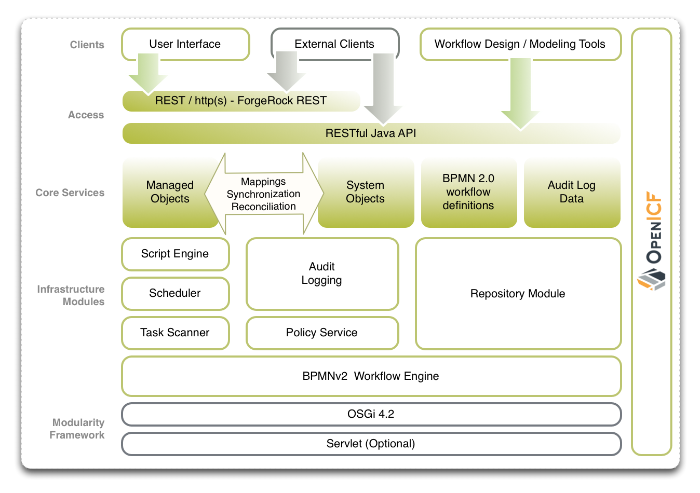

The following figure provides an overview of the OpenIDM architecture, which is covered in more detail in subsequent sections of this chapter.

The OpenIDM framework is based on OSGi:

- OSGi

OSGi is a module system and service platform for the Java programming language that implements a complete and dynamic component model. For a good introduction to OSGi, see the OSGi site. OpenIDM currently runs in Apache Felix, an implementation of the OSGi Framework and Service Platform.

- Servlet

The Servlet layer provides RESTful HTTP access to the managed objects and services. OpenIDM embeds the Jetty Servlet Container, which can be configured for either HTTP or HTTPS access.

1.2. Infrastructure Modules

OpenIDM infrastructure modules provide the underlying features needed for core services:

- BPMN 2.0 Workflow Engine

OpenIDM provides an embedded workflow and business process engine based on Activiti and the Business Process Model and Notation (BPMN) 2.0 standard.

For more information, see "Integrating Business Processes and Workflows".

- Task Scanner

OpenIDM provides a task-scanning mechanism that performs a batch scan for a specified property in OpenIDM data, on a scheduled interval. The task scanner then executes a task when the value of that property matches a specified value.

For more information, see "Scanning Data to Trigger Tasks".

- Scheduler

The scheduler provides a cron-like scheduling component implemented using the Quartz library. Use the scheduler, for example, to enable regular synchronizations and reconciliations.

For more information, see "Scheduling Tasks and Events".

- Script Engine

The script engine is a pluggable module that provides the triggers and plugin points for OpenIDM. OpenIDM currently supports JavaScript and Groovy.

- Policy Service

OpenIDM provides an extensible policy service that applies validation requirements to objects and properties, when they are created or updated.

For more information, see "Using Policies to Validate Data".

- Audit Logging

Auditing logs all relevant system activity to the configured log stores. This includes the data from reconciliation as a basis for reporting, as well as detailed activity logs to capture operations on the internal (managed) and external (system) objects.

For more information, see "Using Audit Logs".

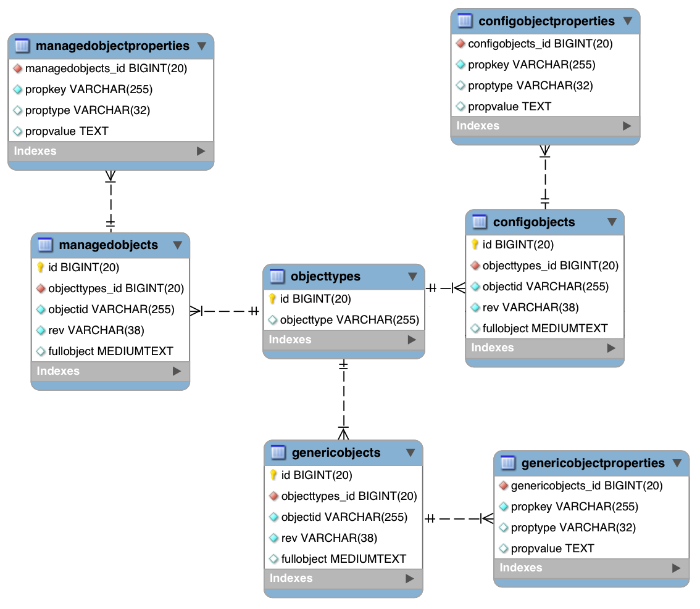

- Repository

The repository provides a common abstraction for a pluggable persistence layer. OpenIDM 4 supports reconciliation and synchronization with several major external repositories in production, including relational databases, LDAP servers, and even flat CSV and XML files.

The repository API uses a JSON-based object model with RESTful principles consistent with the other OpenIDM services. To facilitate testing, OpenIDM includes an embedded instance of OrientDB, a NoSQL database. You can then incorporate a supported internal repository, as described in "Installing a Repository For Production" in the Installation Guide.

1.3. Core Services

The core services are the heart of the OpenIDM resource-oriented unified object model and architecture:

- Object Model

Artifacts handled by OpenIDM are Java object representations of the JavaScript object model as defined by JSON. The object model supports interoperability and potential integration with many applications, services, and programming languages.

OpenIDM can serialize and deserialize these structures to and from JSON as required. OpenIDM also exposes a set of triggers and functions that system administrators can define, in either JavaScript or Groovy, which can natively read and modify these JSON-based object model structures.

- Managed Objects

A managed object is an object that represents the identity-related data managed by OpenIDM. Managed objects are configurable, JSON-based data structures that OpenIDM stores in its pluggable repository. The default configuration of a managed object is that of a user, but you can define any kind of managed object, for example, groups or roles.

You can access managed objects over the REST interface with a query similar to the following:

$ curl \
 --cacert self-signed.crt \
 --header "X-OpenIDM-Username: openidm-admin" \
 --header "X-OpenIDM-Password: openidm-admin" \
 --request GET \
 "https://localhost:8443/openidm/managed/..."

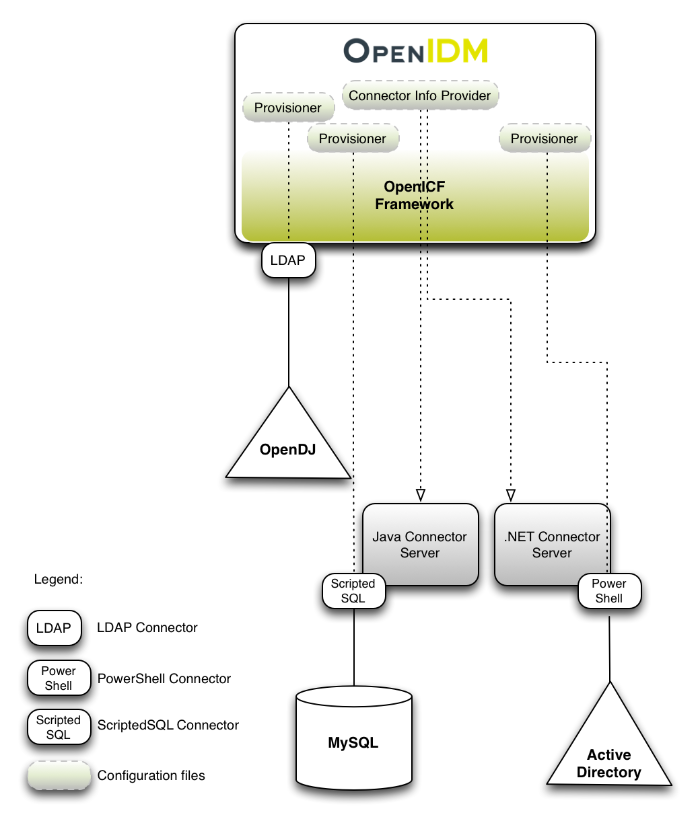

- System Objects

System objects are pluggable representations of objects on external systems. For example, a user entry that is stored in an external LDAP directory is represented as a system object in OpenIDM.

System objects follow the same RESTful resource-based design principles as managed objects. They can be accessed over the REST interface with a query similar to the following:

$ curl \
 --cacert self-signed.crt \
 --header "X-OpenIDM-Username: openidm-admin" \
 --header "X-OpenIDM-Password: openidm-admin" \
 --request GET \
 "https://localhost:8443/openidm/system/..."

There is a default implementation for the OpenICF framework that allows any connector object to be represented as a system object.

- Mappings

Mappings define policies between source and target objects and their attributes during synchronization and reconciliation. Mappings can also define triggers for validation, customization, filtering, and transformation of source and target objects.

For more information, see "Synchronizing Data Between Resources".

- Synchronization and Reconciliation

Reconciliation enables on-demand and scheduled resource comparisons between the OpenIDM managed object repository and source or target systems. Comparisons can result in different actions, depending on the mappings defined between the systems.

Synchronization enables creating, updating, and deleting resources from a source to a target system, either on demand or according to a schedule.

For more information, see "Synchronizing Data Between Resources".
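The managed and system object queries shown above differ only in the resource path. As a minimal sketch, the request can be described as a plain object before it is handed to an HTTP client. The function name buildRequest and the resource paths managed/user and system/ldap/account are hypothetical illustrations, not names taken from this guide.

```javascript
// Sketch only: describes a REST request to OpenIDM as a plain object.
// buildRequest and the example resource paths below are hypothetical.
function buildRequest(resourcePath, username, password) {
  return {
    method: "GET",
    url: "https://localhost:8443/openidm/" + resourcePath,
    headers: {
      // Header names are the ones used by the curl examples in this guide.
      "X-OpenIDM-Username": username,
      "X-OpenIDM-Password": password
    }
  };
}

// Managed and system objects share the same request shape.
const managedRequest = buildRequest("managed/user", "openidm-admin", "openidm-admin");
const systemRequest = buildRequest("system/ldap/account", "openidm-admin", "openidm-admin");
console.log(managedRequest.url);
console.log(systemRequest.url);
```

Keeping the request description separate from the HTTP client makes it easy to reuse the same headers for both kinds of object.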

1.4. Secure Commons REST Commands

Representational State Transfer (REST) is a software architecture style for exposing resources, using the technologies and protocols of the World Wide Web. For more information on the ForgeRock REST API, see "REST API Reference".

REST interfaces are commonly tested with a curl command. Many of these commands are used in this document. They work with the standard ports associated with Java EE communications, 8080 and 8443.

To run curl over the secure port, 8443, you must either include the --insecure option or follow the instructions shown in "Restrict REST Access to the HTTPS Port". You can use those instructions with the self-signed certificate generated when OpenIDM starts, or with a *.crt file provided by a certificate authority.

In many examples in this guide, curl commands to the secure port are shown with a --cacert self-signed.crt option. Instructions for creating that self-signed.crt file are shown in "Restrict REST Access to the HTTPS Port".

1.5. Access Layer

The access layer provides the user interfaces and public APIs for accessing and managing the OpenIDM repository and its functions:

- RESTful Interfaces

OpenIDM provides REST APIs for CRUD operations, for invoking synchronization and reconciliation, and to access several other services.

For more information, see "REST API Reference".

- User Interfaces

User interfaces provide password management, registration, self-service, and workflow services.

Chapter 2. Starting and Stopping OpenIDM

This chapter covers the scripts provided for starting and stopping OpenIDM, and describes how to verify the health of a system, that is, that all requirements are met for a successful system startup.

2.1. To Start and Stop OpenIDM

By default you start and stop OpenIDM in interactive mode.

To start OpenIDM interactively, open a terminal or command window, change to the openidm directory, and run the startup script:

startup.sh (UNIX)

startup.bat (Windows)

The startup script starts OpenIDM, and opens an OSGi console with a -> prompt where you can issue console commands.

To stop OpenIDM interactively in the OSGi console, run the shutdown command:

-> shutdown

You can also start OpenIDM as a background process on UNIX and Linux. Follow these steps before starting OpenIDM for the first time.

If you have already started OpenIDM, shut down OpenIDM and remove the Felix cache files under openidm/felix-cache/:

-> shutdown
...
$ rm -rf felix-cache/*

Start OpenIDM in the background. The nohup command keeps OpenIDM running after you log out. The redirection > logs/console.out 2>&1 sends standard output and standard error to the noted console.out file, and the trailing & runs the process in the background:

$ nohup ./startup.sh > logs/console.out 2>&1&
[1] 2343

To stop OpenIDM running as a background process, use the shutdown.sh script:

$ ./shutdown.sh
./shutdown.sh
Stopping OpenIDM (2343)

Incidentally, the process identifier (PID) shown during startup should match the PID shown during shutdown.

Note

Although installations on OS X systems are not supported in production, you might want to run OpenIDM on OS X in a demo or test environment. To run OpenIDM in the background on an OS X system, take the following additional steps:

- Remove the org.apache.felix.shell.tui-*.jar bundle from the openidm/bundle directory.

- Disable ConsoleHandler logging, as described in "Disabling Logs".

2.2. Specifying the OpenIDM Startup Configuration

By default, OpenIDM starts with the configuration, script, and binary files in the openidm/conf, openidm/script, and openidm/bin directories. You can launch OpenIDM with a different set of configuration, script, and binary files for test purposes, to manage different OpenIDM projects, or to run one of the included samples.

The startup.sh script enables you to specify the following elements of a running OpenIDM instance:

- --project-location or -p /path/to/project/directory

The project location specifies the directory with OpenIDM configuration and script files.

All configuration objects and any artifacts that are not in the bundled defaults (such as custom scripts) must be included in the project location. These objects include all files otherwise included in the openidm/conf and openidm/script directories.

For example, the following command starts OpenIDM with the configuration of Sample 1, with a project location of /path/to/openidm/samples/sample1:

$ ./startup.sh -p /path/to/openidm/samples/sample1

If you do not provide an absolute path, the project location path is relative to the system property, user.dir. OpenIDM then sets launcher.project.location to that relative directory path. Alternatively, if you start OpenIDM without the -p option, OpenIDM sets launcher.project.location to /path/to/openidm/conf.

Note

When we refer to "your project" in ForgeRock's OpenIDM documentation, we're referring to the value of launcher.project.location.

- --working-location or -w /path/to/working/directory

The working location specifies the directory to which OpenIDM writes its database cache, audit logs, and Felix cache. The working location includes everything that is in the default db/, audit/, and felix-cache/ subdirectories.

The following command specifies that OpenIDM writes its database cache and audit data to /Users/admin/openidm/storage:

$ ./startup.sh -w /Users/admin/openidm/storage

If you do not provide an absolute path, the path is relative to the system property, user.dir. If you do not specify a working location, OpenIDM writes this data to the openidm/db, openidm/felix-cache, and openidm/audit directories.

Note that this property does not affect the location of the OpenIDM system logs. To change the location of the OpenIDM logs, edit the conf/logging.properties file.

You can also change the location of the Felix cache, by editing the conf/config.properties file, or by starting OpenIDM with the -s option, described later in this section.

- --config or -c /path/to/config/file

A customizable startup configuration file (named launcher.json) enables you to specify how the OSGi Framework is started.

Unless you are working with a highly customized deployment, you should not modify the default framework configuration. This option is therefore described in more detail in "Advanced Configuration".

- --storage or -s /path/to/storage/directory

Specifies the OSGi storage location of the cached configuration files.

You can use this option to redirect output if you are installing OpenIDM on a read-only filesystem volume. For more information, see "Installing OpenIDM on a Read-Only Volume" in the Installation Guide. This option is also useful when you are testing different configurations. Sometimes when you start OpenIDM with two different sample configurations, one after the other, the cached configurations are merged and cause problems. Specifying a storage location creates a separate felix-cache directory in that location, and the cached configuration files remain completely separate.
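The rule that a relative project or working location is taken relative to user.dir can be illustrated with a small sketch. This is not OpenIDM's launcher code: the function name resolveLocation is hypothetical, and the sketch handles UNIX-style paths only.

```javascript
// Sketch only: illustrates "relative to user.dir" for UNIX-style paths.
// resolveLocation is a hypothetical helper, not part of OpenIDM.
function resolveLocation(userDir, location) {
  // An absolute path is used as given.
  if (location.startsWith("/")) {
    return location;
  }
  // A relative path is appended to user.dir (trailing slashes trimmed).
  return userDir.replace(/\/+$/, "") + "/" + location;
}

console.log(resolveLocation("/path/to/openidm", "samples/sample1"));
console.log(resolveLocation("/path/to/openidm", "/Users/admin/openidm/storage"));
```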

By default, properties files are loaded in the following order, and property values are resolved in the reverse order:

- system.properties

- config.properties

- boot.properties

If both system and boot properties define the same attribute, the property substitution process locates the attribute in boot.properties and does not attempt to locate the property in system.properties.
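The resolution order described above can be sketched as a lookup that walks the load order backwards and stops at the first file that defines the attribute. This is an illustration of the rule only. The function name resolveProperty, the in-memory representation of the files, and the attribute name example.attribute are assumptions.

```javascript
// Files in load order, as listed above. Lookups walk this list in reverse.
const LOAD_ORDER = ["system.properties", "config.properties", "boot.properties"];

// Sketch only: files is a hypothetical map of file name to key/value pairs.
function resolveProperty(name, files) {
  for (const file of LOAD_ORDER.slice().reverse()) {
    const props = files[file] || {};
    if (Object.prototype.hasOwnProperty.call(props, name)) {
      // The first match wins; earlier files are not consulted.
      return { value: props[name], source: file };
    }
  }
  return undefined;
}

const files = {
  "system.properties": { "example.attribute": "from-system" },
  "boot.properties": { "example.attribute": "from-boot" }
};
console.log(resolveProperty("example.attribute", files));
```

With both files defining example.attribute, the sketch reports boot.properties as the source, which matches the behavior the text describes.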

You can use variable substitution in any .json configuration file with the install, working and project locations described previously. You can substitute the following properties:

- install.location

- install.url

- working.location

- working.url

- project.location

- project.url

Property substitution takes the following syntax:

&{launcher.property}

For example, to specify the location of the OrientDB database, you can set the dbUrl property in repo.orientdb.json as follows:

"dbUrl" : "local:&{launcher.working.location}/db/openidm",

The database location is then relative to a working location defined in the startup configuration.

You can find more examples of property substitution in many other files in your project's conf/ subdirectory.
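To make the &{...} syntax concrete, the following sketch replaces each token in a string with a value from a property map. It is not OpenIDM's implementation. The function name substitute is hypothetical, and leaving unknown tokens untouched is an assumption made for the example.

```javascript
// Sketch only: replaces &{name} tokens using a map of property values.
// Unknown tokens are left as-is (an assumption, not documented behavior).
function substitute(text, properties) {
  return text.replace(/&\{([^}]+)\}/g, (token, name) =>
    Object.prototype.hasOwnProperty.call(properties, name) ? properties[name] : token
  );
}

const dbUrl = substitute("local:&{launcher.working.location}/db/openidm", {
  "launcher.working.location": "/Users/admin/openidm/storage"
});
console.log(dbUrl); // local:/Users/admin/openidm/storage/db/openidm
```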

Note that property substitution does not work for connector reference properties. So, for example, the following configuration would not be valid:

"connectorRef" : {

"connectorName" : "&{connectorName}",

"bundleName" : "org.forgerock.openicf.connectors.ldap-connector",

"bundleVersion" : "&{LDAP.BundleVersion}"

...

The "connectorName" must be the precise string from the connector configuration. If you need to specify multiple connector version numbers, use a range of versions, for example:

"connectorRef" : {

"connectorName" : "org.identityconnectors.ldap.LdapConnector",

"bundleName" : "org.forgerock.openicf.connectors.ldap-connector",

"bundleVersion" : "[1.4.0.0,2.0.0.0)",

...
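The range "[1.4.0.0,2.0.0.0)" uses interval notation: a square bracket includes the bound and a parenthesis excludes it. The sketch below checks a version against such a range. It is a simplified illustration that assumes purely numeric, dot-separated segments, and the function names compareVersions and inVersionRange are hypothetical.

```javascript
// Compare two dotted numeric versions segment by segment: returns -1, 0, or 1.
function compareVersions(a, b) {
  const pa = a.split(".").map(Number);
  const pb = b.split(".").map(Number);
  const length = Math.max(pa.length, pb.length);
  for (let i = 0; i < length; i++) {
    const x = pa[i] || 0;
    const y = pb[i] || 0;
    if (x !== y) {
      return x < y ? -1 : 1;
    }
  }
  return 0;
}

// Sketch only: tests a version against an interval such as "[1.4.0.0,2.0.0.0)".
function inVersionRange(version, range) {
  const match = /^([\[(])\s*([\d.]+)\s*,\s*([\d.]+)\s*([\])])$/.exec(range.trim());
  if (!match) {
    throw new Error("Unsupported range syntax: " + range);
  }
  const [, open, low, high, close] = match;
  const lowCmp = compareVersions(version, low);
  const highCmp = compareVersions(version, high);
  const aboveLow = open === "[" ? lowCmp >= 0 : lowCmp > 0;
  const belowHigh = close === "]" ? highCmp <= 0 : highCmp < 0;
  return aboveLow && belowHigh;
}

console.log(inVersionRange("1.4.0.0", "[1.4.0.0,2.0.0.0)")); // true
console.log(inVersionRange("2.0.0.0", "[1.4.0.0,2.0.0.0)")); // false
```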

2.3. Monitoring the Basic Health of an OpenIDM System

Due to the highly modular, configurable nature of OpenIDM, it is often difficult to assess whether a system has started up successfully, or whether the system is ready and stable after dynamic configuration changes have been made.

OpenIDM includes a health check service, with options to monitor the status of internal resources.

To monitor the status of external resources such as LDAP servers and external databases, use the commands described in "Checking the Status of External Systems Over REST".

2.3.1. Basic Health Checks

The health check service reports on the state of the OpenIDM system and outputs this state to the OSGi console and to the log files. The system can be in one of the following states:

- STARTING - OpenIDM is starting up

- ACTIVE_READY - all of the specified requirements have been met to consider the OpenIDM system ready

- ACTIVE_NOT_READY - one or more of the specified requirements have not been met and the OpenIDM system is not considered ready

- STOPPING - OpenIDM is shutting down

You can verify the current state of an OpenIDM system with the following REST call:

$ curl \

--cacert self-signed.crt \

--header "X-OpenIDM-Username: openidm-admin" \

--header "X-OpenIDM-Password: openidm-admin" \

--request GET \

"https://localhost:8443/openidm/info/ping"

{

"_id" : "",

"state" : "ACTIVE_READY",

"shortDesc" : "OpenIDM ready"

}

The information is provided by the following script: openidm/bin/defaults/script/info/ping.js.
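A monitoring script might reduce the ping response to a single ready or not-ready answer. The following sketch assumes the response body has the shape shown in the example output above. The function name isReady is hypothetical.

```javascript
// The four states listed in this section.
const STATES = ["STARTING", "ACTIVE_READY", "ACTIVE_NOT_READY", "STOPPING"];

// Sketch only: interprets the JSON body returned by openidm/info/ping.
function isReady(pingBody) {
  const ping = JSON.parse(pingBody);
  if (!STATES.includes(ping.state)) {
    throw new Error("Unexpected state: " + ping.state);
  }
  return ping.state === "ACTIVE_READY";
}

const sampleBody = '{ "_id" : "", "state" : "ACTIVE_READY", "shortDesc" : "OpenIDM ready" }';
console.log(isReady(sampleBody)); // true
```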

2.3.2. Getting Current OpenIDM Session Information

You can get more information about the current OpenIDM session, beyond basic health checks, with the following REST call:

$ curl \

--cacert self-signed.crt \

--header "X-OpenIDM-Username: openidm-admin" \

--header "X-OpenIDM-Password: openidm-admin" \

--request GET \

"https://localhost:8443/openidm/info/login"

{

"_id" : "",

"class" : "org.forgerock.services.context.SecurityContext",

"name" : "security",

"authenticationId" : "openidm-admin",

"authorization" : {

"id" : "openidm-admin",

"component" : "repo/internal/user",

"roles" : [ "openidm-admin", "openidm-authorized" ],

"ipAddress" : "127.0.0.1"

},

"parent" : {

"class" : "org.forgerock.caf.authentication.framework.MessageContextImpl",

"name" : "jaspi",

"parent" : {

"class" : "org.forgerock.services.context.TransactionIdContext",

"id" : "2b4ab479-3918-4138-b018-1a8fa01bc67c-288",

"name" : "transactionId",

"transactionId" : {

"value" : "2b4ab479-3918-4138-b018-1a8fa01bc67c-288",

"subTransactionIdCounter" : 0

},

"parent" : {

"class" : "org.forgerock.services.context.ClientContext",

"name" : "client",

"remoteUser" : null,

"remoteAddress" : "127.0.0.1",

"remoteHost" : "127.0.0.1",

"remotePort" : 56534,

"certificates" : "",

...

The information is provided by the following script: openidm/bin/defaults/script/info/login.js.

2.3.3. Monitoring OpenIDM Tuning and Health Parameters

You can extend OpenIDM monitoring beyond what you can check on the openidm/info/ping and openidm/info/login endpoints. Specifically, you can get more detailed information about the state of the:

- Operating System on the openidm/health/os endpoint

- Memory on the openidm/health/memory endpoint

- JDBC Pooling, based on the openidm/health/jdbc endpoint

- Reconciliation, on the openidm/health/recon endpoint.

You can regulate access to these endpoints as described in the following section: "access.js".

2.3.3.1. Operating System Health Check

With the following REST call, you can get basic information about the host operating system:

$ curl \

--cacert self-signed.crt \

--header "X-OpenIDM-Username: openidm-admin" \

--header "X-OpenIDM-Password: openidm-admin" \

--request GET \

"https://localhost:8443/openidm/health/os"

{

"_id" : "",

"_rev" : "",

"availableProcessors" : 1,

"systemLoadAverage" : 0.06,

"operatingSystemArchitecture" : "amd64",

"operatingSystemName" : "Linux",

"operatingSystemVersion" : "2.6.32-504.30.3.el6.x86_64"

}

From the output, you can see that this particular system has one available processor with a 64-bit (amd64) architecture and a system load average of 0.06 (roughly 6 percent of one processor), and that it runs Linux with the kernel version shown in operatingSystemVersion.
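Because a load average is easier to judge relative to the number of processors, a monitoring script might normalize it. The sketch below works on the response shape shown above. The function name loadPerProcessor is hypothetical, and treating a negative load average as "not available" is an assumption based on how the JVM commonly reports this value.

```javascript
// Sketch only: normalizes the load average by the processor count.
// Returns null when the load average is unavailable (assumed to be negative).
function loadPerProcessor(os) {
  if (os.systemLoadAverage < 0 || os.availableProcessors < 1) {
    return null;
  }
  return os.systemLoadAverage / os.availableProcessors;
}

const osHealth = { availableProcessors: 1, systemLoadAverage: 0.06 };
console.log(loadPerProcessor(osHealth)); // 0.06
```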

2.3.3.2. Memory Health Check

With the following REST call, you can get basic information about overall JVM memory use:

$ curl \

--cacert self-signed.crt \

--header "X-OpenIDM-Username: openidm-admin" \

--header "X-OpenIDM-Password: openidm-admin" \

--request GET \

"https://localhost:8443/openidm/health/memory"

{

"_id" : "",

"_rev" : "",

"objectPendingFinalization" : 0,

"heapMemoryUsage" : {

"init" : 1073741824,

"used" : 88538392,

"committed" : 1037959168,

"max" : 1037959168

},

"nonHeapMemoryUsage" : {

"init" : 24313856,

"used" : 69255024,

"committed" : 69664768,

"max" : 224395264

}

}

The output includes information on JVM Heap and Non-Heap memory, in bytes. Briefly,

- JVM Heap memory is used to store Java objects.

- JVM Non-Heap Memory is used by Java to store loaded classes and related meta-data.
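The byte counts are easier to read as a ratio of used to maximum memory. The following sketch computes that ratio from the response shape shown above. The function name usageRatio is hypothetical, and returning null when max is not positive is a defensive assumption.

```javascript
// Sketch only: fraction of the maximum that is currently in use.
function usageRatio(usage) {
  return usage.max > 0 ? usage.used / usage.max : null;
}

// Values copied from the example output above.
const memoryHealth = {
  heapMemoryUsage: { init: 1073741824, used: 88538392, committed: 1037959168, max: 1037959168 },
  nonHeapMemoryUsage: { init: 24313856, used: 69255024, committed: 69664768, max: 224395264 }
};

const heapPercent = (usageRatio(memoryHealth.heapMemoryUsage) * 100).toFixed(1);
console.log("Heap in use: " + heapPercent + "%");
```

For the example output, the heap is under a tenth full, so this instance has ample headroom.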

2.3.3.3. JDBC Health Check

With the following REST call, you can get basic information about the status of the configured internal JDBC database:

$ curl \

--cacert self-signed.crt \

--header "X-OpenIDM-Username: openidm-admin" \

--header "X-OpenIDM-Password: openidm-admin" \

--request GET \

"https://localhost:8443/openidm/health/jdbc"

{

"_id" : "",

"_rev" : "",

"com.jolbox.bonecp:type=BoneCP-547b64b7-6765-4915-937b-e940cf74ed82" : {

"connectionWaitTimeAvg" : 0.010752126251079611,

"statementExecuteTimeAvg" : 0.8933237895474139,

"statementPrepareTimeAvg" : 8.45602988656923,

"totalLeasedConnections" : 0,

"totalFreeConnections" : 7,

"totalCreatedConnections" : 7,

"cacheHits" : 0,

"cacheMiss" : 0,

"statementsCached" : 0,

"statementsPrepared" : 27840,

"connectionsRequested" : 19683,

"cumulativeConnectionWaitTime" : 211,

"cumulativeStatementExecutionTime" : 24870,

"cumulativeStatementPrepareTime" : 3292,

"cacheHitRatio" : 0.0,

"statementsExecuted" : 27840

},

"com.jolbox.bonecp:type=BoneCP-856008a7-3553-4756-8ae7-0d3e244708fe" : {

"connectionWaitTimeAvg" : 0.015448195945945946,

"statementExecuteTimeAvg" : 0.6599738874458875,

"statementPrepareTimeAvg" : 1.4170901010615866,

"totalLeasedConnections" : 0,

"totalFreeConnections" : 1,

"totalCreatedConnections" : 1,

"cacheHits" : 0,

"cacheMiss" : 0,

"statementsCached" : 0,

"statementsPrepared" : 153,

"connectionsRequested" : 148,

"cumulativeConnectionWaitTime" : 2,

"cumulativeStatementExecutionTime" : 152,

"cumulativeStatementPrepareTime" : 107,

"cacheHitRatio" : 0.0,

"statementsExecuted" : 231

}

}

The statistics shown relate to the time and connections related to SQL statements.
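Each BoneCP pool appears in the response under its own key, so a script that watches connection usage has to iterate over those keys. The sketch below extracts the connection counts per pool. The function name summarizePools is hypothetical, and the noFreeConnections flag is an illustrative heuristic rather than anything OpenIDM reports.

```javascript
// Sketch only: one summary entry per BoneCP pool found in the response.
function summarizePools(health) {
  return Object.keys(health)
    .filter((key) => key.startsWith("com.jolbox.bonecp:"))
    .map((key) => {
      const pool = health[key];
      return {
        pool: key,
        leased: pool.totalLeasedConnections,
        free: pool.totalFreeConnections,
        created: pool.totalCreatedConnections,
        // Hypothetical heuristic: connections exist but none are free.
        noFreeConnections: pool.totalCreatedConnections > 0 && pool.totalFreeConnections === 0
      };
    });
}

// Abbreviated copy of the example output above.
const jdbcHealth = {
  "_id": "",
  "_rev": "",
  "com.jolbox.bonecp:type=BoneCP-547b64b7-6765-4915-937b-e940cf74ed82": {
    totalLeasedConnections: 0, totalFreeConnections: 7, totalCreatedConnections: 7
  },
  "com.jolbox.bonecp:type=BoneCP-856008a7-3553-4756-8ae7-0d3e244708fe": {
    totalLeasedConnections: 0, totalFreeConnections: 1, totalCreatedConnections: 1
  }
};
console.log(summarizePools(jdbcHealth));
```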

Note

To check the health of a JDBC repository, you need to make two changes to your configuration:

Install a JDBC repository, as described in "Installing a Repository For Production" in the Installation Guide.

Open the boot.properties file in your project-dir/conf/boot directory, and enable the statistics MBean for the BoneCP JDBC connection pool:

openidm.bonecp.statistics.enabled=true

2.3.3.4. Reconciliation Health Check

With the following REST call, you can get basic information about the system demands related to reconciliation:

$ curl \

--cacert self-signed.crt \

--header "X-OpenIDM-Username: openidm-admin" \

--header "X-OpenIDM-Password: openidm-admin" \

--request GET \

"https://localhost:8443/openidm/health/recon"

{

"_id" : "",

"_rev" : "",

"activeThreads" : 1,

"corePoolSize" : 10,

"largestPoolSize" : 1,

"maximumPoolSize" : 10,

"currentPoolSize" : 1

}

From the output, you can review the number of active threads used by the reconciliation, as well as the available thread pool.
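One way to read these numbers is as headroom: the difference between the maximum pool size and the threads currently active. The sketch below computes that difference from the response shape shown above. The function name reconHeadroom is hypothetical.

```javascript
// Sketch only: threads still available before the pool reaches its maximum.
function reconHeadroom(recon) {
  return Math.max(0, recon.maximumPoolSize - recon.activeThreads);
}

// Values copied from the example output above.
const reconHealth = {
  activeThreads: 1,
  corePoolSize: 10,
  largestPoolSize: 1,
  maximumPoolSize: 10,
  currentPoolSize: 1
};
console.log(reconHeadroom(reconHealth)); // 9
```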

2.3.4. Customizing Health Check Scripts

You can extend or override the default information that is provided by creating your own script file and its corresponding configuration file in openidm/conf/info-name.json. Custom script files can be located anywhere, although a best practice is to place them in openidm/script/info. A sample customized script file for extending the default ping service is provided in openidm/samples/infoservice/script/info/customping.js. The corresponding configuration file is provided in openidm/samples/infoservice/conf/info-customping.json.

The configuration file has the following syntax:

{

"infocontext" : "ping",

"type" : "text/javascript",

"file" : "script/info/customping.js"

}

The parameters in the configuration file are as follows:

- infocontext specifies the relative name of the info endpoint under the info context. The information can be accessed over REST at this endpoint. For example, setting infocontext to mycontext/myendpoint would make the information accessible over REST at https://localhost:8443/openidm/info/mycontext/myendpoint.

- type specifies the type of the information source. JavaScript ("type" : "text/javascript") and Groovy ("type" : "groovy") are supported.

- file specifies the path to the JavaScript or Groovy file, if you do not provide a "source" parameter.

- source specifies the actual JavaScript or Groovy script, if you have not provided a "file" parameter.

Additional properties can be passed to the script as depicted in this configuration file (openidm/samples/infoservice/conf/info-name.json).

Script files in openidm/samples/infoservice/script/info/ have access to the following objects:

- request - the request details, including the method called and any parameters passed.

- healthinfo - the current health status of the system.

- openidm - access to the JSON resource API.

- Any additional properties that are depicted in the configuration file (openidm/samples/infoservice/conf/info-name.json).
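To show how a custom info script might use the request and healthinfo objects, here is a minimal, hypothetical sketch. It is not the content of the bundled customping.js sample. In OpenIDM these objects are supplied by the info service, whereas here they are passed as function parameters with stand-in values so that the sketch runs on its own. The "read" method name and the added ready property are assumptions.

```javascript
// Sketch only: logic that a custom ping script might contain.
// request and healthinfo are parameters here; OpenIDM would provide them.
function customPing(request, healthinfo) {
  // Assumption: read requests report their method as "read".
  if (request.method !== "read") {
    throw new Error("Unsupported operation on ping info service: " + request.method);
  }
  // Return the built-in health status plus one illustrative extra field.
  return Object.assign({}, healthinfo, {
    ready: healthinfo.state === "ACTIVE_READY" // hypothetical property
  });
}

// Stand-ins for the objects that OpenIDM would inject.
const sampleRequest = { method: "read" };
const sampleHealth = { state: "ACTIVE_READY", shortDesc: "OpenIDM ready" };
console.log(customPing(sampleRequest, sampleHealth));
```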

2.3.5. Verifying the State of Health Check Service Modules

The configurable OpenIDM health check service can verify the status of required modules and services for an operational system. During system startup, OpenIDM checks that these modules and services are available and reports on whether any requirements for an operational system have not been met. If dynamic configuration changes are made, OpenIDM rechecks that the required modules and services are functioning, to allow ongoing monitoring of system operation.

OpenIDM checks all required modules. Examples of those modules are shown here:

"org.forgerock.openicf.framework.connector-framework"

"org.forgerock.openicf.framework.connector-framework-internal"

"org.forgerock.openicf.framework.connector-framework-osgi"

"org.forgerock.openidm.audit"

"org.forgerock.openidm.core"

"org.forgerock.openidm.enhanced-config"

"org.forgerock.openidm.external-email"

...

"org.forgerock.openidm.system"

"org.forgerock.openidm.ui"

"org.forgerock.openidm.util"

"org.forgerock.commons.org.forgerock.json.resource"

"org.forgerock.commons.org.forgerock.json.resource.restlet"

"org.forgerock.commons.org.forgerock.restlet"

"org.forgerock.commons.org.forgerock.util"

"org.forgerock.openidm.security-jetty"

"org.forgerock.openidm.jetty-fragment"

"org.forgerock.openidm.quartz-fragment"

"org.ops4j.pax.web.pax-web-extender-whiteboard"

"org.forgerock.openidm.scheduler"

"org.ops4j.pax.web.pax-web-jetty-bundle"

"org.forgerock.openidm.repo-jdbc"

"org.forgerock.openidm.repo-orientdb"

"org.forgerock.openidm.config"

"org.forgerock.openidm.crypto"

OpenIDM checks all required services. Examples of those services are shown here:

"org.forgerock.openidm.config"

"org.forgerock.openidm.provisioner"

"org.forgerock.openidm.provisioner.openicf.connectorinfoprovider"

"org.forgerock.openidm.external.rest"

"org.forgerock.openidm.audit"

"org.forgerock.openidm.policy"

"org.forgerock.openidm.managed"

"org.forgerock.openidm.script"

"org.forgerock.openidm.crypto"

"org.forgerock.openidm.recon"

"org.forgerock.openidm.info"

"org.forgerock.openidm.router"

"org.forgerock.openidm.scheduler"

"org.forgerock.openidm.scope"

"org.forgerock.openidm.taskscanner"

You can replace the list of required modules and services, or add to it, by adding the following lines to your project's conf/boot/boot.properties file. Bundles and services are specified as a list of symbolic names, separated by commas:

- openidm.healthservice.reqbundles - overrides the default required bundles.

- openidm.healthservice.reqservices - overrides the default required services.

- openidm.healthservice.additionalreqbundles - specifies required bundles (in addition to the default list).

- openidm.healthservice.additionalreqservices - specifies required services (in addition to the default list).

By default, OpenIDM gives the system 15 seconds to start up all the required bundles and services, before the system readiness is assessed. Note that this is not the total start time, but the time required to complete the service startup after the framework has started. You can change this default by setting the value of the servicestartmax property (in milliseconds) in your project's conf/boot/boot.properties file. This example sets the startup time to five seconds:

openidm.healthservice.servicestartmax=5000
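The difference between the override and the additional properties can be sketched as follows. This is an illustration of the documented intent, not OpenIDM's code. The function names parseList and effectiveRequired are hypothetical, com.example.custom-bundle is a made-up symbolic name, and appending the additional entries when an override is also set is an assumption.

```javascript
// Split a comma-separated property value into trimmed, non-empty names.
function parseList(value) {
  return (value || "")
    .split(",")
    .map((name) => name.trim())
    .filter((name) => name.length > 0);
}

// Sketch only: computes the list that would be checked.
// props is a hypothetical map of boot.properties keys to values.
function effectiveRequired(defaults, props, overrideKey, additionalKey) {
  const required = props[overrideKey] !== undefined
    ? parseList(props[overrideKey]) // an override replaces the defaults
    : defaults.slice();
  for (const name of parseList(props[additionalKey])) {
    if (!required.includes(name)) {
      required.push(name); // additions extend the list
    }
  }
  return required;
}

const defaultBundles = ["org.forgerock.openidm.core", "org.forgerock.openidm.audit"];
const bootProps = {
  // com.example.custom-bundle is a made-up symbolic name.
  "openidm.healthservice.additionalreqbundles": "com.example.custom-bundle"
};
console.log(effectiveRequired(
  defaultBundles,
  bootProps,
  "openidm.healthservice.reqbundles",
  "openidm.healthservice.additionalreqbundles"
));
```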

2.4. Displaying Information About Installed Modules

On a running OpenIDM instance, you can list the installed modules and their states by typing the following command in the OSGi console (the output will vary by configuration):

-> scr list

Id State Name

[ 12] [active ] org.forgerock.openidm.endpoint

[ 13] [active ] org.forgerock.openidm.endpoint

[ 14] [active ] org.forgerock.openidm.endpoint

[ 15] [active ] org.forgerock.openidm.endpoint

[ 16] [active ] org.forgerock.openidm.endpoint

...

[ 34] [active ] org.forgerock.openidm.taskscanner

[ 20] [active ] org.forgerock.openidm.external.rest

[ 6] [active ] org.forgerock.openidm.router

[ 33] [active ] org.forgerock.openidm.scheduler

[ 19] [unsatisfied ] org.forgerock.openidm.external.email

[ 11] [active ] org.forgerock.openidm.sync

[ 25] [active ] org.forgerock.openidm.policy

[ 8] [active ] org.forgerock.openidm.script

[ 10] [active ] org.forgerock.openidm.recon

[ 4] [active ] org.forgerock.openidm.http.contextregistrator

[ 1] [active ] org.forgerock.openidm.config

[ 18] [active ] org.forgerock.openidm.endpointservice

[ 30] [unsatisfied ] org.forgerock.openidm.servletfilter

[ 24] [active ] org.forgerock.openidm.infoservice

[ 21] [active ] org.forgerock.openidm.authentication

->
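If you capture this output to a file or a variable, a short script can pick out the components that are not active. The following Python sketch assumes the line layout shown in the preceding listing; the function names are hypothetical and are not part of OpenIDM.

```python
import re

# Matches lines such as "[ 19] [unsatisfied ] org.forgerock.openidm.external.email".
COMPONENT_LINE = re.compile(r"^\[\s*(\d+)\]\s+\[\s*(\w+)\s*\]\s+(\S+)\s*$")


def parse_scr_list(output):
    """Return (id, state, name) tuples parsed from 'scr list' output."""
    components = []
    for line in output.splitlines():
        match = COMPONENT_LINE.match(line.strip())
        if match:
            components.append((int(match.group(1)), match.group(2), match.group(3)))
    return components


def not_active(output):
    """Return the components whose state is anything other than 'active'."""
    return [component for component in parse_scr_list(output) if component[1] != "active"]
```

Header lines and the console prompt do not match the pattern, so they are skipped.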

To display additional information about a particular module or service, run

the following command, substituting the Id of that module

from the preceding list:

-> scr info Id

The following example displays additional information about the router service:

-> scr info 6

ID: 6

Name: org.forgerock.openidm.router

Bundle: org.forgerock.openidm.core (41)

State: active

Default State: enabled

Activation: immediate

Configuration Policy: optional

Activate Method: activate (declared in the descriptor)

Deactivate Method: deactivate (declared in the descriptor)

Modified Method: modified

Services: org.forgerock.json.resource.JsonResource

Service Type: service

Reference: ref_JsonResourceRouterService_ScopeFactory

Satisfied: satisfied

Service Name: org.forgerock.openidm.scope.ScopeFactory

Multiple: single

Optional: mandatory

Policy: dynamic

Properties:

component.id = 6

component.name = org.forgerock.openidm.router

felix.fileinstall.filename = file:/openidm/samples/sample1/conf/router.json

jsonconfig = {

"filters" : [

{

"onRequest" : {

"type" : "text/javascript",

"file" : "bin/defaults/script/router-authz.js"

}

},

{

"onRequest" : {

"type" : "text/javascript",

"file" : "bin/defaults/script/policyFilter.js"

},

"methods" : [

"create",

"update"

]

}

]

}

openidm.restlet.path = /

service.description = OpenIDM internal JSON resource router

service.pid = org.forgerock.openidm.router

service.vendor = ForgeRock AS

->

2.5. Starting OpenIDM in Debug Mode

To debug custom libraries, you can start OpenIDM with the option to use the Java Platform Debugger Architecture (JPDA):

Start OpenIDM with the jpda option:

$ cd /path/to/openidm

$ ./startup.sh jpda

Executing ./startup.sh...

Using OPENIDM_HOME: /path/to/openidm

Using OPENIDM_OPTS: -Xmx1024m -Xms1024m -Denvironment=PROD -Djava.compiler=NONE -Xnoagent -Xdebug -Xrunjdwp:transport=dt_socket,address=5005,server=y,suspend=n

Using LOGGING_CONFIG: -Djava.util.logging.config.file=/path/to/openidm/conf/logging.properties

Listening for transport dt_socket at address: 5005

Using boot properties at /path/to/openidm/conf/boot/boot.properties

-> OpenIDM version "4.0.0" (revision: xxxx)

OpenIDM ready

The relevant JPDA options are outlined in the startup script (startup.sh).

In your IDE, attach a Java debugger to the JVM via socket, on port 5005.

Caution

This interface is internal and subject to change. If you depend on this interface, contact ForgeRock support.
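Before you attach a debugger, you might want to confirm that the debug port is reachable. The following Python sketch is one way to do that; the function name is hypothetical, and the default port simply mirrors the address=5005 setting shown in the startup output. A plain TCP probe like this does not speak the debugger protocol, so the JVM may log a failed handshake when the probe disconnects.

```python
import socket


def debug_port_open(host="localhost", port=5005, timeout=1.0):
    """Return True if a TCP connection to host:port can be opened."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unresolvable host names.
        return False
```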

2.6. Running OpenIDM As a Service on Linux Systems

OpenIDM provides a script that generates an initialization script to run OpenIDM as a service on Linux systems. You can start the script as the root user, or configure it to start during the boot process.

When OpenIDM runs as a service, logs are written to the directory in which OpenIDM was installed.

To run OpenIDM as a service, take the following steps:

If you have not yet installed OpenIDM, follow the procedure described in "Installing OpenIDM Services" in the Installation Guide.

Run the RC script:

$ cd /path/to/openidm/bin

$ ./create-openidm-rc.sh

As a user with administrative privileges, copy the openidm script to the /etc/init.d directory:

$ sudo cp openidm /etc/init.d/

If you run Linux with SELinux enabled, change the file context of the newly copied script with the following command:

$ sudo restorecon /etc/init.d/openidm

You can verify the change to SELinux contexts with the ls -Z /etc/init.d command. For consistency, change the user context to match other scripts in the same directory with the sudo chcon -u system_u /etc/init.d/openidm command.

Run the appropriate commands to add OpenIDM to the list of RC services:

On Red Hat-based systems, run the following commands:

$ sudo chkconfig --add openidm

$ sudo chkconfig openidm on

On Debian/Ubuntu systems, run the following command:

$ sudo update-rc.d openidm defaults

Adding system startup for /etc/init.d/openidm ...

/etc/rc0.d/K20openidm -> ../init.d/openidm

/etc/rc1.d/K20openidm -> ../init.d/openidm

/etc/rc6.d/K20openidm -> ../init.d/openidm

/etc/rc2.d/S20openidm -> ../init.d/openidm

/etc/rc3.d/S20openidm -> ../init.d/openidm

/etc/rc4.d/S20openidm -> ../init.d/openidm

/etc/rc5.d/S20openidm -> ../init.d/openidm

Note the output, as Debian/Ubuntu adds start and kill scripts to appropriate runlevels.

When you run the command, you may get the following warning message:

update-rc.d: warning: /etc/init.d/openidm missing LSB information.

You can safely ignore that message.

As an administrative user, start the OpenIDM service:

$ sudo /etc/init.d/openidm start

Alternatively, reboot the system to start the OpenIDM service automatically.

(Optional) The following commands stop and restart the service:

$ sudo /etc/init.d/openidm stop

$ sudo /etc/init.d/openidm restart

If you have set up a deployment of OpenIDM in a custom directory, such as

/path/to/openidm/production, you can modify the

/etc/init.d/openidm script.

Open the openidm script in a text editor and navigate to

the START_CMD line.

At the end of the command, you should see the following line:

org.forgerock.commons.launcher.Main -c bin/launcher.json > logs/server.out 2>&1 &"

Include the path to the production directory. In this case, you would add -p production as shown:

org.forgerock.commons.launcher.Main -c bin/launcher.json -p production > logs/server.out 2>&1 &"

Save the openidm script file in the

/etc/init.d directory. The

sudo /etc/init.d/openidm start command should now start

OpenIDM with the files in your production subdirectory.
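If you maintain several deployments, you could script this edit rather than make it by hand. The following Python sketch shows the string change only, under the assumption that the START_CMD line contains the -c bin/launcher.json argument exactly as shown above; the function name is hypothetical.

```python
def add_project_dir(start_cmd_line, project_dir):
    """Insert '-p <project_dir>' after the launcher configuration argument."""
    marker = "-c bin/launcher.json"
    if marker not in start_cmd_line:
        raise ValueError("launcher argument not found: " + marker)
    if " -p " in start_cmd_line:
        # Leave a line that already names a project directory untouched.
        return start_cmd_line
    return start_cmd_line.replace(marker, marker + " -p " + project_dir, 1)
```

Applying the function twice returns the same line, so the edit is safe to repeat.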

Chapter 3. OpenIDM Command-Line Interface

This chapter describes the basic command-line interface provided with OpenIDM. The command-line interface includes a number of utilities for managing an OpenIDM instance.

All of the utilities are subcommands of the cli.sh

(UNIX) or cli.bat (Windows) scripts. To use the utilities,

you can either run them as subcommands, or launch the cli

script first, and then run the utility. For example, to run the

encrypt utility on a UNIX system:

$ cd /path/to/openidm

$ ./cli.sh

Using boot properties at /path/to/openidm/conf/boot/boot.properties

openidm# encrypt ....

or

$ cd /path/to/openidm

$ ./cli.sh encrypt ...

By default, the command-line utilities run with the properties defined in your

project's conf/boot/boot.properties file.

If you run the cli.sh command by itself, it opens an OpenIDM-specific shell prompt:

openidm#

The startup and shutdown scripts are not discussed in this chapter. For information about these scripts, see "Starting and Stopping OpenIDM".

The following sections describe the subcommands and their use. Examples assume that you are running the commands on a UNIX system. For Windows systems, use cli.bat instead of cli.sh.

For a list of subcommands available from the openidm#

prompt, run the cli.sh help command. The

help and exit options shown below are

self-explanatory. The other subcommands are explained in the subsections

that follow:

local:keytool Export or import a SecretKeyEntry.

The Java Keytool does not allow for exporting or importing SecretKeyEntries.

local:encrypt Encrypt the input string.

local:secureHash Hash the input string.

local:validate Validates all json configuration files in the configuration

(default: /conf) folder.

basic:help Displays available commands.

basic:exit Exit from the console.

remote:update Update the system with the provided update file.

remote:configureconnector Generate connector configuration.

remote:configexport Exports all configurations.

remote:configimport Imports the configuration set from local file/directory.

The configexport, configimport, and configureconnector subcommands support up to four options:

- -u or --user USER[:PASSWORD]

Allows you to specify the server user and password. Specifying a username is mandatory. If you do not specify a username, the following error is output to the OSGi console:

Remote operation failed: Unauthorized.

If you do not specify a password, you are prompted for one. This option is used by all three subcommands.

- --url URL

The URL of the OpenIDM REST service. The default URL is http://localhost:8080/openidm/. This can be used to import configuration files from a remote running instance of OpenIDM. This option is used by all three subcommands.

- -P or --port PORT

The port number associated with the OpenIDM REST service. If specified, this option overrides any port number specified with the --url option. The default port is 8080. This option is used by all three subcommands.

- -r or --replaceall or --replaceAll

Replaces the entire list of configuration files with the files in the specified backup directory. This option is used with only the configimport command.
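To see how these options combine, consider the following Python sketch, which assembles an argument list for the two subcommands that take a location. It uses only the flags described above; the function name and its parameters are hypothetical, and the sketch does not run the command.

```python
def build_cli_args(subcommand, user, location, url=None, port=None, replace_all=False):
    """Build an argument list for cli.sh configexport or configimport."""
    if subcommand not in ("configexport", "configimport"):
        raise ValueError("unsupported subcommand: " + subcommand)
    if not user:
        raise ValueError("a username is mandatory")
    if replace_all and subcommand != "configimport":
        raise ValueError("--replaceAll applies only to configimport")
    args = ["./cli.sh", subcommand, "--user", user]
    if url is not None:
        args += ["--url", url]
    if port is not None:
        # A port given here overrides any port in the URL.
        args += ["--port", str(port)]
    if replace_all:
        args.append("--replaceAll")
    args.append(location)
    return args
```

Passing the result to a process launcher as a list, rather than as one string, avoids shell quoting problems with passwords and paths.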

3.1. Using the configexport Subcommand

The configexport subcommand exports all configuration objects to a specified location, enabling you to reuse a system configuration in another environment. For example, you can test a configuration in a development environment, then export it and import it into a production environment. This subcommand also enables you to inspect the active configuration of an OpenIDM instance.

OpenIDM must be running when you execute this command.

Usage is as follows:

$ ./cli.sh configexport --user username:password export-location

For example:

$ ./cli.sh configexport --user openidm-admin:openidm-admin /tmp/conf

On Windows systems, the export-location must be provided in quotation marks, for example:

C:\openidm\cli.bat configexport --user openidm-admin:openidm-admin "C:\temp\openidm"

Configuration objects are exported as .json files to the

specified directory. The command creates the directory if needed.

Configuration files that are present in this directory are renamed as backup

files, with a timestamp, for example,

audit.json.2014-02-19T12-00-28.bkp, and are not

overwritten. The following configuration objects are exported:

- The internal repository table configuration (repo.orientdb.json or repo.jdbc.json) and the datasource connection configuration, for JDBC repositories (datasource.jdbc-default.json)

- Default and custom configuration directories (script.json)

- The log configuration (audit.json)

- The authentication configuration (authentication.json)

- The cluster configuration (cluster.json)

- The configuration of a connected SMTP email server (external.email.json)

- Custom configuration information (info-name.json)

- The managed object configuration (managed.json)

- The connector configuration (provisioner.openicf-*.json)

- The router service configuration (router.json)

- The scheduler service configuration (scheduler.json)

- Any configured schedules (schedule-*.json)

- Standard knowledge-based authentication questions (selfservice.kba.json)

- The synchronization mapping configuration (sync.json)

- If workflows are defined, the configuration of the workflow engine (workflow.json) and the workflow access configuration (process-access.json)

- Any configuration files related to the user interface (ui-*.json)

- The configuration of any custom endpoints (endpoint-*.json)

- The configuration of servlet filters (servletfilter-*.json)

- The policy configuration (policy.json)
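If you export to the same directory repeatedly, the timestamped backup files accumulate. The following Python sketch splits a backup file name into the original file name and the time of the backup. The timestamp pattern is inferred from the single example name given in this section (audit.json.2014-02-19T12-00-28.bkp), so treat it as an assumption; the function name is hypothetical.

```python
from datetime import datetime


def parse_backup_name(filename):
    """Split 'audit.json.2014-02-19T12-00-28.bkp' into name and timestamp."""
    if not filename.endswith(".bkp"):
        raise ValueError("not a backup file: " + filename)
    stem = filename[: -len(".bkp")]
    # The timestamp is the last dot-separated component before the suffix.
    original, _, stamp = stem.rpartition(".")
    if not original:
        raise ValueError("no timestamp found in: " + filename)
    return original, datetime.strptime(stamp, "%Y-%m-%dT%H-%M-%S")
```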

3.2. Using the configimport Subcommand

The configimport subcommand imports configuration objects from the specified directory, enabling you to reuse a system configuration from another environment. For example, you can test a configuration in a development environment, then export it and import it into a production environment.

The command updates the existing configuration from the import-location over the OpenIDM REST interface. By default, if configuration objects are present in the import-location and not in the existing configuration, these objects are added. If configuration objects are present in the existing configuration but not in the import-location, these objects are left untouched.

If you include the --replaceAll parameter, the

command wipes out the existing configuration and replaces it with the

configuration in the import-location. Objects in

the existing configuration that are not present in the

import-location are deleted.
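The difference between the two modes can be modeled with plain dictionaries. The following Python sketch is only a model of the behavior described above, with each configuration object represented as a dictionary entry; it does not call OpenIDM, and the function name is hypothetical.

```python
def apply_import(existing, incoming, replace_all=False):
    """Model the configuration that results from an import."""
    if replace_all:
        # --replaceAll: only objects from the import location survive.
        return dict(incoming)
    merged = dict(existing)
    # Default: imported objects are added or updated; all others are kept.
    merged.update(incoming)
    return merged
```

With existing objects audit and sync, and an import location that holds sync and managed, the default mode results in audit, sync, and managed, whereas --replaceAll results in sync and managed only.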

Usage is as follows:

$ ./cli.sh configimport --user username:password [--replaceAll] import-location

For example:

$ ./cli.sh configimport --user openidm-admin:openidm-admin --replaceAll /tmp/conf

On Windows systems, the import-location must be provided in quotation marks, for example:

C:\openidm\cli.bat configimport --user openidm-admin:openidm-admin --replaceAll "C:\temp\openidm"

Configuration objects are imported as .json files from the

specified directory to the conf directory. The

configuration objects that are imported are the same as those for the

export command, described in the previous section.

3.3. Using the configureconnector Subcommand

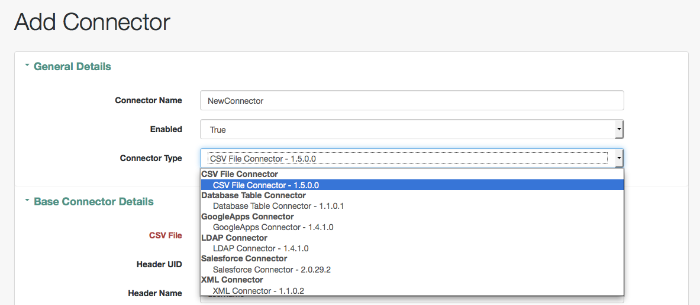

The configureconnector subcommand generates a configuration for an OpenICF connector.

Usage is as follows:

$ ./cli.sh configureconnector --user username:password --name connector-name

Select the type of connector that you want to configure. The following example configures a new XML connector:

$ ./cli.sh configureconnector --user openidm-admin:openidm-admin --name myXmlConnector

Starting shell in /path/to/openidm

Using boot properties at /path/to/openidm/conf/boot/boot.properties

0. CSV File Connector version 1.5.0.0

1. Database Table Connector version 1.1.0.1

2. Scripted Poolable Groovy Connector version 1.4.2.0

3. Scripted Groovy Connector version 1.4.2.0

4. Scripted CREST Connector version 1.4.2.0

5. Scripted SQL Connector version 1.4.2.0

6. Scripted REST Connector version 1.4.2.0

7. LDAP Connector version 1.4.1.0

8. XML Connector version 1.1.0.2

9. Exit

Select [0..9]: 8

Edit the configuration file and run the command again. The configuration was saved to /openidm/temp/provisioner.openicf-myXmlConnector.json

The basic configuration is saved in a file named

/openidm/temp/provisioner.openicf-connector-name.json.

Edit the configurationProperties parameter in this file to

complete the connector configuration. For an XML connector, you can use the

schema definitions in Sample 1 for an example configuration:

"configurationProperties" : {

"xmlFilePath" : "samples/sample1/data/xmlConnectorData.xml",

"createFileIfNotExists" : false,

"xsdFilePath" : "samples/sample1/data/resource-schema-extension.xsd",

"xsdIcfFilePath" : "samples/sample1/data/resource-schema-1.xsd"

},

For more information about the connector configuration properties, see "Configuring Connectors".
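If you prefer to script the edit, the following Python sketch merges new values into the configurationProperties object of a generated file. It assumes that the file is plain JSON and that configurationProperties, if present, is a JSON object. The function name and the example property value are hypothetical.

```python
import json


def set_configuration_properties(path, properties):
    """Merge properties into configurationProperties in a provisioner file."""
    with open(path, "r", encoding="utf-8") as handle:
        config = json.load(handle)
    # Create the object if the generated file does not contain it yet.
    config.setdefault("configurationProperties", {}).update(properties)
    with open(path, "w", encoding="utf-8") as handle:
        json.dump(config, handle, indent=4)
        handle.write("\n")
    return config
```

For example, set_configuration_properties("/openidm/temp/provisioner.openicf-myXmlConnector.json", {"createFileIfNotExists": False}) updates one property and leaves the rest of the file content unchanged, apart from formatting.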

When you have modified the file, run the configureconnector command again so that OpenIDM can pick up the new connector configuration:

$ ./cli.sh configureconnector --user openidm-admin:openidm-admin --name myXmlConnector

Executing ./cli.sh...

Starting shell in /path/to/openidm

Using boot properties at /path/to/openidm/conf/boot/boot.properties

Configuration was found and read from: /path/to/openidm/temp/provisioner.openicf-myXmlConnector.json

You can now copy the new

provisioner.openicf-myXmlConnector.json file to the

conf/ subdirectory.

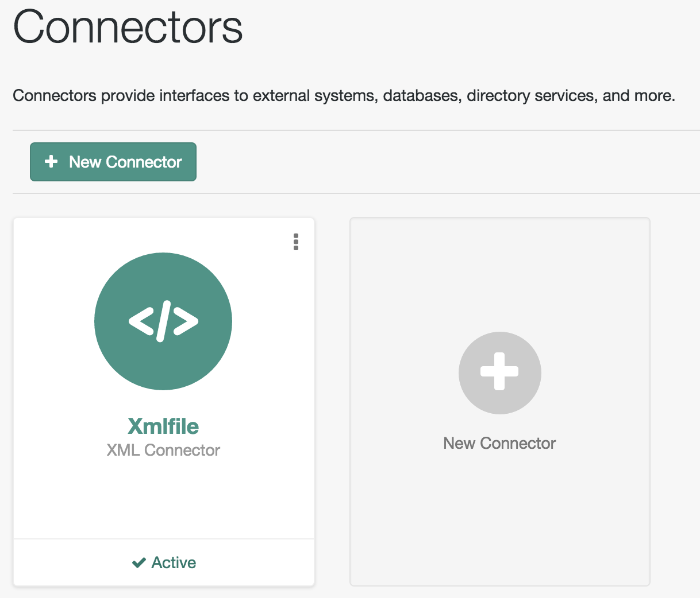

You can also configure connectors over the REST interface, or through the Admin UI. For more information, see "Creating Default Connector Configurations" and "Adding New Connectors from the Admin UI".

3.4. Using the encrypt Subcommand

The encrypt subcommand encrypts an input string, or JSON object, provided at the command line. This subcommand can be used to encrypt passwords, or other sensitive data, to be stored in the OpenIDM repository. The encrypted value is output to standard output and provides details of the cryptography key that is used to encrypt the data.

Usage is as follows:

$ ./cli.sh encrypt [-j] string

The -j option specifies that the string to be

encrypted is a JSON object. If you do not enter the string as part of the

command, the command prompts for the string to be encrypted. If you enter

the string as part of the command, any special characters, for example

quotation marks, must be escaped.
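When you build the command from a script, you can let a library handle the escaping. The following Python sketch uses the standard shlex.quote function to produce a command line for a POSIX shell. It wraps the argument in single quotes rather than adding backslashes, which has the same effect in such shells. The function name is hypothetical.

```python
import json
import shlex


def encrypt_command(value, as_json=False):
    """Return a shell command line that passes value to cli.sh encrypt."""
    parts = ["./cli.sh", "encrypt"]
    if as_json:
        parts.append("-j")
        # Serialize first so that the argument is a valid JSON object.
        value = json.dumps(value)
    parts.append(value)
    # shlex.quote leaves safe strings alone and single-quotes the rest.
    return " ".join(shlex.quote(part) for part in parts)
```

If you omit the string and let the command prompt for it, no escaping is needed, and the value does not appear in your shell history.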

The following example encrypts a normal string value:

$ ./cli.sh encrypt mypassword

Executing ./cli.sh

Starting shell in /path/to/openidm

Using boot properties at /path/to/openidm/conf/boot/boot.properties

Activating cryptography service of type: JCEKS provider: location: security/keystore.jceks

Available cryptography key: openidm-sym-default

Available cryptography key: openidm-localhost

CryptoService is initialized with 2 keys.

-----BEGIN ENCRYPTED VALUE-----

{

"$crypto" : {

"value" : {

"iv" : "M2913T5ZADlC2ip2imeOyg==",

"data" : "DZAAAM1nKjQM1qpLwh3BgA==",

"cipher" : "AES/CBC/PKCS5Padding",

"key" : "openidm-sym-default"

},

"type" : "x-simple-encryption"

}

}

------END ENCRYPTED VALUE------

The following example encrypts a JSON object. The input string must be a valid JSON object:

$ ./cli.sh encrypt -j {\"password\":\"myPassw0rd\"}

Starting shell in /path/to/openidm

Using boot properties at /path/to/openidm/conf/boot/boot.properties

Activating cryptography service of type: JCEKS provider: location: security/keystore.jceks

Available cryptography key: openidm-sym-default

Available cryptography key: openidm-localhost

CryptoService is initialized with 2 keys.

-----BEGIN ENCRYPTED VALUE-----

{

"$crypto" : {

"value" : {

"iv" : "M2913T5ZADlC2ip2imeOyg==",

"data" : "DZAAAM1nKjQM1qpLwh3BgA==",

"cipher" : "AES/CBC/PKCS5Padding",

"key" : "openidm-sym-default"

},

"type" : "x-simple-encryption"

}

}

------END ENCRYPTED VALUE------

The following example prompts for a JSON object to be encrypted. In this case, you do not need to escape the special characters:

$ ./cli.sh encrypt -j

Using boot properties at /path/to/openidm/conf/boot/boot.properties

Enter the Json value

> Press ctrl-D to finish input

Start data input:

{"password":"myPassw0rd"}

^D

Activating cryptography service of type: JCEKS provider: location: security/keystore.jceks

Available cryptography key: openidm-sym-default

Available cryptography key: openidm-localhost

CryptoService is initialized with 2 keys.

-----BEGIN ENCRYPTED VALUE-----

{

"$crypto" : {

"value" : {

"iv" : "6e0RK8/4F1EK5FzSZHwNYQ==",

"data" : "gwHSdDTmzmUXeD6Gtfn6JFC8cAUiksiAGfvzTsdnAqQ=",

"cipher" : "AES/CBC/PKCS5Padding",

"key" : "openidm-sym-default"

},

"type" : "x-simple-encryption"

}

}

------END ENCRYPTED VALUE------

3.5. Using the secureHash Subcommand

The secureHash subcommand hashes an input string, or JSON object, using the specified hash algorithm. This subcommand can be used to hash password values, or other sensitive data, to be stored in the OpenIDM repository. The hashed value is output to standard output and provides details of the algorithm that was used to hash the data.

Usage is as follows:

$ ./cli.sh secureHash --algorithm [-j] string

The -a or --algorithm option specifies the

hash algorithm to use. OpenIDM supports the following hash algorithms:

MD5, SHA-1, SHA-256,

SHA-384, and SHA-512. If you do not

specify a hash algorithm, SHA-256 is used.

The -j option specifies that the string to be hashed is a

JSON object. If you do not enter the string as part of the command, the

command prompts for the string to be hashed. If you enter the string as part

of the command, any special characters, for example quotation marks, must be

escaped.
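The examples in this section report the output type as salted-hash. As a hedged illustration of what that may imply, the following Python sketch decodes a data value and compares its length with the digest size of the named algorithm. For the SHA-1 password example in this section, the decoded value is 36 bytes, whereas a SHA-1 digest is 20 bytes. Reading the remaining 16 bytes as salt is an assumption; this guide does not describe the layout. The function name is hypothetical.

```python
import base64
import hashlib


def describe_hashed_value(algorithm, data_b64):
    """Compare the decoded length of a hashed value with the digest size."""
    raw = base64.b64decode(data_b64)
    # hashlib uses lowercase names without hyphens, for example "sha1".
    digest_size = hashlib.new(algorithm.replace("-", "").lower()).digest_size
    return {"total": len(raw), "digest": digest_size, "extra": len(raw) - digest_size}
```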

The following example hashes a password value (mypassword)

using the SHA-1 algorithm:

$ ./cli.sh secureHash --algorithm SHA-1 mypassword

Executing ./cli.sh...

Starting shell in /path/to/openidm

Using boot properties at /path/to/openidm/conf/boot/boot.properties

Activating cryptography service of type: JCEKS provider: location: security/keystore.jceks

Available cryptography key: openidm-sym-default

Available cryptography key: openidm-localhost

CryptoService is initialized with 2 keys.

-----BEGIN HASHED VALUE-----

{

"$crypto" : {

"value" : {

"algorithm" : "SHA-1",

"data" : "YNBVgtR/jlOaMm01W8xnCBAj2J+x73iFpbhgMEXl7cOsCeWm"

},

"type" : "salted-hash"

}

}

------END HASHED VALUE------

The following example hashes a JSON object. The input string must be a valid JSON object:

$ ./cli.sh secureHash --algorithm SHA-1 -j {\"password\":\"myPassw0rd\"}

Executing ./cli.sh...

Starting shell in /path/to/openidm

Using boot properties at /path/to/openidm/conf/boot/boot.properties

Activating cryptography service of type: JCEKS provider: location: security/keystore.jceks

Available cryptography key: openidm-sym-default

Available cryptography key: openidm-localhost

CryptoService is initialized with 2 keys.

-----BEGIN HASHED VALUE-----

{

"$crypto" : {

"value" : {

"algorithm" : "SHA-1",

"data" : "ztpt8rEbeqvLXUE3asgA3uf5gJ77I3cED2OvOIxd5bi1eHtG"

},

"type" : "salted-hash"

}

}

------END HASHED VALUE------

The following example prompts for a JSON object to be hashed. In this case, you do not need to escape the special characters:

$ ./cli.sh secureHash --algorithm SHA-1 -j

Using boot properties at /path/to/openidm/conf/boot/boot.properties

Enter the Json value

> Press ctrl-D to finish input

Start data input:

{"password":"myPassw0rd"}

^D

Activating cryptography service of type: JCEKS provider: location: security/keystore.jceks

Available cryptography key: openidm-sym-default

Available cryptography key: openidm-localhost

CryptoService is initialized with 2 keys.

-----BEGIN HASHED VALUE-----

{

"$crypto" : {

"value" : {

"algorithm" : "SHA-1",

"data" : "ztpt8rEbeqvLXUE3asgA3uf5gJ77I3cED2OvOIxd5bi1eHtG"

},

"type" : "salted-hash"

}

}

------END HASHED VALUE------

3.6. Using the keytool Subcommand

The keytool subcommand exports or imports secret key values.

The Java keytool command enables you to export and import public keys and certificates, but not secret or symmetric keys. The OpenIDM keytool subcommand provides this functionality.

Usage is as follows:

$ ./cli.sh keytool [--export, --import] alias

For example, to export the default OpenIDM symmetric key, run the following command:

$ ./cli.sh keytool --export openidm-sym-default

Using boot properties at /openidm/conf/boot/boot.properties

Use KeyStore from: /openidm/security/keystore.jceks

Please enter the password:

[OK] Secret key entry with algorithm AES

AES:606d80ae316be58e94439f91ad8ce1c0

The default keystore password is changeit. For security

reasons, you must change this password in a production

environment. For information about changing the keystore password, see

"Change the Default Keystore Password".

To import a new secret key named my-new-key, run the following command:

$ ./cli.sh keytool --import my-new-key

Using boot properties at /openidm/conf/boot/boot.properties

Use KeyStore from: /openidm/security/keystore.jceks

Please enter the password:

Enter the key:

AES:606d80ae316be58e94439f91ad8ce1c0
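The key value in these examples takes the form of an algorithm name, a colon, and a hexadecimal string. The following Python sketch splits such a value and reports the key length, which can help you check a key before you import it. The format is inferred from the examples above, and the function name is hypothetical.

```python
def parse_exported_key(text):
    """Split 'AES:606d...' into the algorithm name and the raw key bytes."""
    algorithm, separator, hex_value = text.strip().partition(":")
    if not separator or not algorithm or not hex_value:
        raise ValueError("expected ALGORITHM:HEX, got: " + text)
    # bytes.fromhex raises ValueError if the string is not valid hexadecimal.
    return algorithm, bytes.fromhex(hex_value)
```

The example key consists of 32 hexadecimal characters, which decode to 16 bytes, or a 128-bit AES key.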

If a secret key of that name already exists, OpenIDM returns the following error:

"KeyStore contains a key with this alias"

3.7. Using the validate Subcommand

The validate subcommand validates all .json configuration

files in your project's conf/ directory.

Usage is as follows:

$ ./cli.sh validate

Executing ./cli.sh

Starting shell in /path/to/openidm

Using boot properties at /path/to/openidm/conf/boot/boot.properties

...................................................................

[Validating] Load JSON configuration files from:

[Validating] /path/to/openidm/conf

[Validating] audit.json .................................. SUCCESS

[Validating] authentication.json ......................... SUCCESS

...

[Validating] sync.json ................................... SUCCESS

[Validating] ui-configuration.json ....................... SUCCESS

[Validating] ui-countries.json ........................... SUCCESS

[Validating] ui-secquestions.json ........................ SUCCESS

[Validating] workflow.json ............................... SUCCESS
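For a quick check in an environment where cli.sh is not available, such as a build pipeline, you could approximate the syntax part of this validation. The following Python sketch parses every .json file in a directory with a strict JSON parser. It is not the implementation of the validate subcommand and might accept or reject files differently; the function name is hypothetical.

```python
import json
from pathlib import Path


def check_json_syntax(conf_dir):
    """Return {file name: error message or None} for each .json file."""
    results = {}
    for path in sorted(Path(conf_dir).glob("*.json")):
        try:
            json.loads(path.read_text(encoding="utf-8"))
            results[path.name] = None
        except ValueError as error:
            # json.JSONDecodeError is a subclass of ValueError.
            results[path.name] = str(error)
    return results
```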

3.8. Using the update Subcommand

The update subcommand supports updates of OpenIDM 4 for patches and migrations. For an example of this process, see "Updating OpenIDM" in the Installation Guide.

Chapter 4. OpenIDM Web-Based User Interfaces

OpenIDM provides a customizable, browser-based user interface. The functionality is subdivided into Administrative and Self-Service User Interfaces.

If you are administering OpenIDM, navigate to the Administrative User

Interface, also known as the Admin UI. If OpenIDM is installed on the

local system, you can get to the Admin UI at the following URL:

https://localhost:8443/admin. In the Admin UI, you

can configure connectors, customize managed objects, set up attribute

mappings, manage accounts, and more.

The Self-Service User Interface, also known as the Self-Service UI,

provides role-based access to tasks based on BPMN2 workflows, and

allows users to manage certain aspects of their own accounts, including

configurable self-service registration. When OpenIDM starts, you can access

the Self-Service UI at

https://localhost:8443/.

Warning

The default password for the OpenIDM administrative user,

openidm-admin, is openidm-admin.

To protect your deployment in production, change this password.

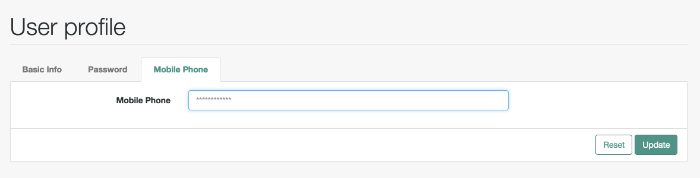

All users, including openidm-admin, can change their

password through the Self-Service UI. After you have logged in, click Change

Password.

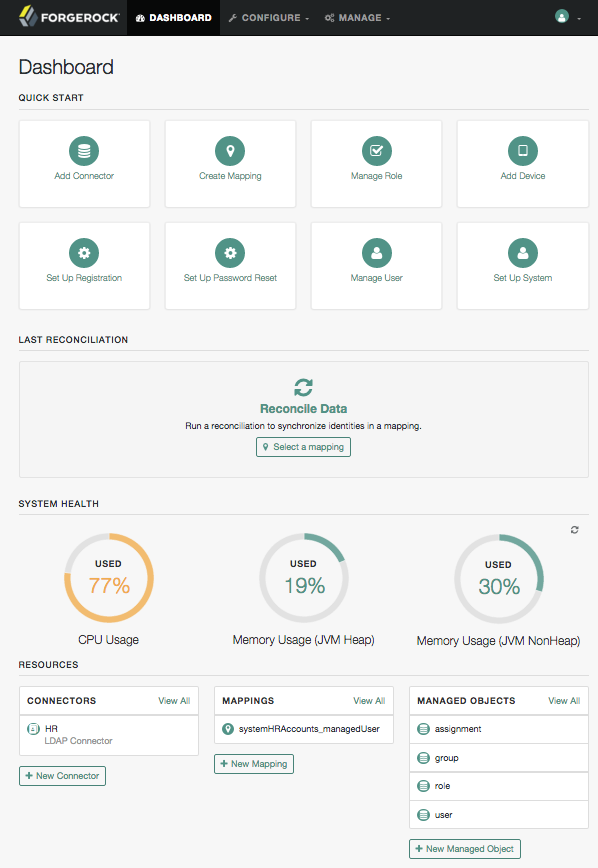

4.1. Configuring OpenIDM from the Admin UI

You can set up a basic configuration for OpenIDM with the Administrative User Interface (Admin UI).

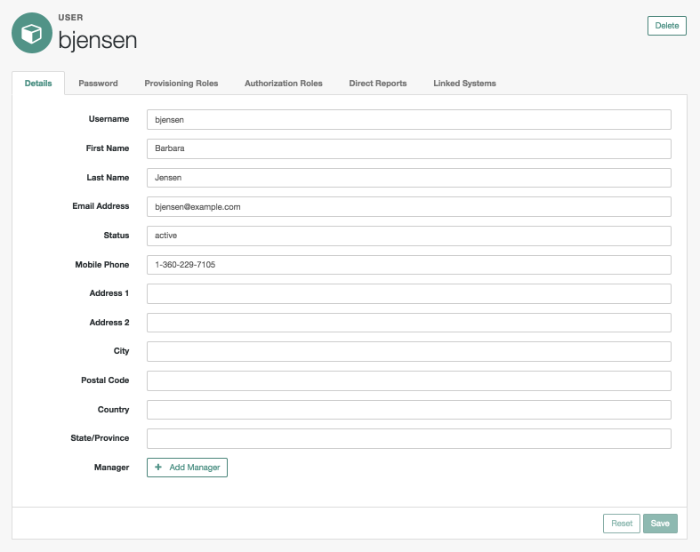

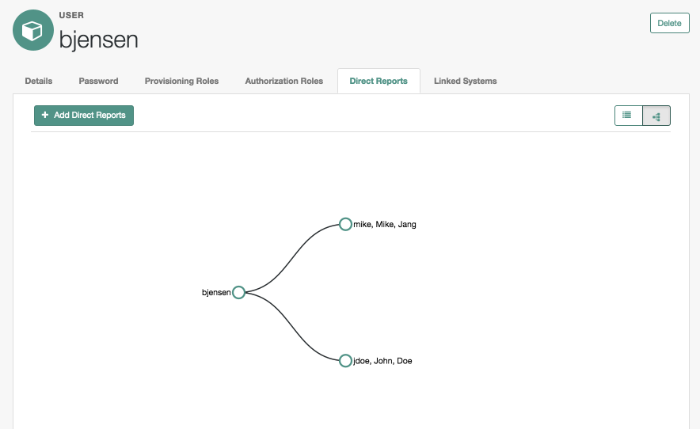

Through the Admin UI, you can connect to resources, configure attribute mapping and scheduled reconciliation, and set up and manage objects, such as users, groups, and devices.

When you log into the Admin UI, the first screen you should see is the Dashboard.

The Admin UI includes a fixed top menu bar. As you navigate around the Admin UI, you should see the same menu bar throughout. You can click the Dashboard link on the top menu bar to return to the Dashboard.

The Dashboard is split into four sections:

Quick Start cards support one-click access to common administrative tasks, and are described in detail in the following section.

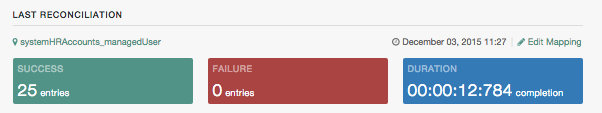

Last Reconciliation includes data from the most recent reconciliation between data stores. After you run a reconciliation, you should see data similar to:

System Health includes data on current CPU and memory usage.

Resources include an abbreviated list of configured connectors, mappings, and managed objects.

The Quick Start cards allow quick access to the labeled

configuration options, described here:

Add ConnectorUse the Admin UI to connect to external resources. For more information, see "Adding New Connectors from the Admin UI".

Create MappingConfigure synchronization mappings to map objects between resources. For more information, see "Configuring the Synchronization Mapping".

Manage RoleSet up managed provisioning or authorization roles. For more information, see "Working With Managed Roles".

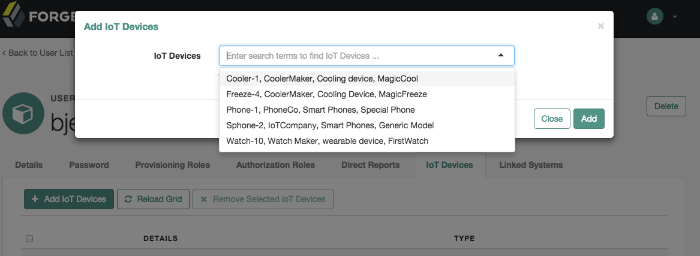

Add DeviceUse the Admin UI to set up managed objects, including users, groups, roles, or even Internet of Things (IoT) devices. For more information, see "Managing Accounts".

Set Up RegistrationConfigure User Self-Registration. You can set up the OpenIDM Self-Service UI login screen, with a link that allows new users to start a verified account registration process. For more information, see "Configuring User Self-Service".

Set Up Password ResetConfigure user self-service Password Reset. You can configure OpenIDM to allow users to reset forgotten passwords. For more information, see "Configuring User Self-Service".

Manage UserAllows management of users in the current internal OpenIDM repository. You may have to run a reconciliation from an external repository first. For more information, see "Working with Managed Users".

Set Up SystemConfigure how OpenIDM works, as it relates to:

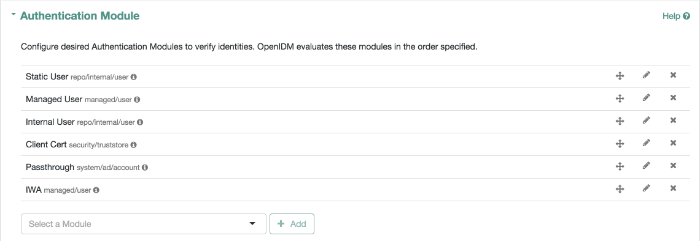

Authentication, as described in "Supported Authentication and Session Modules".

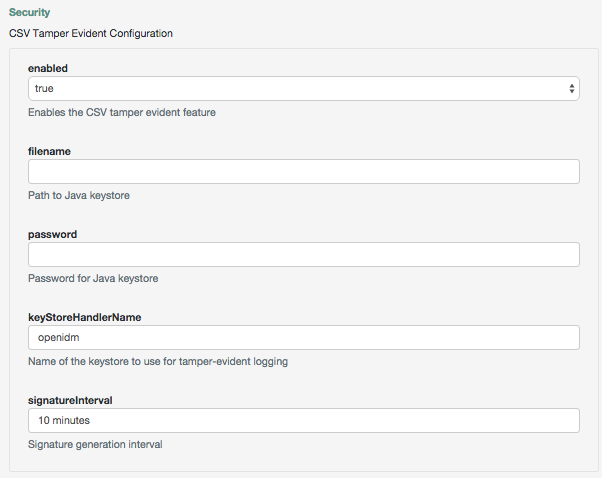

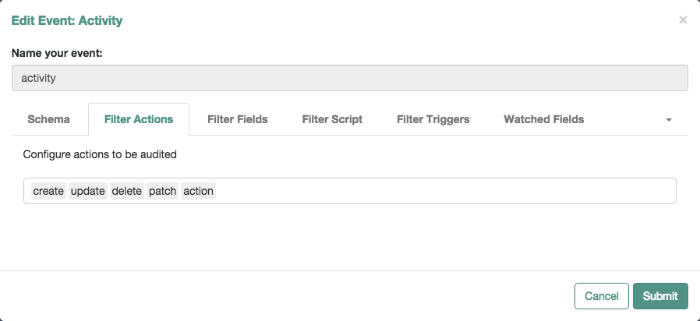

Audit, as described in "Using Audit Logs".

Self Service UI, as described in "Changing the UI Path".

Email, as described in "Sending Email".

Updates, as described in "Updating OpenIDM" in the Installation Guide.

You can configure more of OpenIDM than what is shown in the Quick Start cards. In the top menu bar, select the Configure and Manage drop-down menus and see what happens when you select each option.

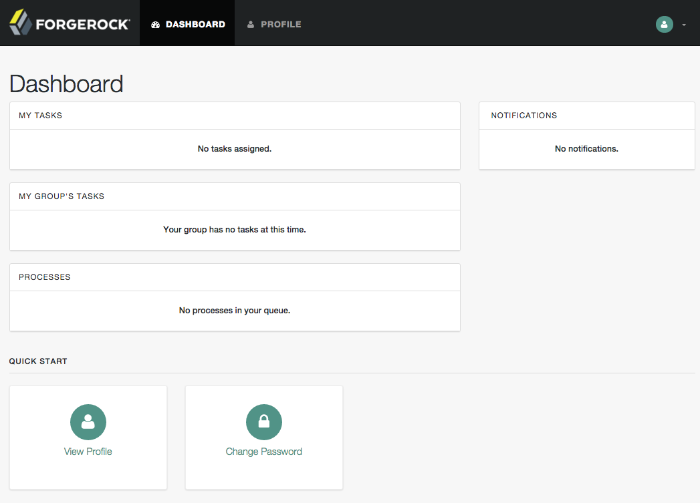

4.2. Working With the Self-Service UI

For all users, the Self-Service UI includes Dashboard and Profile links in the top menu bar.

To access the Self-Service UI, start OpenIDM, then navigate to https://localhost:8443/. If you have not installed a certificate that is trusted by a certificate authority, you are prompted with an Untrusted Connection warning the first time you log in to the UI.

The Dashboard includes a list of tasks assigned to the user who has logged in, tasks assigned to the relevant group, processes available to be invoked, and current notifications for that user, along with Quick Start cards for that user's profile and password.

For examples of these tasks, processes, and notifications, see "Workflow Samples" in the Samples Guide.

4.3. Configuring User Self-Service

The following sections describe how you can configure three functions of user self-service: User Registration, Forgotten Username, and Password Reset.

User Registration: You can configure limited access that allows anonymous users to create their own accounts. To aid in this process, you can configure reCAPTCHA, email validation, and KBA questions.

Forgotten Username: You can set up OpenIDM to allow users to recover forgotten usernames via their email addresses or first and last names. OpenIDM can then display that username on the screen, and / or email such information to that user.

Password Reset: You can set up OpenIDM to verify user identities via KBA questions. If email configuration is included, OpenIDM would email a link that allows users to reset their passwords.

If you enable email functionality, the one solution that works for all three self-service functions is to configure an outgoing email service for OpenIDM, as described in "Sending Email".

Note

If you disable email validation only for user registration, you should perform one of the following actions:

- Disable validation for mail in the managed user schema. Click Configure > Managed Objects > User > Schema. Under Schema Properties, click Mail, scroll down to Validation Policies, and set Required to false.

- Configure the User Registration template to support user email entries. To do so, use "Customizing the User Registration Page", and substitute mail for employeeNum.

Without these changes, users who try to register accounts will see a

Forbidden Request Error.

You can configure user self-service through the UI and through configuration files.

In the UI, log into the Admin UI. You can enable these features when you click Configure > User Registration, Configure > Forgotten Username, and Configure > Password Reset.

In the command-line interface, copy the following files from samples/misc to your working project-dir/conf directory:

User Registration: selfservice-registration.json

Forgotten username: selfservice-username.json

Password reset: selfservice-reset.json

Examine the ui-configuration.json file in the same directory. You can activate or deactivate User Registration and Password Reset by changing the value associated with the selfRegistration and passwordReset properties:

{

"configuration" : {

"selfRegistration" : true,

"passwordReset" : true,

"forgotUsername" : true,

...
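If you manage this file from a script, the change amounts to setting Boolean values under the configuration key. The following Python sketch applies such a change to an already parsed configuration and returns a modified copy. The function name is hypothetical, and the sketch knows only the three property names shown in the excerpt above.

```python
def set_self_service_flags(ui_config, **flags):
    """Return a copy of ui_config with the given self-service flags set."""
    allowed = {"selfRegistration", "passwordReset", "forgotUsername"}
    unknown = set(flags) - allowed
    if unknown:
        raise ValueError("unknown flag(s): " + ", ".join(sorted(unknown)))
    for name, value in flags.items():
        if not isinstance(value, bool):
            raise TypeError(name + " must be true or false")
    updated = dict(ui_config)
    # Copy the nested object so that the caller's data is not modified.
    updated["configuration"] = dict(ui_config.get("configuration", {}), **flags)
    return updated
```

For example, set_self_service_flags(config, passwordReset=False) deactivates Password Reset and leaves the other properties as they were.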

For each of these functions, you can configure several options, including:

- reCAPTCHA

Google reCAPTCHA helps prevent bots from registering users or resetting passwords on your system. For Google documentation, see Google reCAPTCHA. For directions on how to configure reCAPTCHA for user self-service, see "Configuring Google reCAPTCHA".

- Email Validation / Email Username

You can configure the email messages that OpenIDM sends to users, as a way to verify identities for user self-service. For more information, see "Configuring Self-Service Email Messages".

If you configure email validation, you must also configure an outgoing email service in OpenIDM. To do so, click Configure > System Preferences > Email. For more information, read "Sending Email".

- User Details

You can modify the Identity Email Field associated with user registration; by default, it is set to

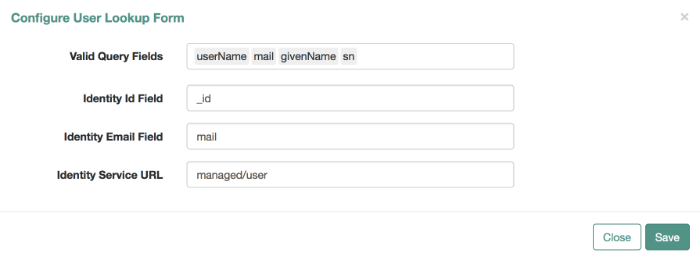

mail.- User Query

When configuring password reset and forgotten username functionality, you can modify the fields that a user is allowed to query. If you do, you may need to modify the HTML templates that appear to users who request such functionality. For more information, see "Modifying Valid Query Fields".

- Valid Query Fields

Property names that you can use to help users find their usernames or verify their identity, such as userName, mail, or givenName.

- Identity ID Field

Property name associated with the User ID, typically _id.

- Identity Email Field

Property name associated with the user email field, typically something like mail or email.

- Identity Service URL

The path associated with the identity data store, such as managed/user.

- KBA Stage

You can modify Knowledge-based Authentication (KBA) questions. Users can then select the questions of their choice to help verify their own identities. For directions on how to configure KBA questions, see "Configuring Self-Service Questions". For User Registration, you cannot configure these questions in the Admin UI.

- Registration Form / Password Reset Form

You can change the Identity Service URL for the target repository, to an entry such as managed/user.

- Password Reset Form

You can change the Identity Service URL for the target repository, to an entry such as managed/user. You can also cite the property associated with user passwords, such as password.

- Display Username

For forgotten username retrieval, you can configure OpenIDM to display the username on the website, instead of (or in addition to) sending that username to the associated email account.

- Snapshot Token

OpenIDM User Self-Service uses JWT tokens, with a default token lifetime of 1800 seconds.

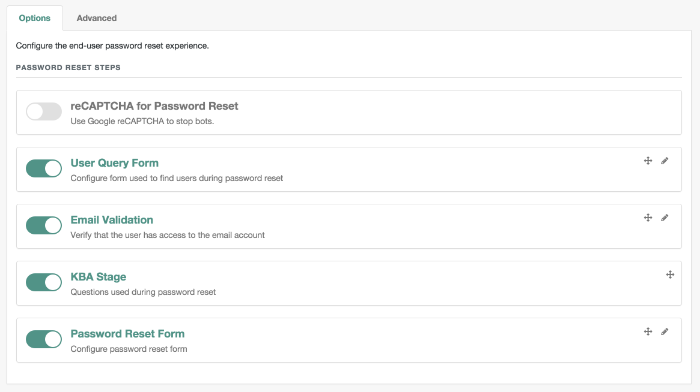

You can reorder how OpenIDM works with relevant self-service options, specifically reCAPTCHA, KBA stage questions, and email validation. Based on the following screen, users who need to reset their passwords will go through reCAPTCHA, followed by email validation, and then answer any configured KBA questions.

To reorder the steps, either "drag and drop" the options in the Admin UI, or change the sequence in the associated configuration file, in the project-dir/conf directory.
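As an illustration of changing the sequence in a configuration file, the following sketch reorders a stageConfigs list by stage name. It assumes that the order of entries in stageConfigs determines the order of the steps, which this guide implies but does not state outright. The stage names come from the excerpts in this chapter; the helper reorder_stages is hypothetical, and the stage entries are trimmed to their names.

```python
def reorder_stages(stage_configs, desired_order):
    """Return the stages sorted to match desired_order.

    Stages not named in desired_order keep their relative order and are
    placed at the end. Hypothetical helper, not part of OpenIDM.
    """
    by_name = {stage["name"]: stage for stage in stage_configs}
    missing = [name for name in desired_order if name not in by_name]
    if missing:
        raise ValueError(f"unknown stage(s): {missing}")
    ordered = [by_name[name] for name in desired_order]
    ordered += [s for s in stage_configs if s["name"] not in desired_order]
    return ordered


# Password reset flow as described above: reCAPTCHA, email validation, KBA.
stages = [
    {"name": "captcha"},
    {"name": "emailValidation"},
    {"name": "kbaSecurityAnswerVerificationStage"},
]

# Example change: ask the KBA questions before sending the email.
reordered = reorder_stages(
    stages, ["captcha", "kbaSecurityAnswerVerificationStage", "emailValidation"]
)
print([stage["name"] for stage in reordered])
```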

OpenIDM generates a token for each process. For example, users who forget both their usernames and passwords go through two steps:

- The user goes through the Forgotten Username process, gets a JWT token, and has the token lifetime (default = 1800 seconds) to get to the next step in the process.

- With username in hand, that user may then start the Password Reset process. That user gets a second JWT token, with the token lifetime configured for that process.
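To make the lifetime arithmetic concrete, the following sketch computes when a token issued at a given time stops being usable, assuming only the default of 1800 seconds cited above. It does not parse or validate real JWT tokens, and the function names are hypothetical.

```python
from datetime import datetime, timedelta, timezone

# Default token lifetime cited in this section: 1800 seconds (30 minutes).
DEFAULT_TOKEN_LIFETIME_SECONDS = 1800


def token_expiry(issued_at, lifetime_seconds=DEFAULT_TOKEN_LIFETIME_SECONDS):
    """Return the moment at which a token issued at issued_at expires."""
    return issued_at + timedelta(seconds=lifetime_seconds)


def is_within_lifetime(issued_at, now,
                       lifetime_seconds=DEFAULT_TOKEN_LIFETIME_SECONDS):
    """Return True while the user still has time to reach the next step."""
    return now < token_expiry(issued_at, lifetime_seconds)


issued = datetime(2016, 1, 1, 12, 0, 0, tzinfo=timezone.utc)
print(token_expiry(issued).isoformat())
print(is_within_lifetime(issued, issued + timedelta(minutes=29)))
print(is_within_lifetime(issued, issued + timedelta(minutes=31)))
```

Because each process gets its own token, a user who recovers a username and then resets a password has a separate lifetime window for each of the two processes.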

4.3.1. Common Configuration Details

This section describes configuration details common to both User Registration and Password Reset.

4.3.1.1. Configuring Self-Service Email Messages

When a user requests a new account, or a Password Reset, you can configure OpenIDM to send that user an email message, to confirm the request. That email can include a link that the user would select to continue the process.

You can configure that email message either through the UI or the associated configuration files, as illustrated in the following excerpt of the selfservice-registration.json file:

    {
        "stageConfigs" : [
            {
                "name" : "emailValidation",
                "identityEmailField" : "mail",
                "emailServiceUrl" : "external/email",
                "from" : "admin@example.net",
                "subject" : "Register new account",
                "mimeType" : "text/html",
                "subjectTranslations" : {
                    "en" : "Create a new username"
                },
                "messageTranslations" : {
                    "en" : "<h3>This is your confirmation email.</h3><h4><a href=\"%link%\">Click to continue</a></h4>"
                },
                "verificationLinkToken" : "%link%",
                "verificationLink" : "https://openidm.example.net:8443/#register/"
            }
            ...

Note the language entries in the subjectTranslations and messageTranslations code blocks. You can add translations for languages other than US English (en) and French (fr). Use the appropriate two-letter code based on ISO 639. End users will see the message in the language configured in their web browsers.
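The following sketch shows one way to think about how these properties fit together: pick the message for the user's language, then replace the verificationLinkToken placeholder with a link. This is an illustration only, not OpenIDM's implementation. The fallback to en when a language is missing is an assumption, the function render_message is hypothetical, and the link that OpenIDM actually sends may carry additional data that is not modeled here.

```python
# Values taken from the selfservice-registration.json excerpt above.
STAGE = {
    "name": "emailValidation",
    "messageTranslations": {
        "en": '<h3>This is your confirmation email.</h3>'
              '<h4><a href="%link%">Click to continue</a></h4>',
    },
    "verificationLinkToken": "%link%",
    "verificationLink": "https://openidm.example.net:8443/#register/",
}


def render_message(stage, browser_language, link, fallback_language="en"):
    """Return the message body for a language, with the link filled in.

    Assumption: when no translation exists for browser_language, the
    fallback language is used instead.
    """
    translations = stage["messageTranslations"]
    template = translations.get(browser_language,
                                translations[fallback_language])
    # Replace every occurrence of the placeholder token with the link.
    return template.replace(stage["verificationLinkToken"], link)


message = render_message(STAGE, "fr", STAGE["verificationLink"])
print(message)
```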

You can set up similar emails for password reset and forgotten username functionality, in the selfservice-reset.json and selfservice-username.json files. For templates, see the /path/to/openidm/samples/misc directory.

One difference between User Registration and Password Reset is in the "verificationLink"; for Password Reset, the corresponding URL is:

    ...
    "verificationLink" : "https://openidm.example.net:8443/#passwordReset/"
    ...

4.3.1.2. Configuring Google reCAPTCHA

To use Google reCAPTCHA, you will need a Google account and your domain name (RFC 2606-compliant URLs such as localhost and example.com are acceptable for test purposes). Google then provides a Site key and a Secret key that you can include in the self-service function configuration.

For example, you can add the following reCAPTCHA code block (with appropriate keys as defined by Google) into the selfservice-registration.json, selfservice-reset.json or the selfservice-username.json configuration files:

    {
        "stageConfigs" : [
            {
                "name" : "captcha",
                "recaptchaSiteKey" : "< Insert Site Key Here >",
                "recaptchaSecretKey" : "< Insert Secret Key Here >",
                "recaptchaUri" : "https://www.google.com/recaptcha/api/siteverify"
            },
            ...

You may also add the reCAPTCHA keys through the UI.
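To show what the recaptchaSecretKey and recaptchaUri values are for, the following sketch builds (but does not send) a verification request in the form that Google documents for the siteverify endpoint: an HTTP POST with secret and response parameters. How OpenIDM issues this request internally is not described in this guide, so treat this as an illustration. The key values and the function build_siteverify_request are hypothetical.

```python
from urllib.parse import urlencode
from urllib.request import Request

# Stage values modeled on the excerpt above; the keys are placeholders.
CAPTCHA_STAGE = {
    "name": "captcha",
    "recaptchaSiteKey": "hypothetical-site-key",
    "recaptchaSecretKey": "hypothetical-secret-key",
    "recaptchaUri": "https://www.google.com/recaptcha/api/siteverify",
}


def build_siteverify_request(stage, user_response_token):
    """Build the POST request that checks a reCAPTCHA response token.

    The request is only constructed here. Sending it requires network
    access and real keys issued by Google.
    """
    body = urlencode({
        "secret": stage["recaptchaSecretKey"],  # server-side secret key
        "response": user_response_token,        # token from the browser widget
    }).encode("ascii")
    return Request(stage["recaptchaUri"], data=body, method="POST")


request = build_siteverify_request(CAPTCHA_STAGE, "token-from-browser")
print(request.get_method(), request.full_url)
print(request.data.decode("ascii"))
```

The Site key is used on the browser side to render the widget, which is why only the Secret key appears in the server-side request.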

4.3.1.3. Configuring Self-Service Questions

OpenIDM uses Knowledge-based Authentication (KBA) to help users prove their identity when they perform the noted functions. In other words, they get a choice of questions configured in the following file: selfservice.kba.json.

The default version of this file is straightforward:

    {
        "questions" : {
            "1" : {
                "en" : "What's your favorite color?",
                "en_GB" : "What is your favourite colour?",
                "fr" : "Quelle est votre couleur préférée?"
            },
            "2" : {
                "en" : "Who was your first employer?"
            }
        }
    }

You may change or add the questions of your choice, in JSON format.
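The structure of this file maps each question ID to a set of translations keyed by locale. The following sketch looks up the text of a question for a given locale. The fallback order (exact locale, then base language, then en) is an assumption made for illustration and is not documented OpenIDM behavior; the function question_text is hypothetical.

```python
import json

# Content of the default KBA configuration file shown above.
KBA_TEXT = """
{
  "questions" : {
    "1" : {
      "en" : "What's your favorite color?",
      "en_GB" : "What is your favourite colour?",
      "fr" : "Quelle est votre couleur préférée?"
    },
    "2" : {
      "en" : "Who was your first employer?"
    }
  }
}
"""


def question_text(questions, question_id, locale, default_locale="en"):
    """Return the question text for a locale.

    Assumed fallback order: exact locale (en_GB), base language (en),
    then the default locale.
    """
    translations = questions[question_id]
    for candidate in (locale, locale.split("_")[0], default_locale):
        if candidate in translations:
            return translations[candidate]
    raise KeyError(f"question {question_id} has no usable translation")


questions = json.loads(KBA_TEXT)["questions"]
print(question_text(questions, "1", "en_GB"))
print(question_text(questions, "1", "fr_CA"))
print(question_text(questions, "2", "fr"))
```

A check like this can also help you spot questions that lack a translation for a language your users rely on, such as question 2 above, which is defined only in English.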

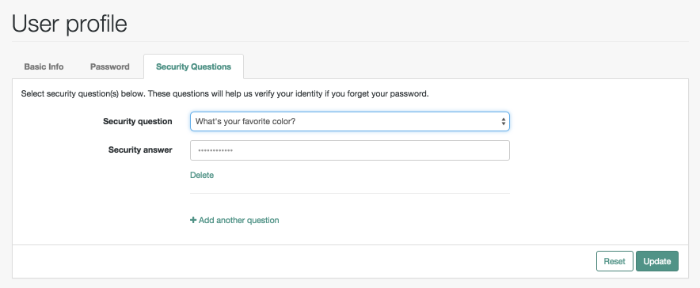

At this time, OpenIDM supports editing KBA questions only through the noted configuration file. However, individual users can configure their own questions and answers, during the User Registration process.

After a regular user logs into the Self-Service UI, that user can modify, add, and delete KBA questions under the Profile tab:

4.3.1.4. Setting a Minimum Number of Self-Service Questions

In addition, you can set a minimum number of questions that users have to define to register for their accounts. To do so, open the associated configuration file, selfservice-registration.json, in your project-dir/conf directory. Look for the code block that starts with kbaSecurityAnswerDefinitionStage:

    {
        "name" : "kbaSecurityAnswerDefinitionStage",
        "numberOfAnswersUserMustSet" : 1,
        "kbaConfig" : null
    },

In a similar fashion, you can set a minimum number of questions that users have to answer before OpenIDM allows them to reset their passwords. The associated configuration file is selfservice-reset.json, and the relevant code block is:

    {
        "name" : "kbaSecurityAnswerVerificationStage",
        "kbaPropertyName" : "kbaInfo",
        "identityServiceUrl" : "managed/user",
        "numberOfQuestionsUserMustAnswer" : "1",
        "kbaConfig" : null
    },

4.3.2. The End User and Commons User Self-Service

When all self-service features are enabled, OpenIDM includes three links on the self-service login page: Reset your password, Register, and Forgot Username?.

When the account registration page is used to create an account, OpenIDM normally creates a managed object in the OpenIDM repository, and applies default policies for managed objects.

4.4. Customizing a UI Template

You may want to customize information included in the Self-Service UI.

These procedures do not address actual data store requirements. If you add text boxes in the UI, it is your responsibility to set up associated properties in your repositories.

To do so, you should copy existing default template files in the openidm/ui/selfservice/default subdirectory to associated extension/ subdirectories.

To simplify the process, you can copy some or all of the content from the openidm/ui/selfservice/default/templates to the openidm/ui/selfservice/extension/templates directory.

You can use a similar process to modify what is shown in the Admin UI.
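The copy step described above can be sketched as follows. To stay self-contained, the example builds a throwaway directory tree that mimics openidm/ui/selfservice instead of touching a real installation. The function copy_default_templates is hypothetical, and the single template file it copies stands in for the full set of default templates.

```python
import shutil
import tempfile
from pathlib import Path


def copy_default_templates(selfservice_root):
    """Copy default/templates into extension/templates and return the target.

    Existing files in the extension directory with the same names are
    overwritten, so back up any customizations before running such a copy.
    """
    source = Path(selfservice_root) / "default" / "templates"
    target = Path(selfservice_root) / "extension" / "templates"
    shutil.copytree(source, target, dirs_exist_ok=True)  # Python 3.8 or later
    return target


# Demonstration against a temporary tree, not a real OpenIDM installation.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp) / "openidm" / "ui" / "selfservice"
    registration = root / "default" / "templates" / "user" / "process" / "registration"
    registration.mkdir(parents=True)
    (registration / "userDetails-initial.html").write_text(
        "<form></form>", encoding="utf-8"
    )

    target = copy_default_templates(root)
    copied = sorted(
        path.relative_to(target).as_posix() for path in target.rglob("*.html")
    )

print(copied)
```

Copying into the extension directory leaves the files under default untouched, so you can compare your customized templates against the originals later.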

4.4.1. Customizing User Self-Service Screens

In the following procedure, you will customize the screen that users see during the User Registration process. You can use a similar process to customize what a user sees during the Password Reset and Forgotten Username processes.

For user Self-Service features, you can customize options in three files. Navigate to the extension/templates/user/process subdirectory, and examine the following files:

- User Registration: registration/userDetails-initial.html
- Password Reset: reset/userQuery-initial.html
- Forgotten Username: username/userQuery-initial.html

The following procedure demonstrates the process for User Registration.

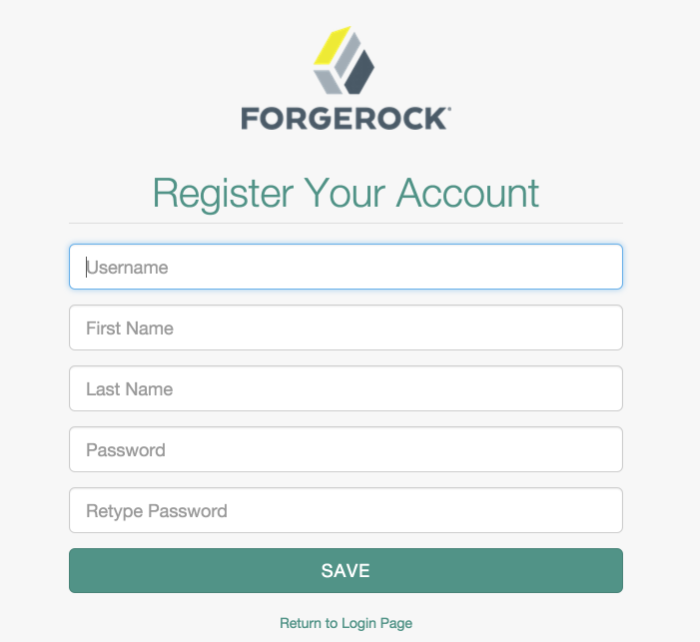

When you configure user self service, as described in "Configuring User Self-Service", anonymous users who choose to register will see a screen similar to:

The screen you see is from the following file:

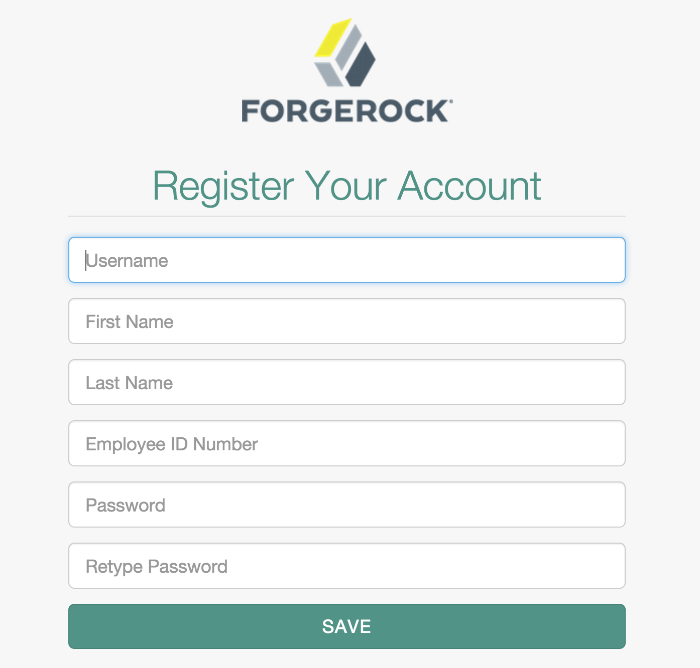

userDetails-initial.html, in theselfservice/extension/templates/user/process/registrationsubdirectory. Open that file in a text editor.Assume that you want new users to enter an employee ID number when they register.

Create a new