Guide to developing client applications, server extensions, and applications that embed servers by using ForgeRock® Directory Services software.

Preface

This guide shows you how to use ForgeRock® APIs to develop client applications, server extensions, and applications that embed servers.

In reading and following the instructions in this guide, you will learn how to:

Access directory services using REST APIs over HTTP

Access directory services using the LDAP command-line tools

Use LDAP schema

Work with standard LDAP groups and Directory Services-specific groups

Work with LDAP collective attributes and Directory Services virtual attributes

Work with LDAP referrals in search results

Develop custom directory service Java plugins

Embed the server in a Java application

ForgeRock Identity Platform™ serves as the basis for our simple and comprehensive Identity and Access Management solution. We help our customers deepen their relationships with their customers, and improve the productivity and connectivity of their employees and partners. For more information about ForgeRock and about the platform, see https://www.forgerock.com.

The ForgeRock Common REST API works across the platform to provide common ways to access web resources and collections of resources.

1. Using This Guide

This guide is intended for anyone developing applications that act as a client of directory services, or that embed or extend Directory Services software.

This guide is written with the expectation that you already have basic familiarity with the following topics:

Installing Directory Services software

If you are not yet familiar with Directory Services software installation, read the Installation Guide first.

Using command-line tools

LDAP and directory services

Basic server configuration

Some examples in this guide require server configuration steps.

HTTP, JavaScript Object Notation (JSON), and web applications

2. Accessing Documentation Online

ForgeRock publishes comprehensive documentation online:

The ForgeRock Knowledge Base offers a large and increasing number of up-to-date, practical articles that help you deploy and manage ForgeRock software.

While many articles are visible to community members, ForgeRock customers have access to much more, including advanced information for customers using ForgeRock software in a mission-critical capacity.

ForgeRock product documentation, such as this document, aims to be technically accurate and complete with respect to the software documented. It is visible to everyone and covers all product features and examples of how to use them.

3. Using the ForgeRock.org Site

The ForgeRock.org site has links to source code for ForgeRock open source software, as well as links to the ForgeRock forums and technical blogs.

If you are a ForgeRock customer, raise a support ticket instead of using the forums. ForgeRock support professionals will get in touch to help you.

Chapter 1. Understanding LDAP

This chapter introduces directory concepts and directory server features. In this chapter you will learn:

Why directory services exist and what they do well

How data is arranged in directories that support Lightweight Directory Access Protocol (LDAP)

How clients and servers communicate in LDAP

What operations are standard according to LDAP and how standard extensions to the protocol work

A directory resembles a dictionary or a phone book. If you know a word, you can look up its entry in the dictionary to learn its definition or its pronunciation. If you know a name, you can look up its entry in the phone book to find the telephone number and street address associated with the name. If you are bored, curious, or have lots of time, you can also read through the dictionary, phone book, or directory, entry after entry.

Where a directory differs from a paper dictionary or phone book is in how entries are indexed. Dictionaries typically have one index: words in alphabetical order. Phone books, too: names in alphabetical order. Directory entries, on the other hand, are often indexed for multiple attributes, such as names, user identifiers, email addresses, and telephone numbers. This means you can look up a directory entry by the name of the user the entry belongs to, but also by their user identifier, their email address, or their telephone number, for example.

1.1. How Directories and LDAP Evolved

Phone companies have been managing directories for many decades. The Internet itself has relied on distributed directory services like DNS since the mid-1980s.

It was not until the late 1980s, however, that experts from what is now the International Telecommunication Union published the X.500 set of international standards, including Directory Access Protocol. The X.500 standards specify Open Systems Interconnection (OSI) protocols and data definitions for general purpose directory services. The X.500 standards were designed to meet the needs of systems built according to the X.400 standards, covering electronic mail services.

Lightweight Directory Access Protocol (LDAP) has been around since the early 1990s. LDAP was originally developed as an alternative protocol that would allow directory access over Internet protocols rather than OSI protocols, and be lightweight enough for desktop implementations. By the mid-1990s, LDAP directory servers became generally available and widely used.

Until the late 1990s, LDAP directory servers were designed primarily with quick lookups and high availability for lookups in mind. LDAP directory servers replicate data, so when an update is made, that update is applied to other peer directory servers. Thus, if one directory server goes down, lookups can continue on other servers. Furthermore, if a directory service needs to support more lookups, the administrator can simply add another directory server to replicate with its peers.

As organizations rolled out larger and larger directories serving more and more applications, they discovered that they needed high availability not only for lookups, but also for updates. Around the year 2000, directories began to support multi-master replication; that is, replication with multiple read-write servers. Soon thereafter the organizations with the very largest directories started to need higher update performance as well as availability.

The DS code base began in the mid-2000s, when engineers solving the update performance issue decided the cost of adapting the existing C-based directory technology for high-performance updates would be higher than the cost of building a new, high-performance directory using Java technology.

1.2. About Data In LDAP Directories

LDAP directory data is organized into entries, similar to the entries for words in the dictionary, or for subscriber names in the phone book. A sample entry follows:

dn: uid=bjensen,ou=People,dc=example,dc=com
uid: bjensen
cn: Babs Jensen
cn: Barbara Jensen
facsimileTelephoneNumber: +1 408 555 1992
gidNumber: 1000
givenName: Barbara
homeDirectory: /home/bjensen
l: San Francisco
mail: bjensen@example.com
objectClass: inetOrgPerson
objectClass: organizationalPerson
objectClass: person
objectClass: posixAccount
objectClass: top
ou: People
ou: Product Development
roomNumber: 0209
sn: Jensen
telephoneNumber: +1 408 555 1862
uidNumber: 1076

Barbara Jensen's entry has a number of attributes, such as uid: bjensen, telephoneNumber: +1 408 555 1862, and objectClass: posixAccount. (The objectClass attribute type indicates which types of attributes are required and allowed for the entry. As the entry's object classes can be updated online, and even the definitions of object classes and attributes are expressed as entries that can be updated online, directory data is extensible on the fly.) When you look up her entry in the directory, you specify one or more attributes and values to match. The directory server then returns entries with attribute values that match what you specified.

The attributes you search for are indexed in the directory, so the directory server can retrieve them more quickly. (Attribute values do not have to be strings. Some attribute values, like certificates and photos, are binary.)

The entry also has a unique identifier, shown at the top of the entry, dn: uid=bjensen,ou=People,dc=example,dc=com. DN is an acronym for distinguished name. No two entries in the directory have the same distinguished name. Yet, DNs are typically composed of case-insensitive attributes.

Sometimes distinguished names include characters that you must escape. The following example shows an entry that includes escaped characters in the DN:

$ ldapsearch --port 1389 --baseDN dc=example,dc=com "(uid=escape)"
dn: cn=DN Escape Characters \" # \+ \, \; \< = \> \\,dc=example,dc=com
objectClass: person
objectClass: inetOrgPerson
objectClass: organizationalPerson
objectClass: top
givenName: DN Escape Characters
uid: escape
cn: DN Escape Characters " # + , ; < = > \
sn: " # + , ; < = > \
mail: escape@example.com
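As a rough illustration of the escaping seen in this DN, the following Python sketch escapes an attribute value before it is placed in a DN. The function name escape_dn_value is hypothetical. The rules applied are those of the RFC 4514 string representation: backslash-escape the characters " + , ; < > \ anywhere, a # or space at the start, and a space at the end. In a real application, prefer the DN-handling routines of your LDAP SDK.

```python
def escape_dn_value(value: str) -> str:
    """Escape an attribute value for use in a DN (RFC 4514 string form)."""
    always_escaped = '"+,;<>\\'
    last = len(value) - 1
    escaped = []
    for index, char in enumerate(value):
        if char in always_escaped:
            escaped.append("\\" + char)
        elif char == "\0":
            # The null character is written as a hex pair.
            escaped.append("\\00")
        elif char == "#" and index == 0:
            escaped.append("\\#")
        elif char == " " and index in (0, last):
            escaped.append("\\ ")
        else:
            escaped.append(char)
    return "".join(escaped)


# The cn value of the sample entry, before escaping.
cn = 'DN Escape Characters " # + , ; < = > \\'
print("cn=" + escape_dn_value(cn) + ",dc=example,dc=com")
```

Note that # and = inside the value are left alone, which agrees with the DN returned by the server in the example.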

LDAP entries are arranged hierarchically in the directory. The hierarchical organization resembles a file system on a PC or a web server, often imagined as an upside down tree structure, or a pyramid. The distinguished name consists of components separated by commas, uid=bjensen,ou=People,dc=example,dc=com. The names are little-endian. The components reflect the hierarchy of directory entries. "Directory Data" shows the hierarchy.

Barbara Jensen's entry is located under an entry with DN ou=People,dc=example,dc=com, an organizational unit and parent entry for the people at Example.com. The ou=People entry is located under the entry with DN dc=example,dc=com, the base entry for Example.com. DC is an acronym for domain component.
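To make the relationship between a DN and its parent concrete, here is a minimal Python sketch that splits a DN into its components and derives the parent DN. The helper names split_dn and parent_dn are hypothetical, and the sketch only handles backslash escapes; it does not normalize whitespace or split multi-valued components. Because the names are little-endian, the first component is the most specific one, and dropping it yields the parent.

```python
def split_dn(dn: str) -> list[str]:
    """Split a DN into its components at unescaped commas."""
    if not dn:
        return []
    components, current = [], []
    index = 0
    while index < len(dn):
        char = dn[index]
        if char == "\\" and index + 1 < len(dn):
            # Keep an escape sequence such as "\," intact.
            current.append(dn[index:index + 2])
            index += 2
            continue
        if char == ",":
            components.append("".join(current))
            current = []
        else:
            current.append(char)
        index += 1
    components.append("".join(current))
    return components


def parent_dn(dn: str) -> str:
    """Return the parent DN, or "" when the DN has a single component."""
    return ",".join(split_dn(dn)[1:])


print(split_dn("uid=bjensen,ou=People,dc=example,dc=com"))
print(parent_dn("uid=bjensen,ou=People,dc=example,dc=com"))
```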

The directory has other base entries, such as cn=config, under which the configuration is accessible through LDAP. A directory can serve multiple organizations, too. You might find dc=example,dc=com, dc=mycompany,dc=com, and o=myOrganization in the same LDAP directory. Therefore, when you look up entries, you specify the base DN to look under in the same way you need to know whether to look in the New York, Paris, or Tokyo phone book to find a telephone number. (The root entry for the directory, technically the entry with DN "" (the empty string), is called the root DSE. It contains information about what the server supports, including the other base DNs it serves.)

A directory server stores two kinds of attributes in a directory entry: user attributes and operational attributes. User attributes hold the information for users of the directory. All of the attributes shown in the entry at the outset of this section are user attributes. Operational attributes hold information used by the directory itself. Examples of operational attributes include entryUUID, modifyTimestamp, and subschemaSubentry.

When an LDAP search operation finds an entry in the directory, the directory server returns all the visible user attributes unless the search request restricts the list of attributes by specifying those attributes explicitly. The directory server does not, however, return any operational attributes unless the search request specifically asks for them.

Generally speaking, applications should change only user attributes, and leave updates of operational attributes to the server, relying on public directory server interfaces to change server behavior. An exception is access control instruction (aci) attributes, which are operational attributes used to control access to directory data.

1.3. About LDAP Client and Server Communication

You may be used to web service client-server communication, where each time the web client has something to request of the web server, a connection is set up and then torn down. LDAP has a different model. In LDAP the client application connects to the server and authenticates, then requests any number of operations, perhaps processing results in between requests, and finally disconnects when done.

The standard operations are as follows:

Bind (authenticate). The first operation in an LDAP session usually involves the client binding to the LDAP server with the server authenticating the client. Authentication establishes the client's identity in LDAP terms, the identity which is later used by the server to authorize (or not) access to directory data that the client wants to look up or change.

If the client does not bind explicitly, the server treats the client as an anonymous client. An anonymous client is allowed to do anything that can be done anonymously. What can be done anonymously depends on access control and configuration settings. The client can also bind again on the same connection.

Search (lookup). After binding, the client can request that the server return entries based on an LDAP filter, which is an expression that the server uses to find entries that match the request, and a base DN under which to search. For example, to look up all entries for people with email address bjensen@example.com in data for Example.com, you would specify a base DN such as ou=People,dc=example,dc=com and the filter (mail=bjensen@example.com).

Compare. After binding, the client can request that the server compare an attribute value the client specifies with the value stored on an entry in the directory.

Modify. After binding, the client can request that the server change one or more attribute values stored on an entry. Often administrators do not allow clients to change directory data, so request that your administrator set appropriate access rights for your client application if you want to update data.

Add. After binding, the client can request to add one or more new LDAP entries to the server.

Delete. After binding, the client can request that the server delete one or more entries. To delete an entry with other entries underneath, first delete the children, then the parent.

Modify DN. After binding, the client can request that the server change the distinguished name of the entry. In other words, this renames the entry or moves it to another location. For example, if Barbara changes her unique identifier from bjensen to something else, her DN would have to change. For another example, if you decide to consolidate ou=Customers and ou=Employees under ou=People instead, all the entries underneath must change distinguished names.

Renaming entire branches of entries can be a major operation for the directory, so avoid moving entire branches if you can.

Unbind. When done making requests, the client can request an unbind operation to end the LDAP session.

Abandon. When a request seems to be taking too long to complete, or when a search request returns many more matches than desired, the client can send an abandon request to the server to drop the operation in progress.

1.4. About LDAP Controls and Extensions

LDAP has standardized two mechanisms for extending the operations directory servers can perform beyond the basic operations listed above. One mechanism involves using LDAP controls. The other mechanism involves using LDAP extended operations.

LDAP controls are information added to an LDAP message to further specify how an LDAP operation should be processed. For example, the server-side sort request control modifies a search to request that the directory server return entries to the client in sorted order. The subtree delete request control modifies a delete to request that the server also remove child entries of the entry targeted for deletion.

The directory server can also send response controls in some cases to indicate that the response contains special information. Examples include responses for entry change notification, password policy, and paged results.

LDAP extended operations are additional LDAP operations not included in the original standard list. For example, the cancel extended operation works like an abandon operation, but finishes with a response from the server after the cancel is complete. The StartTLS extended operation allows a client to connect to a server on an insecure port, but then start Transport Layer Security negotiations to protect communications.

Both LDAP controls and extended operations are demonstrated later in this guide. DS servers support many LDAP controls and a few LDAP extended operations, including those demonstrated in this guide.

Chapter 2. Best Practices For Application Developers

Follow the advice in this chapter to write effective, maintainable, high-performance directory client applications.

2.1. Authenticate Correctly

Unless your application performs only read operations, authenticate to the directory server. Some directory services require authentication to read directory data.

Once you authenticate (bind), directory servers make authorization decisions based on your identity. With servers that support proxied authorization, once authenticated, your application can request an operation on behalf of another identity, for example, the identity of the end user.

Your application therefore should have an account used to authenticate, such as cn=My App,ou=Apps,dc=example,dc=com. The directory administrator can then authorize appropriate access for your application, and monitor your application's requests to help you troubleshoot problems if they arise.

Your application can use simple, password-based authentication. When using password-based authentication, also use secure connections to avoid sending the password as cleartext over the network. If you prefer to manage certificates rather than passwords, directory servers can manage certificate-based authentication as well.

2.2. Reuse Connections

LDAP is a stateful protocol. You authenticate (bind), you do stuff, you unbind. The server maintains a context that lets it make authorization decisions concerning your requests. You should therefore reuse connections when possible.

You can make multiple requests without having to set up a new connection and authenticate for every request. You can issue a request and get results asynchronously, while you issue another request. You can even share connections in a pool, avoiding the overhead of setting up and tearing down connections if you use them often.

2.3. Health Check Connections

In a network built for HTTP applications, your long-lived LDAP connections can get cut by network equipment configured to treat idle and even just old connections as stale resources to reclaim.

When you maintain a particularly long-lived connection such as a connection for a persistent search, periodically perform a health check to make sure nothing on the network quietly decided to drop your connection without notification. A health check might involve reading an attribute on a well-known entry in the directory.

2.4. Request Exactly What You Need All At Once

By the time your application makes it to production, you should know what attributes you want. Request them explicitly and request all the attributes in the same search.

2.5. Use Specific LDAP Filters

The difference between a general filter (mail=*@example.com) and a good, specific filter like (mail=user@example.com) can be huge numbers of entries and enormous amounts of processing time, both for the directory server that has to return search results, and also for your application that has to sort through the results. Many use cases can be handled with short, specific filters. As a rule, prefer equality filters over substring filters.

Some directory servers like DS servers reject unindexed searches by default, because unindexed searches are generally far more resource-intensive. If your application needs to use a filter that results in an unindexed search, then work with your directory administrator to find a solution, such as having the directory maintain the indexes required by your application.

Furthermore, always use & with ! to restrict the potential result set before returning all entries that do not match part of the filter. For example, (&(location=Oslo)(!(mail=birthday.girl@example.com))).
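One way to keep negated filters restricted is to build filter strings with small helpers. The Python sketch below composes the example filter from its parts. The helper names equals, all_of, and negate are hypothetical, and the values are assumed to be trusted constants; input taken from users needs escaping first, as discussed under "Handle Input Securely".

```python
def equals(attribute: str, value: str) -> str:
    """Build an equality filter such as (location=Oslo)."""
    return f"({attribute}={value})"


def all_of(*filters: str) -> str:
    """Combine filters with &, so that every one of them must match."""
    return "(&" + "".join(filters) + ")"


def negate(search_filter: str) -> str:
    """Negate a filter with !."""
    return "(!" + search_filter + ")"


# Restrict the result set first, then exclude the unwanted entry.
search_filter = all_of(
    equals("location", "Oslo"),
    negate(equals("mail", "birthday.girl@example.com")),
)
print(search_filter)
```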

2.6. Make Modifications Specific

When you modify attributes with multiple values, for example, when you modify a list of group members, replace or delete specific values individually, rather than replacing the entire list of values. Making modifications specific helps directory servers replicate your changes more effectively.
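A specific modification can be expressed in LDIF as a change record that deletes one value and adds another, leaving the remaining values untouched. The following Python sketch generates such a record. The helper name ldif_modify and the group DN are hypothetical, and the sketch assumes values that need no base64 encoding.

```python
def ldif_modify(dn: str, changes: list[tuple[str, str, list[str]]]) -> str:
    """Build an LDIF modify record from (operation, attribute, values) tuples.

    Assumes plain ASCII values that do not need base64 encoding.
    """
    lines = [f"dn: {dn}", "changetype: modify"]
    for operation, attribute, values in changes:
        lines.append(f"{operation}: {attribute}")
        lines.extend(f"{attribute}: {value}" for value in values)
        lines.append("-")
    return "\n".join(lines) + "\n"


# Remove one member and add another, without replacing the whole list.
print(ldif_modify(
    "cn=My Static Group,ou=Groups,dc=example,dc=com",  # hypothetical group
    [
        ("delete", "member", ["uid=bjensen,ou=People,dc=example,dc=com"]),
        ("add", "member", ["uid=kvaughan,ou=People,dc=example,dc=com"]),
    ],
))
```

By contrast, a single replace: member change would resend every value, including the ones that did not change.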

2.7. Trust Result Codes

Trust the LDAP result code that your application gets from the directory server. For example, if you request a modify operation and you get ResultCode.SUCCESS, then consider the operation a success rather than immediately issuing a search to get the modified entry.

The LDAP replication model is loosely convergent. In other words, the directory server can, and probably does, send you ResultCode.SUCCESS before replicating your change to every directory server instance across the network. If you issue a read immediately after a write, and a load balancer sends your request to another directory server instance, you could get a result that differs from what you expect.

The loosely convergent model means that the entry could have changed since you read it. If needed, use LDAP assertions to set conditions for your LDAP operations.

2.8. Handle Input Securely

When taking input directly from a user or another program, handle the input securely by using appropriate methods to sanitize the data.

Failure to sanitize the input data can leave your application vulnerable to injection attacks.
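For LDAP search filters, sanitizing typically means escaping the characters that have special meaning in a filter before inserting a user-supplied value. The Python sketch below follows the escaping rules of RFC 4515, where *, (, ), \ and the null character are replaced by a backslash and two hexadecimal digits. The function name escape_filter_value is hypothetical; if your LDAP SDK offers an equivalent routine, use that instead.

```python
def escape_filter_value(value: str) -> str:
    """Escape a value for safe use in an LDAP search filter (RFC 4515)."""
    replacements = {
        "\\": "\\5c",
        "*": "\\2a",
        "(": "\\28",
        ")": "\\29",
        "\0": "\\00",
    }
    return "".join(replacements.get(char, char) for char in value)


# Input crafted to widen the search is neutralized by escaping.
user_input = "*)(objectClass=*"
print(f"(mail={escape_filter_value(user_input)})")
```

Without escaping, the same input would produce the filter (mail=*)(objectClass=*), which no longer means what the application intended.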

2.9. Check Group Membership on the Account, Not the Group

If you need to determine which groups an account belongs to, request the DS virtual attribute, isMemberOf, when you read the account entry. Other directory servers use other names for this attribute that identifies the groups an account belongs to.

2.10. Ask the Directory Server What It Supports

Directory servers expose their capabilities, suffixes they support, and other information as attribute values on the root DSE.

This allows your application to discover a variety of information at run time, rather than storing configuration separately. Thus putting effort into querying the directory about its configuration and the features it supports can make your application easier to deploy and to maintain.

For example, rather than hard-coding dc=example,dc=com as a suffix DN in your configuration, you can search the root DSE on DS servers for namingContexts, and then search under the naming context DNs to locate the desired entries to initialize your configuration.

Directory servers also expose their schema over LDAP. The root DSE attribute subschemaSubentry shows the DN of the entry holding LDAP schema definitions. Note that LDAP object class and attribute type names are case-insensitive, so isMemberOf and ismemberof refer to the same attribute, for example.
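Because attribute type names are case-insensitive, client code that stores attributes in a map should not depend on the case the server happens to return. The following Python sketch shows one way to do that. The class name Attributes and the sample root DSE value are illustrative assumptions, and the sketch ignores attribute options.

```python
class Attributes:
    """Hold entry attributes and look them up by case-insensitive name."""

    def __init__(self, attributes: dict[str, list[str]]):
        # Normalize names once, when the entry is stored.
        self._values = {name.lower(): values for name, values in attributes.items()}

    def get(self, name: str) -> list[str]:
        """Return the values for the named attribute, or an empty list."""
        return self._values.get(name.lower(), [])


# Illustrative root DSE content, not output captured from a server.
root_dse = Attributes({"namingContexts": ["dc=example,dc=com"]})
print(root_dse.get("namingcontexts"))
```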

2.11. Store Large Attribute Values By Reference

When you use large attribute values such as photos or audio messages, consider storing the objects themselves elsewhere and keeping only a reference to external content on directory entries. In order to serve results quickly with high availability, directory servers both cache content and also replicate it everywhere.

Textual entries with a bunch of attributes and perhaps a certificate are often no larger than a few KB. Your directory administrator might therefore be disappointed to learn that your popular application stores users' photo and .mp3 collections as attributes of their accounts.

2.12. Take Care With Persistent Search and Server-Side Sorting

A persistent search lets your application receive updates from the server as they happen by keeping the connection open and forcing the server to check whether to return additional results any time it performs a modification in the scope of your search. Directory administrators therefore might hesitate to grant persistent search access to your application. Directory servers like DS servers can let you discover updates with less overhead by searching the change log periodically. If you do have to use a persistent search instead, try to narrow the scope of your search.

Directory servers also support a resource-intensive operation called server-side sorting. When your application requests a server-side sort, the directory server retrieves all the entries matching your search, and then returns the whole set of entries in sorted order. For result sets of any size, server-side sorting therefore ties up server resources that could be used elsewhere. Alternatives include both sorting the results after your application receives them, and working with the directory administrator to enable appropriate browsing (virtual list view) indexes on the directory server for applications that must regularly page through long lists of search results.

2.13. Reuse Schemas Where Possible

Directory servers like DS servers come with schema definitions for a wide range of standard object classes and attribute types. This is because directories are designed to be shared by many applications. Directories use unique, typically IANA-registered object identifiers (OID) to avoid object class and attribute type name clashes. The overall goal is Internet-wide interoperability.

You therefore should reuse schema definitions that already exist whenever you reasonably can. Reuse them as is. Do not try to redefine existing schema definitions.

If you must add schema definitions for your application, extend existing object classes with AUXILIARY classes of your own. Take care to name your definitions such that they do not clash with other names.

When you have defined schema required for your application, work with the directory administrator to add your definitions to the directory service. Directory servers like DS servers let directory administrators update schema definitions over LDAP, so there is not generally a need to interrupt the service to add your application. Directory administrators can, however, have other reasons why they hesitate to add your schema definitions. Coming to the discussion prepared with good schema definitions, explanations of why they should be added, and evident regard for interoperability makes it easier for the directory administrator to grant your request.

2.14. Handle Referrals

When a directory server returns a search result, the result is not necessarily an entry. If the result is a referral, then your application should follow up with an additional search based on the URIs provided in the result.
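Referral URIs are normally LDAP URLs of the form ldap://host:port/baseDN?attributes?scope?filter, as defined in RFC 4516. As a sketch of the first step in following a referral, the Python function below breaks such a URL into the pieces needed for the follow-up search. The function name parse_ldap_url and the host ds2.example.com are hypothetical, and URL extensions are ignored.

```python
from urllib.parse import unquote, urlsplit


def parse_ldap_url(url: str) -> dict:
    """Split an LDAP URL into the parts needed for a follow-up search."""
    parts = urlsplit(url)
    if parts.scheme not in ("ldap", "ldaps"):
        raise ValueError(f"Not an LDAP URL: {url}")
    # After the DN come ?attributes?scope?filter, all of them optional.
    fields = parts.query.split("?") + ["", "", ""]
    attributes, scope, search_filter = (unquote(field) for field in fields[:3])
    return {
        "host": parts.hostname,
        "port": parts.port,
        "base_dn": unquote(parts.path.lstrip("/")),
        "attributes": attributes.split(",") if attributes else [],
        # RFC 4516 defaults when the URL omits these parts.
        "scope": scope or "base",
        "filter": search_filter or "(objectClass=*)",
    }


print(parse_ldap_url(
    "ldap://ds2.example.com:1389/ou=People,dc=example,dc=com??sub?(uid=bjensen)"
))
```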

2.15. Troubleshooting: Check Result Codes

LDAP result codes are standard and clearly defined, and listed in "LDAP Result Codes". When your application receives a result, it must check the result code value to determine what action to take. When the result is not what you expect, read or at least log the additional message information.
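As a small illustration of checking and logging results, the Python sketch below maps a few of the numeric result codes defined in RFC 4511 to their names and formats a log message that includes the additional message from the server. The names RESULT_CODES and describe_result are hypothetical, the table is deliberately incomplete, and the diagnostic text in the example is made up.

```python
# A few of the result codes defined in RFC 4511 (not a complete list).
RESULT_CODES = {
    0: "success",
    3: "timeLimitExceeded",
    4: "sizeLimitExceeded",
    10: "referral",
    32: "noSuchObject",
    49: "invalidCredentials",
    50: "insufficientAccessRights",
    53: "unwillingToPerform",
    68: "entryAlreadyExists",
}


def describe_result(code: int, diagnostic_message: str = "") -> str:
    """Format a result code and optional server message for logging."""
    name = RESULT_CODES.get(code, "unknown")
    text = f"LDAP result {code} ({name})"
    return f"{text}: {diagnostic_message}" if diagnostic_message else text


print(describe_result(0))
print(describe_result(50, "example diagnostic text"))  # made-up message
```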

2.16. Troubleshooting: Check Server Log Files

If you can read the directory server access log, then you can check what the server did with your application's request. For example, the following access log excerpt shows a successful search by cn=My App,ou=Apps,dc=example,dc=com. Each record in the log is a JSON object on a single line:

{"eventName":"DJ-LDAP","client":{"ip":"127.0.0.1","port":59891},"server":{"ip":"127.0.0.1","port":1389},"request":{"protocol":"LDAP","operation":"CONNECT","connId":0},"transactionId":"0","response":{"status":"SUCCESSFUL","statusCode":"0","elapsedTime":0,"elapsedTimeUnits":"MILLISECONDS"},"timestamp":"2016-10-20T15:48:36.933Z","_id":"11d5fdaf-79ac-4677-a640-805db1c35af0-3"}

{"eventName":"DJ-LDAP","client":{"ip":"127.0.0.1","port":59891},"server":{"ip":"127.0.0.1","port":1389},"request":{"protocol":"LDAP","operation":"EXTENDED","connId":0,"msgId":1,"name":"StartTLS","oid":"1.3.6.1.4.1.1466.20037"},"transactionId":"0","response":{"status":"SUCCESSFUL","statusCode":"0","elapsedTime":3,"elapsedTimeUnits":"MILLISECONDS"},"timestamp":"2016-10-20T15:48:36.945Z","_id":"11d5fdaf-79ac-4677-a640-805db1c35af0-5"}

{"eventName":"DJ-LDAP","client":{"ip":"127.0.0.1","port":59891},"server":{"ip":"127.0.0.1","port":1389},"request":{"protocol":"LDAP","operation":"BIND","connId":0,"msgId":2,"version":"3","authType":"Simple","dn":"cn=My App,ou=Apps,dc=example,dc=com"},"transactionId":"0","response":{"status":"SUCCESSFUL","statusCode":"0","elapsedTime":6,"elapsedTimeUnits":"MILLISECONDS"},"userId":"cn=My App,ou=Apps,dc=example,dc=com","timestamp":"2016-10-20T15:48:38.462Z","_id":"11d5fdaf-79ac-4677-a640-805db1c35af0-7"}

{"eventName":"DJ-LDAP","client":{"ip":"127.0.0.1","port":59891},"server":{"ip":"127.0.0.1","port":1389},"request":{"protocol":"LDAP","operation":"SEARCH","connId":0,"msgId":3,"dn":"dc=example,dc=com","scope":"sub","filter":"(uid=kvaughan)","attrs":["isMemberOf"]},"transactionId":"0","response":{"status":"SUCCESSFUL","statusCode":"0","elapsedTime":6,"elapsedTimeUnits":"MILLISECONDS","nentries":1},"timestamp":"2016-10-20T15:48:38.472Z","_id":"11d5fdaf-79ac-4677-a640-805db1c35af0-9"}

{"eventName":"DJ-LDAP","client":{"ip":"127.0.0.1","port":59891},"server":{"ip":"127.0.0.1","port":1389},"request":{"protocol":"LDAP","operation":"UNBIND","connId":0,"msgId":4},"transactionId":"0","timestamp":"2016-10-20T15:48:38.480Z","_id":"11d5fdaf-79ac-4677-a640-805db1c35af0-11"}

{"eventName":"DJ-LDAP","client":{"ip":"127.0.0.1","port":59891},"server":{"ip":"127.0.0.1","port":1389},"request":{"protocol":"LDAP","operation":"DISCONNECT","connId":0},"transactionId":"0","response":{"status":"SUCCESSFUL","statusCode":"0","elapsedTime":0,"elapsedTimeUnits":"MILLISECONDS","reason":"Client Unbind"},"timestamp":"2016-10-20T15:48:38.481Z","_id":"11d5fdaf-79ac-4677-a640-805db1c35af0-13"}

Notice that each operation type is shown in upper case. The messages track the client information, and the connection ID (connId) and message ID (msgId) numbers for filtering messages. The elapsedTime indicates how many milliseconds the DS server worked on the request. Result code 0 corresponds to a successful result, as described in RFC 4511.
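Because each record is a JSON object, standard tools can filter the log. The Python sketch below selects the records for one connection and lists the operation, message ID, status code, and elapsed time of each. The records are abbreviated versions of the excerpt above, with most fields removed for brevity, and the function name summarize is hypothetical.

```python
import json

# Abbreviated records based on the excerpt above (most fields removed).
LOG_LINES = [
    '{"request":{"operation":"BIND","connId":0,"msgId":2},'
    '"response":{"status":"SUCCESSFUL","statusCode":"0","elapsedTime":6}}',
    '{"request":{"operation":"SEARCH","connId":0,"msgId":3,"filter":"(uid=kvaughan)"},'
    '"response":{"status":"SUCCESSFUL","statusCode":"0","elapsedTime":6,"nentries":1}}',
    '{"request":{"operation":"UNBIND","connId":0,"msgId":4}}',
]


def summarize(lines, conn_id):
    """Return (operation, msgId, statusCode, elapsedTime) for one connection."""
    summary = []
    for line in lines:
        record = json.loads(line)
        request = record.get("request", {})
        if request.get("connId") != conn_id:
            continue
        # Some records, such as UNBIND, carry no response object.
        response = record.get("response", {})
        summary.append((
            request.get("operation"),
            request.get("msgId"),
            response.get("statusCode"),
            response.get("elapsedTime"),
        ))
    return summary


for row in summarize(LOG_LINES, conn_id=0):
    print(row)
```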

2.17. Troubleshooting: Inspect Network Traffic

If result codes and server logs are not enough, many network tools can interpret HTTP and LDAP packets. Install the necessary keys to decrypt encrypted packet content.

Chapter 3. Performing RESTful Operations

DS software lets you access directory data as JSON resources over HTTP. DS software maps JSON resources onto LDAP entries. As a result, REST clients perform many of the same operations as LDAP clients with directory data.

This chapter demonstrates RESTful client operations by using the default configuration and sample directory data imported into a directory server, as described in "To Import LDIF Data" in the Administration Guide, from the LDIF file Example.ldif.

In this chapter, you will learn how to use the DS REST API that provides access to directory data over HTTP.

Before trying the examples, enable HTTP access to the DS server as described in "To Set Up REST Access to User Data" in the Administration Guide. The examples in this chapter use HTTP, but the procedure also shows how to set up HTTPS access to the server.

Interface stability: Evolving (See "ForgeRock Product Interface Stability" in the Release Notes.)

The DS REST API is built on a common ForgeRock HTTP-based REST API for interacting with JSON Resources. All APIs built on this common layer let you perform the following operations.

3.1. About ForgeRock Common REST

ForgeRock® Common REST is a common REST API framework. It works across the ForgeRock platform to provide common ways to access web resources and collections of resources. Adapt the examples in this section to your resources and deployment.

3.1.1. Common REST Resources

Servers generally return JSON-format resources, though resource formats can depend on the implementation.

Resources in collections can be found by their unique identifiers (IDs).

IDs are exposed in the resource URIs.

For example, if a server has a user collection under /users,

then you can access a user at

/users/user-id.

The ID is also the value of the _id field of the resource.

Resources are versioned using revision numbers.

A revision is specified in the resource's _rev field.

Revisions make it possible to figure out whether to apply changes

without resource locking and without distributed transactions.

3.1.2. Common REST Verbs

The Common REST APIs use the following verbs, sometimes referred to collectively as CRUDPAQ. For details and HTTP-based examples of each, follow the links to the sections for each verb.

- Create

Add a new resource.

This verb maps to HTTP PUT or HTTP POST.

For details, see "Create".

- Read

Retrieve a single resource.

This verb maps to HTTP GET.

For details, see "Read".

- Update

Replace an existing resource.

This verb maps to HTTP PUT.

For details, see "Update".

- Delete

Remove an existing resource.

This verb maps to HTTP DELETE.

For details, see "Delete".

- Patch

Modify part of an existing resource.

This verb maps to HTTP PATCH.

For details, see "Patch".

- Action

Perform a predefined action.

This verb maps to HTTP POST.

For details, see "Action".

- Query

Search a collection of resources.

This verb maps to HTTP GET.

For details, see "Query".

3.1.3. Common REST Parameters

Common REST reserved query string parameter names start with an underscore, _.

Reserved query string parameters include, but are not limited to, the following names:

_action

_api

_crestapi

_fields

_mimeType

_pageSize

_pagedResultsCookie

_pagedResultsOffset

_prettyPrint

_queryExpression

_queryFilter

_queryId

_sortKeys

_totalPagedResultsPolicy

Note

Some parameter values are not safe for URLs, so URL-encode parameter values as necessary.

Continue reading for details about how to use each parameter.

3.1.4. Common REST Extension Points

The action verb is the main vehicle for extensions.

For example, to create a new user with HTTP POST rather than HTTP PUT,

you might use /users?_action=create.

A server can define additional actions.

For example, /tasks/1?_action=cancel.

A server can define stored queries to call by ID.

For example, /groups?_queryId=hasDeletedMembers.

Stored queries can call for additional parameters.

The parameters are also passed in the query string.

Which parameters are valid depends on the stored query.

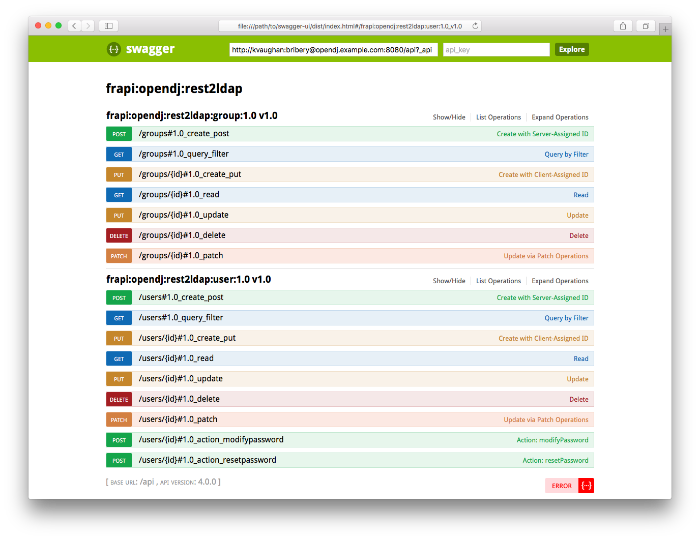

3.1.5. Common REST API Documentation

Common REST APIs often depend at least in part on runtime configuration. Many Common REST endpoints therefore serve API descriptors at runtime. An API descriptor documents the actual API as it is configured.

Use the following query string parameters to retrieve API descriptors:

_api

Serves an API descriptor that complies with the OpenAPI specification.

This API descriptor represents the API accessible over HTTP. It is suitable for use with popular tools such as Swagger UI.

_crestapi

Serves a native Common REST API descriptor.

This API descriptor provides a compact representation that is not dependent on the transport protocol. It requires a client that understands Common REST, as it omits many Common REST defaults.

Note

Consider limiting access to API descriptors in production environments in order to avoid unnecessary traffic.

To provide documentation in production environments, see "To Publish OpenAPI Documentation" instead.

In production systems, developers expect stable, well-documented APIs. Rather than retrieving API descriptors at runtime through Common REST, prepare final versions, and publish them alongside the software in production.

Use the OpenAPI-compliant descriptors to provide API reference documentation for your developers as described in the following steps:

Configure the software to produce production-ready APIs.

In other words, the software should be configured as in production so that the APIs are identical to what developers see in production.

Retrieve the OpenAPI-compliant descriptor.

The following command saves the descriptor to a file, myapi.json:

$ curl -o myapi.json endpoint?_api

(Optional) If necessary, edit the descriptor.

For example, you might want to add security definitions to describe how the API is protected.

If you make any changes, then also consider using a source control system to manage your versions of the API descriptor.

Publish the descriptor using a tool such as Swagger UI.

You can customize Swagger UI for your organization as described in the documentation for the tool.
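The optional editing step above can be scripted. The following is a minimal sketch, assuming the descriptor uses the OpenAPI 2.0 (Swagger) format, where security schemes are declared under securityDefinitions. The function name add_basic_auth and the trimmed descriptor are hypothetical; in practice you would load the content of myapi.json instead.

```python
import copy
import json


def add_basic_auth(descriptor):
    """Return a copy of the descriptor that declares HTTP Basic authentication."""
    updated = copy.deepcopy(descriptor)
    # "securityDefinitions" and "security" are OpenAPI 2.0 (Swagger) keywords.
    updated.setdefault("securityDefinitions", {})["basicAuth"] = {"type": "basic"}
    updated.setdefault("security", []).append({"basicAuth": []})
    return updated


# Hypothetical, heavily trimmed stand-in for the content of myapi.json.
descriptor = {
    "swagger": "2.0",
    "info": {"title": "Example API", "version": "1.0"},
    "paths": {},
}
published = add_basic_auth(descriptor)
print(json.dumps(published, indent=2))
```

The function works on a copy, so the descriptor as retrieved stays available for comparison under source control.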

3.1.6. Create

There are two ways to create a resource, either with an HTTP POST or with an HTTP PUT.

To create a resource using POST, perform an HTTP POST

with the query string parameter _action=create

and the JSON resource as a payload.

Accept a JSON response.

The server creates the identifier if not specified:

POST /users?_action=create HTTP/1.1

Host: example.com

Accept: application/json

Content-Length: ...

Content-Type: application/json

{ JSON resource }

To create a resource using PUT, perform an HTTP PUT

including the case-sensitive identifier for the resource in the URL path,

and the JSON resource as a payload.

Use the If-None-Match: * header.

Accept a JSON response:

PUT /users/some-id HTTP/1.1

Host: example.com

Accept: application/json

Content-Length: ...

Content-Type: application/json

If-None-Match: *

{ JSON resource }

The _id and content of the resource depend on the server implementation.

The server is not required to use the _id that the client provides.

The server response to the create request indicates the resource location

as the value of the Location header.

If you include the If-None-Match header, its value must be

*. In this case, the request creates the object if it

does not exist, and fails if the object does exist. If you include the

If-None-Match header with any value other than

*, the server returns an HTTP 400 Bad Request error. For

example, creating an object with

If-None-Match: revision returns

a bad request error. If you do not include If-None-Match: *,

the request creates the object if it does not exist, and

updates the object if it does exist.
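The two ways to create a resource can be sketched with the Python standard library. This is an illustration rather than a reference client: BASE_URL and both function names are hypothetical, and the requests are only built, not sent.

```python
import json
from urllib.parse import quote
from urllib.request import Request

BASE_URL = "http://example.com"  # hypothetical server, as in the requests above
JSON_HEADERS = {"Accept": "application/json", "Content-Type": "application/json"}


def create_with_post(collection, resource):
    """Build a POST with _action=create; the server chooses the identifier."""
    body = json.dumps(resource).encode("utf-8")
    url = f"{BASE_URL}{collection}?_action=create"
    return Request(url, data=body, headers=dict(JSON_HEADERS), method="POST")


def create_with_put(collection, resource_id, resource):
    """Build a PUT that creates the resource and fails if it already exists."""
    body = json.dumps(resource).encode("utf-8")
    # If-None-Match: * turns the PUT into create-only instead of create-or-update.
    headers = dict(JSON_HEADERS, **{"If-None-Match": "*"})
    url = f"{BASE_URL}{collection}/{quote(resource_id, safe='')}"
    return Request(url, data=body, headers=headers, method="PUT")


post = create_with_post("/users", {"displayName": "New User"})
put = create_with_put("/users", "some-id", {"displayName": "New User"})
print(post.get_method(), post.full_url)
print(put.get_method(), put.full_url, put.get_header("If-none-match"))
```

Passing either request to urllib.request.urlopen() would send it. The resource location would then be available in the Location header of the response.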

You can use the following parameters:

_prettyPrint=true

Format the body of the response.

_fields=field[,field...]

Return only the specified fields in the body of the response.

The field values are JSON pointers. For example if the resource is {"parent":{"child":"value"}}, parent/child refers to the "child":"value".

If the field is left blank, the server returns all default values.
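To make the JSON pointer notation concrete, the following sketch resolves a pointer such as parent/child against a resource held in memory. The helper resolve_pointer is hypothetical and deliberately simplified: it handles nested objects only, not array indexes or escaped characters.

```python
def resolve_pointer(resource, pointer):
    """Return the value that a simple JSON pointer such as parent/child refers to."""
    value = resource
    # Walk one path segment at a time, ignoring an optional leading slash.
    for token in pointer.strip("/").split("/"):
        value = value[token]
    return value


resource = {"parent": {"child": "value"}, "sibling": "other"}
fields = ["parent/child"]

# The query string parameter a client would send to request only this field.
print("_fields=" + ",".join(fields))
print(resolve_pointer(resource, fields[0]))
```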

3.1.7. Read

To retrieve a single resource, perform an HTTP GET on the resource

by its case-sensitive identifier (_id)

and accept a JSON response:

GET /users/some-id HTTP/1.1
Host: example.com
Accept: application/json

You can use the following parameters:

_prettyPrint=true

Format the body of the response.

_fields=field[,field...]

Return only the specified fields in the body of the response.

The field values are JSON pointers. For example if the resource is {"parent":{"child":"value"}}, parent/child refers to the "child":"value".

If the field is left blank, the server returns all default values.

_mimeType=mime-type

Some resources have fields whose values are multi-media resources such as a profile photo for example.

By specifying both a single field and also the mime-type for the response content, you can read a single field value that is a multi-media resource.

In this case, the content type of the field value returned matches the mime-type that you specify, and the body of the response is the multi-media resource.

The Accept header is not used in this case. For example, Accept: image/png does not work. Use the _mimeType query string parameter instead.

3.1.8. Update

To update a resource, perform an HTTP PUT

including the case-sensitive identifier (_id)

as the final element of the path to the resource,

and the JSON resource as the payload.

Use the If-Match: _rev header

to check that you are actually updating the version you modified.

Use If-Match: * if the version does not matter.

Accept a JSON response:

PUT /users/some-id HTTP/1.1

Host: example.com

Accept: application/json

Content-Length: ...

Content-Type: application/json

If-Match: _rev

{ JSON resource }

When updating a resource, include all the attributes to be retained. Omitting an attribute in the resource amounts to deleting the attribute unless it is not under the control of your application. Attributes not under the control of your application include private and read-only attributes. In addition, virtual attributes and relationship references might not be under the control of your application.
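Because omitted attributes are deleted, one cautious pattern is to start from the resource as last read, change only what you need, and send the whole result back with the revision you read. The following sketch shows that pattern under stated assumptions: prepare_update and the sample resource are hypothetical, and read-only fields such as _id and _rev are sent back unmodified.

```python
import json


def prepare_update(current, changes):
    """Return (headers, body) for a PUT that keeps every attribute not in changes."""
    updated = dict(current)   # start from everything the server returned
    updated.update(changes)   # overwrite or add only the changed attributes
    headers = {
        "Accept": "application/json",
        "Content-Type": "application/json",
        # The update fails if the resource changed since it was read.
        "If-Match": current["_rev"],
    }
    return headers, json.dumps(updated)


# Hypothetical resource as previously read from /users/some-id.
current = {
    "_id": "some-id",
    "_rev": "0001",
    "displayName": "Old Name",
    "mail": "user@example.com",
}
headers, body = prepare_update(current, {"displayName": "New Name"})
print(headers["If-Match"])
print(body)
```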

You can use the following parameters:

_prettyPrint=true

Format the body of the response.

_fields=field[,field...]

Return only the specified fields in the body of the response.

The field values are JSON pointers. For example if the resource is {"parent":{"child":"value"}}, parent/child refers to the "child":"value".

If the field is left blank, the server returns all default values.

3.1.9. Delete

To delete a single resource, perform an HTTP DELETE

by its case-sensitive identifier (_id)

and accept a JSON response:

DELETE /users/some-id HTTP/1.1
Host: example.com
Accept: application/json

You can use the following parameters:

_prettyPrint=true

Format the body of the response.

_fields=field[,field...]

Return only the specified fields in the body of the response.

The field values are JSON pointers. For example if the resource is {"parent":{"child":"value"}}, parent/child refers to the "child":"value".

If the field is left blank, the server returns all default values.

3.1.10. Patch

To patch a resource, send an HTTP PATCH request with the following parameters:

operation

field

value

from (optional with copy and move operations)

You can include these parameters in the payload for a PATCH request, or in a JSON PATCH file. The request takes the following form, and returns a JSON response if successful:

PATCH /users/some-id HTTP/1.1

Host: example.com

Accept: application/json

Content-Length: ...

Content-Type: application/json

If-Match: _rev

{ JSON array of patch operations }

PATCH operations apply to three types of targets:

single-valued, such as an object, string, boolean, or number.

list semantics array, where the elements are ordered, and duplicates are allowed.

set semantics array, where the elements are not ordered, and duplicates are not allowed.

ForgeRock PATCH supports several different operations.

The following sections show each of these operations, along with options

for the field and value:

3.1.10.1. Patch Operation: Add

The add operation ensures that the target field contains

the value provided, creating parent fields as necessary.

If the target field is single-valued, then the value you include in the PATCH replaces the value of the target. Examples of a single-valued field include: object, string, boolean, or number.

An add operation has different results on two standard

types of arrays:

List semantic arrays: you can run any of these add operations on that type of array:

If you add an array of values, the PATCH operation appends it to the existing list of values.

If you add a single value, specify an ordinal element in the target array, or use the {-} special index to add that value to the end of the list.

Set semantic arrays: The list of values included in a patch are merged with the existing set of values. Any duplicates within the array are removed.
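The difference between list and set semantics can be modeled locally. The following sketch is not the server implementation. The hypothetical function patch_add only imitates appending to a list semantic array and merging into a set semantic array, and it leaves out the case of adding at a specific ordinal position.

```python
def patch_add(target, value, list_semantics=True):
    """Return the array that an add operation would leave in the target field."""
    values = value if isinstance(value, list) else [value]
    if list_semantics:
        # List semantics: append, keeping order and allowing duplicates.
        return list(target) + values
    # Set semantics: merge, skipping values that are already present.
    merged = list(target)
    for item in values:
        if item not in merged:
            merged.append(item)
    return merged


print(patch_add(["orange", "apple"], "pineapple"))
print(patch_add(["orange", "apple"], ["apple", "kiwi"], list_semantics=False))
```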

As an example, start with the following list semantic array resource:

{

"fruits" : [ "orange", "apple" ]

}

The following add operation appends pineapple to the end of the list of fruits, as indicated by the - at the end of the field path, /fruits/-.

{

"operation" : "add",

"field" : "/fruits/-",

"value" : "pineapple"

}

The following is the resulting resource:

{

"fruits" : [ "orange", "apple", "pineapple" ]

}

3.1.10.2. Patch Operation: Copy

The copy operation takes one or more existing values from the source field.

It then adds those same values on the target field. Once the values are

known, it is equivalent to performing an add operation

on the target field.

The following copy operation takes the value from a field

named mail, and then runs a replace

operation on the target field, another_mail.

[

{

"operation":"copy",

"from":"mail",

"field":"another_mail"

}

]

If the source field value and the target field value are configured as arrays, the result depends on whether the array has list semantics or set semantics, as described in "Patch Operation: Add".

3.1.10.3. Patch Operation: Increment

The increment operation changes the value or values of

the target field by the amount you specify. The value that you include

must be one number, and may be positive or negative. The value of the

target field must accept numbers. The following increment

operation adds 1000 to the target value of

/user/payment.

[

{

"operation" : "increment",

"field" : "/user/payment",

"value" : "1000"

}

]

Since the value of the increment is

a single number, arrays do not apply.

3.1.10.4. Patch Operation: Move

The move operation removes existing values on the source field. It

then adds those same values on the target field. It is equivalent to

performing a remove operation on the source, followed

by an add operation with the same values, on the target.

The following move operation is equivalent to a

remove operation on the source field,

surname, followed by a replace

operation on the target field value, lastName. If the

target field does not exist, it is created.

[

{

"operation":"move",

"from":"surname",

"field":"lastName"

}

]

To apply a move operation on an array, you need a

compatible single-value, list semantic array, or set semantic array on

both the source and the target. For details, see the criteria described

in "Patch Operation: Add".
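For single-valued, top-level fields, the relationship between copy, move, remove, and add can be sketched as follows. The helpers patch_copy and patch_move are hypothetical models of the behavior described above, not server code, and they do not handle nested fields or arrays.

```python
import copy


def patch_copy(resource, source, target):
    """Model copy: add the value of the source field to the target field."""
    result = copy.deepcopy(resource)
    result[target] = copy.deepcopy(result[source])
    return result


def patch_move(resource, source, target):
    """Model move: remove the source field, then add its value to the target."""
    result = copy.deepcopy(resource)
    result[target] = result.pop(source)
    return result


user = {"surname": "Jensen", "mail": "bjensen@example.com"}
print(patch_move(user, "surname", "lastName"))
print(patch_copy(user, "mail", "another_mail"))
```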

3.1.10.5. Patch Operation: Remove

The remove operation ensures that the target field no

longer contains the value provided. If the remove operation does not include

a value, the operation removes the field. The following

remove deletes the value of the

phoneNumber, along with the field.

[

{

"operation" : "remove",

"field" : "phoneNumber"

}

]

If the object has more than one phoneNumber, those

values are stored as an array.

A remove operation has different results on two standard

types of arrays:

List semantic arrays: A remove operation deletes the specified element in the array. For example, the following operation removes the first phone number, based on its array index (zero-based):

[
{
"operation" : "remove",
"field" : "/phoneNumber/0"
}
]

Set semantic arrays: The list of values included in a patch are removed from the existing array.

3.1.10.6. Patch Operation: Replace

The replace operation removes any existing value(s) of the targeted field, and replaces them with the provided value(s). It is essentially equivalent to a remove followed by an add operation. If arrays are used, the criteria are based on "Patch Operation: Add". However, indexed updates are not allowed, even when the target is an array.

The following replace operation removes the existing

telephoneNumber value for the user, and then adds the

new value of +1 408 555 9999.

[

{

"operation" : "replace",

"field" : "/telephoneNumber",

"value" : "+1 408 555 9999"

}

]

A PATCH replace operation on a list semantic array works in the same fashion as a PATCH remove operation. The following example demonstrates the effect of both operations. Start with the following resource:

{
"fruits" : [ "apple", "orange", "kiwi", "lime" ]
}

Apply the following operations on that resource:

[

{

"operation" : "remove",

"field" : "/fruits/0",

"value" : ""

},

{

"operation" : "replace",

"field" : "/fruits/1",

"value" : "pineapple"

}

]

The PATCH operations are applied sequentially. The remove

operation removes the first member of that resource, based on its array

index, (fruits/0), with the following result:

{
"fruits" : [ "orange", "kiwi", "lime" ]
}

The second PATCH operation, a replace, is applied on the

second member (fruits/1) of the intermediate resource,

with the following result:

{
"fruits" : [ "orange", "pineapple", "lime" ]
}

3.1.10.7. Patch Operation: Transform

The transform operation changes the value of a field

based on a script or some other data transformation command. The following

transform operation takes the value from the field

named /objects, and applies the

something.js script as shown:

[

{

"operation" : "transform",

"field" : "/objects",

"value" : {

"script" : {

"type" : "text/javascript",

"file" : "something.js"

}

}

}

]

3.1.10.8. Patch Operation Limitations

Some HTTP client libraries do not support the HTTP PATCH operation. Make sure that the library you use supports HTTP PATCH before using this REST operation.

For example, the Java Development Kit HttpURLConnection client does not support PATCH as a valid HTTP method. Instead, the method HttpURLConnection.setRequestMethod("PATCH") throws ProtocolException.
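By contrast, some standard libraries accept arbitrary method names. As one illustration, Python's urllib.request.Request takes a method argument, so a PATCH request can be built as in the following sketch. The function build_patch and the URL are hypothetical, and the request is built but not sent.

```python
import json
from urllib.request import Request


def build_patch(url, operations, revision="*"):
    """Build an HTTP PATCH request carrying a JSON array of patch operations."""
    return Request(
        url,
        data=json.dumps(operations).encode("utf-8"),
        headers={
            "Accept": "application/json",
            "Content-Type": "application/json",
            "If-Match": revision,  # "*" applies the patch regardless of revision
        },
        method="PATCH",
    )


request = build_patch(
    "http://example.com/users/some-id",
    [{"operation": "replace", "field": "/telephoneNumber", "value": "+1 408 555 9999"}],
)
print(request.get_method(), request.full_url)
```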

You can use the following parameters. Other parameters might depend on the specific action implementation:

_prettyPrint=true

Format the body of the response.

_fields=field[,field...]

Return only the specified fields in the body of the response.

The field values are JSON pointers. For example if the resource is {"parent":{"child":"value"}}, parent/child refers to the "child":"value".

If the field is left blank, the server returns all default values.

3.1.11. Action

Actions are a means of extending Common REST APIs and are defined by the resource provider, so the actions you can use depend on the implementation.

The standard action indicated by _action=create

is described in "Create".

You can use the following parameters. Other parameters might depend on the specific action implementation:

_prettyPrint=true

Format the body of the response.

_fields=field[,field...]

Return only the specified fields in the body of the response.

The field values are JSON pointers. For example if the resource is {"parent":{"child":"value"}}, parent/child refers to the "child":"value".

If the field is left blank, the server returns all default values.

3.1.12. Query

To query a resource collection

(or resource container if you prefer to think of it that way),

perform an HTTP GET and accept a JSON response, including at least

a _queryExpression,

_queryFilter, or _queryId parameter.

These parameters cannot be used together:

GET /users?_queryFilter=true HTTP/1.1
Host: example.com
Accept: application/json

The server returns the result as a JSON object including a "results" array and other fields related to the query string parameters that you specify.

You can use the following parameters:

_queryFilter=filter-expression

Query filters request that the server return entries that match the filter expression. You must URL-escape the filter expression.

The string representation is summarized as follows. Continue reading for additional explanation:

Expr           = OrExpr
OrExpr         = AndExpr ( 'or' AndExpr ) *
AndExpr        = NotExpr ( 'and' NotExpr ) *
NotExpr        = '!' PrimaryExpr | PrimaryExpr
PrimaryExpr    = '(' Expr ')' | ComparisonExpr | PresenceExpr | LiteralExpr
ComparisonExpr = Pointer OpName JsonValue
PresenceExpr   = Pointer 'pr'
LiteralExpr    = 'true' | 'false'
Pointer        = JSON pointer
OpName         = 'eq' |  # equal to
                 'co' |  # contains
                 'sw' |  # starts with
                 'lt' |  # less than
                 'le' |  # less than or equal to
                 'gt' |  # greater than
                 'ge' |  # greater than or equal to
                 STRING  # extended operator
JsonValue      = NUMBER | BOOLEAN | '"' UTF8STRING '"'
STRING         = ASCII string not containing white-space
UTF8STRING     = UTF-8 string possibly containing white-space

JsonValue components of filter expressions follow RFC 7159: The JavaScript Object Notation (JSON) Data Interchange Format. In particular, as described in section 7 of the RFC, the escape character in strings is the backslash character. For example, to match the identifier test\, use _id eq 'test\\'. In the JSON resource, the \ is escaped the same way: "_id":"test\\".

When using a query filter in a URL, be aware that the filter expression is part of a query string parameter. A query string parameter must be URL encoded as described in RFC 3986: Uniform Resource Identifier (URI): Generic Syntax. For example, white space, double quotes ("), parentheses, and exclamation characters need URL encoding in HTTP query strings. The following rules apply to URL query components:

query       = *( pchar / "/" / "?" )
pchar       = unreserved / pct-encoded / sub-delims / ":" / "@"
unreserved  = ALPHA / DIGIT / "-" / "." / "_" / "~"
pct-encoded = "%" HEXDIG HEXDIG
sub-delims  = "!" / "$" / "&" / "'" / "(" / ")" / "*" / "+" / "," / ";" / "="

ALPHA, DIGIT, and HEXDIG are core rules of RFC 5234: Augmented BNF for Syntax Specifications:

ALPHA  = %x41-5A / %x61-7A   ; A-Z / a-z
DIGIT  = %x30-39             ; 0-9
HEXDIG = DIGIT / "A" / "B" / "C" / "D" / "E" / "F"

As a result, a backslash escape character in a JsonValue component is percent-encoded in the URL query string parameter as %5C. To encode the query filter expression _id eq 'test\\', use _id+eq+'test%5C%5C', for example.

A simple filter expression can represent a comparison, presence, or a literal value.

For comparison expressions use json-pointer comparator json-value, where the comparator is one of the following:

eq (equals)

co (contains)

sw (starts with)

lt (less than)

le (less than or equal to)

gt (greater than)

ge (greater than or equal to)

For presence, use json-pointer pr to match resources where:

The JSON pointer is present.

The value it points to is not null.

Literal values include true (match anything) and false (match nothing).

Complex expressions employ and, or, and ! (not), with parentheses, (expression), to group expressions.

_queryId=identifier

Specify a query by its identifier.

Specific queries can take their own query string parameter arguments, which depend on the implementation.

_pagedResultsCookie=string

The string is an opaque cookie used by the server to keep track of the position in the search results. The server returns the cookie in the JSON response as the value of pagedResultsCookie.

In the request _pageSize must also be set and non-zero. You receive the cookie value from the provider on the first request, and then supply the cookie value in subsequent requests until the server returns a null cookie, meaning that the final page of results has been returned.

The _pagedResultsCookie parameter is supported when used with the _queryFilter parameter. The _pagedResultsCookie parameter is not guaranteed to work when used with the _queryExpression and _queryId parameters.

The _pagedResultsCookie and _pagedResultsOffset parameters are mutually exclusive, and not to be used together.

_pagedResultsOffset=integer

When _pageSize is non-zero, use this as an index in the result set indicating the first page to return.

The _pagedResultsCookie and _pagedResultsOffset parameters are mutually exclusive, and not to be used together.

_pageSize=integer

Return query results in pages of this size. After the initial request, use _pagedResultsCookie or _pagedResultsOffset to page through the results.

_totalPagedResultsPolicy=string

When a _pageSize is specified, and non-zero, the server calculates the "totalPagedResults", in accordance with the totalPagedResultsPolicy, and provides the value as part of the response. The "totalPagedResults" is either an estimate of the total number of paged results (_totalPagedResultsPolicy=ESTIMATE), or the exact total result count (_totalPagedResultsPolicy=EXACT). If no count policy is specified in the query, or if _totalPagedResultsPolicy=NONE, result counting is disabled, and the server returns a value of -1 for "totalPagedResults".

_sortKeys=[+-]field[,[+-]field...]

Sort the resources returned based on the specified field(s), either in + (ascending, default) order, or in - (descending) order.

Because ascending order is the default, including the + character in the query is unnecessary. If you do include the +, it must be URL-encoded as %2B, for example:

http://localhost:8080/api/users?_prettyPrint=true&_queryFilter=true&_sortKeys=%2Bname/givenName

The _sortKeys parameter is not supported for predefined queries (_queryId).

_prettyPrint=true

Format the body of the response.

_fields=field[,field...]

Return only the specified fields in each element of the "results" array in the response.

The field values are JSON pointers. For example if the resource is {"parent":{"child":"value"}}, parent/child refers to the "child":"value".

If the field is left blank, the server returns all default values.
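The encoding and paging rules above can be combined in a short client-side sketch. Several names here are assumptions made for illustration: query_url and iter_results are hypothetical helpers, fetch stands for any function you supply that performs the HTTP GET and returns the decoded JSON object, and the name of the array in the response is a parameter so that you can match it to what your server returns.

```python
from urllib.parse import quote_plus


def query_url(base, query_filter, page_size=None, cookie=None):
    """Build a query URL with a URL-encoded filter and optional paging parameters."""
    # Spaces become '+', and backslashes become %5C. Apostrophes are left as is.
    url = f"{base}?_queryFilter={quote_plus(query_filter, safe=chr(39))}"
    if page_size:
        url += f"&_pageSize={page_size}"
    if cookie:
        url += f"&_pagedResultsCookie={quote_plus(cookie)}"
    return url


def iter_results(fetch, base, query_filter, page_size, results_key="result"):
    """Yield each resource, requesting pages until the server returns a null cookie.

    Assumption: results_key names the array of resources in the response.
    """
    cookie = None
    while True:
        page = fetch(query_url(base, query_filter, page_size, cookie))
        yield from page[results_key]
        cookie = page.get("pagedResultsCookie")
        if cookie is None:
            return


# The filter below is _id eq 'test\\' once Python has processed the string literal.
print(query_url("/users", "_id eq 'test\\\\'"))
```

Injecting fetch keeps the paging logic independent of any particular HTTP library, and makes it possible to exercise the loop with canned pages.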

3.1.13. HTTP Status Codes

When working with a Common REST API over HTTP, client applications should expect at least the following HTTP status codes. Not all servers necessarily return all status codes identified here:

- 200 OK

The request was successful and a resource returned, depending on the request.

- 201 Created

The request succeeded and the resource was created.

- 204 No Content

The action request succeeded, and there was no content to return.

- 304 Not Modified

The read request included an If-None-Match header, and the value of the header matched the revision value of the resource.

- 400 Bad Request

The request was malformed.

- 401 Unauthorized

The request requires user authentication.

- 403 Forbidden

Access was forbidden during an operation on a resource.

- 404 Not Found

The specified resource could not be found, perhaps because it does not exist.

- 405 Method Not Allowed

The HTTP method is not allowed for the requested resource.

- 406 Not Acceptable

The request contains parameters that are not acceptable, such as a resource or protocol version that is not available.

- 409 Conflict

The request would have resulted in a conflict with the current state of the resource.

- 410 Gone

The requested resource is no longer available, and will not become available again. This can happen when resources expire for example.

- 412 Precondition Failed

The resource's current version does not match the version provided.

- 415 Unsupported Media Type

The request is in a format not supported by the requested resource for the requested method.

- 428 Precondition Required

The resource requires a version, but no version was supplied in the request.

- 500 Internal Server Error

The server encountered an unexpected condition that prevented it from fulfilling the request.

- 501 Not Implemented

The resource does not support the functionality required to fulfill the request.

- 503 Service Unavailable

The requested resource was temporarily unavailable. The service may have been disabled, for example.
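A client typically groups these status codes by how it intends to react. The following sketch shows one possible policy, not a prescribed one. The function next_step and the wording of each suggestion are hypothetical.

```python
def next_step(status):
    """Suggest what a client might do after receiving an HTTP status code."""
    if status in (200, 201, 204):
        return "done"
    if status == 304:
        return "use the copy already held"
    if status == 401:
        return "authenticate and retry"
    if status in (412, 428):
        # The revision was wrong or missing: read again, then resend with If-Match.
        return "re-read the resource and retry with its current revision"
    if status == 503:
        return "retry later"
    if 400 <= status < 500:
        return "fix the request"
    if 500 <= status < 600:
        return "report a server error"
    return "unexpected status"


for code in (201, 412, 404, 503):
    print(code, next_step(code))
```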

3.2. Selecting an API Version

DS REST APIs can be versioned. If there is more than one version of the API, then you must select the version by setting a version header that specifies which version of the resource is requested:

Accept-API-Version: resource=version

Here, version is the value of

the version field

in the mapping configuration file for the API.

For details, see "Mapping Configuration File" in the Reference.

If you do not set a version header, then the latest version is returned.

The default example configuration includes only one API,

whose version is 1.0.

In this case, the header can be omitted.

If used in the examples below, the appropriate header would be

Accept-API-Version: resource=1.0.

3.3. Authenticating Over REST

When you first try to read a resource that can be read as an LDAP entry with an anonymous search, you learn that you must authenticate as shown in the following example:

$ curl http://opendj.example.com:8080/api/users/bjensen

{"code":401,"reason":"Unauthorized","message":"Invalid Credentials"}

HTTP status code 401 indicates that the request requires user authentication.

To prevent DS servers from requiring authentication,

set the Rest2ldap endpoint authorization-mechanism

to map anonymous HTTP requests to LDAP requests performed by an authorized user,

as in the following example that uses Kirsten Vaughan's identity:

$ dsconfig \
 set-http-authorization-mechanism-prop \
 --hostname opendj.example.com \
 --port 4444 \
 --bindDN "cn=Directory Manager" \
 --bindPassword password \
 --mechanism-name "HTTP Anonymous" \
 --set enabled:true \
 --set user-dn:uid=kvaughan,ou=people,dc=example,dc=com \
 --trustAll \
 --no-prompt

$ dsconfig \
 set-http-endpoint-prop \
 --hostname opendj.example.com \
 --port 4444 \
 --bindDN "cn=Directory Manager" \
 --bindPassword password \
 --endpoint-name "/api" \
 --set authorization-mechanism:"HTTP Anonymous" \
 --trustAll \
 --no-prompt

By default, both the Rest2ldap endpoint and also the REST to LDAP gateway allow HTTP Basic authentication and HTTP header-based authentication in the style of OpenIDM software. The authentication mechanisms translate HTTP authentication to LDAP authentication to the directory server.

When you set up a directory server either with generated sample user entries

or with data from

Example.ldif,

the relative distinguished name (DN) attribute for sample user entries

is the user ID (uid) attribute.

For example, the DN and user ID for Babs Jensen are:

dn: uid=bjensen,ou=People,dc=example,dc=com
uid: bjensen

Given this pattern in the user entries,

the default REST to LDAP configuration translates

the HTTP user name to the LDAP user ID.

User entries are found directly under

ou=People,dc=example,dc=com.

(In general, REST to LDAP mappings require

that LDAP entries mapped to JSON resources

be immediate subordinates of the mapping's baseDN.)

In other words, Babs Jensen authenticates as bjensen

(password: hifalutin) over HTTP.

The corresponding LDAP bind DN is

uid=bjensen,ou=People,dc=example,dc=com.

HTTP Basic authentication works as shown in the following example:

$ curl \

--user bjensen:hifalutin \

http://opendj.example.com:8080/api/users/bjensen?_fields=userName

{"_id":"bjensen","_rev":"<revision>","userName":"bjensen@example.com"}

The alternative HTTP Basic username:password@ form in the URL works as shown in the following example:

$ curl \

http://bjensen:hifalutin@opendj.example.com:8080/api/users/bjensen?_fields=userName

{"_id":"bjensen","_rev":"<revision>","userName":"bjensen@example.com"}

HTTP header based authentication works as shown in the following example:

$ curl \

--header "X-OpenIDM-Username: bjensen" \

--header "X-OpenIDM-Password: hifalutin" \

http://opendj.example.com:8080/api/users/bjensen?_fields=userName

{"_id":"bjensen","_rev":"<revision>","userName":"bjensen@example.com"}
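When you write a client rather than use curl, you set the same headers yourself. The following sketch builds the headers for both styles of authentication shown above. The function names are hypothetical. HTTP Basic authentication encodes username:password in Base64, and the header-based style sends the two values in the X-OpenIDM-Username and X-OpenIDM-Password headers.

```python
import base64


def basic_auth_headers(username, password):
    """Return the Authorization header for HTTP Basic authentication."""
    credentials = f"{username}:{password}".encode("utf-8")
    token = base64.b64encode(credentials).decode("ascii")
    return {"Authorization": f"Basic {token}"}


def openidm_auth_headers(username, password):
    """Return the headers for header-based authentication."""
    return {"X-OpenIDM-Username": username, "X-OpenIDM-Password": password}


print(basic_auth_headers("bjensen", "hifalutin"))
print(openidm_auth_headers("bjensen", "hifalutin"))
```

Neither style protects the password in transit, so prefer HTTPS outside of test environments.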

If the directory data is laid out differently or if the user names are email addresses rather than user IDs, for example, then you must update the configuration in order for authentication to work.

The REST to LDAP gateway can also translate HTTP user name and password authentication to LDAP PLAIN SASL authentication. Likewise, the gateway falls back to proxied authorization as necessary, using connections to LDAP servers on which the directory superuser has authenticated. See "REST to LDAP Configuration" in the Reference for details on all configuration choices.

3.4. Creating Resources

There are two alternative ways to create resources:

To create a resource using an ID that you specify, perform an HTTP PUT request with headers Content-Type: application/json and If-None-Match: *, and the JSON content of your resource.

The following example shows you how to create a new user entry with ID newuser:

$ curl \
 --request PUT \
 --user kvaughan:bribery \
 --header "Content-Type: application/json" \
 --header "If-None-Match: *" \
 --data '{
  "_id": "newuser",
  "_schema":"frapi:opendj:rest2ldap:user:1.0",
  "contactInformation": {
    "telephoneNumber": "+1 408 555 1212",
    "emailAddress": "newuser@example.com"
  },
  "name": {
    "familyName": "New",
    "givenName": "User"
  },
  "displayName": ["New User"],
  "manager": {
    "_id": "kvaughan",
    "displayName": "Kirsten Vaughan"
  }
 }' \
 http://opendj.example.com:8080/api/users/newuser?_prettyPrint=true
{
  "_id" : "newuser",
  "_rev" : "<revision>",
  "_schema" : "frapi:opendj:rest2ldap:user:1.0",
  "_meta" : {
    "created" : "<datestamp>"
  },
  "userName" : "newuser@example.com",
  "displayName" : [ "New User" ],
  "name" : {
    "givenName" : "User",
    "familyName" : "New"
  },
  "contactInformation" : {
    "telephoneNumber" : "+1 408 555 1212",
    "emailAddress" : "newuser@example.com"
  },
  "manager" : {
    "_id" : "kvaughan",
    "displayName" : "Kirsten Vaughan"
  }
}

To create a resource and let the server choose the ID, perform an HTTP POST with _action=create as described in "Using Actions".
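The PUT-based creation can also be scripted. The following Python sketch only builds the request, without sending it, to show how the headers and the JSON body fit together. The function name build_create_request is hypothetical, and the URL, credentials, and resource are the sample values from the curl example.

```python
import base64
import json
import urllib.request


def build_create_request(collection_url, resource_id, resource, user, password):
    """Build, but do not send, a PUT that creates a resource with a client-chosen ID."""
    credentials = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        url=f"{collection_url}/{resource_id}",
        data=json.dumps(resource).encode("utf-8"),
        method="PUT",
        headers={
            # HTTP Basic authentication, equivalent to curl --user.
            "Authorization": f"Basic {credentials}",
            "Content-Type": "application/json",
            # In HTTP, If-None-Match: * means "only if no such resource exists yet".
            "If-None-Match": "*",
        },
    )


new_user = {
    "_id": "newuser",
    "_schema": "frapi:opendj:rest2ldap:user:1.0",
    "contactInformation": {
        "telephoneNumber": "+1 408 555 1212",
        "emailAddress": "newuser@example.com",
    },
    "name": {"familyName": "New", "givenName": "User"},
    "displayName": ["New User"],
    "manager": {"_id": "kvaughan", "displayName": "Kirsten Vaughan"},
}

request = build_create_request(
    "http://opendj.example.com:8080/api/users", "newuser", new_user, "kvaughan", "bribery"
)
# Sending is left out of this sketch; urllib.request.urlopen(request) would perform the call.
```

Keeping the request construction separate from the network call makes it possible to inspect the method, headers, and body before anything reaches the server.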

3.5. Reading a Resource

To read a resource, perform an HTTP GET as shown in the following example:

$ curl \

--request GET \

--user kvaughan:bribery \

http://opendj.example.com:8080/api/users/newuser?_prettyPrint=true

{

"_id" : "newuser",

"_rev" : "<revision>",

"_schema" : "frapi:opendj:rest2ldap:user:1.0",

"_meta" : {

"created" : "<datestamp>"

},

"userName" : "newuser@example.com",

"displayName" : [ "New User" ],

"name" : {

"givenName" : "User",

"familyName" : "New"

},

"contactInformation" : {

"telephoneNumber" : "+1 408 555 1212",

"emailAddress" : "newuser@example.com"

},

"manager" : {

"_id" : "kvaughan",

"displayName" : "Kirsten Vaughan"

}

}
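When reads are scripted, it can help to build the resource URL in one place. The following Python sketch assembles the URL with the _prettyPrint and _fields query parameters used in this chapter. The function name resource_url and its parameters are hypothetical, and passing several comma-separated field names to _fields is an assumption based on the Common REST conventions.

```python
from urllib.parse import quote, urlencode


def resource_url(collection_url, resource_id, fields=None, pretty_print=False):
    """Return the URL for reading one resource, with optional query parameters."""
    # Percent-encode the ID so that IDs containing spaces or slashes stay in one path segment.
    url = f"{collection_url}/{quote(resource_id, safe='')}"
    query = {}
    if fields:
        # _fields limits the response to the named fields.
        query["_fields"] = ",".join(fields)
    if pretty_print:
        query["_prettyPrint"] = "true"
    if query:
        # safe="," keeps the field separators readable instead of encoding them as %2C.
        url += "?" + urlencode(query, safe=",")
    return url


read_url = resource_url(
    "http://opendj.example.com:8080/api/users", "newuser", pretty_print=True
)
rev_url = resource_url(
    "http://opendj.example.com:8080/api/users", "scarter", fields=["_rev"]
)
```

Here read_url matches the URL in the curl example, and rev_url requests only the revision of Sam Carter's resource.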

3.6. Updating Resources

To update a resource, perform an HTTP PUT with the updated version of the resource. Use an If-Match header to ensure the resource already exists. For read-only fields, either include unmodified versions, or omit them from your updated version.

To update a resource regardless of the revision, use an If-Match: * header. The following example replaces Sam Carter's entry with a version that has an additional display name:

$ curl \

--request PUT \

--user kvaughan:bribery \

--header "Content-Type: application/json" \

--header "If-Match: *" \

--data '{

"contactInformation": {

"telephoneNumber": "+1 408 555 4798",

"emailAddress": "scarter@example.com"

},

"name": {

"familyName": "Carter",

"givenName": "Sam"

},

"userName": "scarter@example.com",

"displayName": ["Sam Carter", "Samantha Carter"],

"groups": [

{

"_id": "Accounting Managers"

}

],

"manager": {

"_id": "trigden",

"displayName": "Torrey Rigden"

},

"uidNumber": 1002,

"gidNumber": 1000,

"homeDirectory": "/home/scarter"

}' \

http://opendj.example.com:8080/api/users/scarter?_prettyPrint=true

{

"_id" : "scarter",

"_rev" : "<revision>",

"_schema" : "frapi:opendj:rest2ldap:posixUser:1.0",

"_meta" : {

"lastModified" : "<datestamp>"

},

"userName" : "scarter@example.com",

"displayName" : [ "Sam Carter", "Samantha Carter" ],

"name" : {

"givenName" : "Sam",

"familyName" : "Carter"

},

"contactInformation" : {

"telephoneNumber" : "+1 408 555 4798",

"emailAddress" : "scarter@example.com"

},

"uidNumber" : 1002,

"gidNumber" : 1000,

"homeDirectory" : "/home/scarter",

"groups" : [ {

"_id" : "Accounting Managers"

} ],

"manager" : {

"_id" : "trigden",

"displayName" : "Torrey Rigden"

}

}

To update a resource only if the resource matches a particular version, use an If-Match: revision header as shown in the following example:

$ export REVISION=$(cut -d \" -f 8 <(curl --silent \

--user kvaughan:bribery \

http://opendj.example.com:8080/api/users/scarter?_fields=_rev))

$ curl \

--request PUT \

--user kvaughan:bribery \

--header "If-Match: $REVISION" \

--header "Content-Type: application/json" \

--data '{

"_id" : "scarter",

"_schema" : "frapi:opendj:rest2ldap:posixUser:1.0",

"contactInformation": {

"telephoneNumber": "+1 408 555 4798",

"emailAddress": "scarter@example.com"

},

"name": {

"familyName": "Carter",

"givenName": "Sam"

},

"userName": "scarter@example.com",

"displayName": ["Sam Carter", "Samantha Carter"],

"uidNumber": 1002,

"gidNumber": 1000,

"homeDirectory": "/home/scarter"

}' \

http://opendj.example.com:8080/api/users/scarter?_prettyPrint=true

{

"_id" : "scarter",

"_rev" : "<new-revision>",

"_schema" : "frapi:opendj:rest2ldap:posixUser:1.0",

"_meta" : {

"lastModified" : "<datestamp>"

},

"userName" : "scarter@example.com",

"displayName" : [ "Sam Carter", "Samantha Carter" ],

"name" : {

"givenName" : "Sam",

"familyName" : "Carter"

},

"contactInformation" : {

"telephoneNumber" : "+1 408 555 4798",

"emailAddress" : "scarter@example.com"

},

"uidNumber" : 1002,

"gidNumber" : 1000,

"homeDirectory" : "/home/scarter",

"groups" : [ {

"_id" : "Accounting Managers"

} ]

}
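The revision-based update can be sketched in Python as well. Parsing the response as JSON avoids relying on the position of _rev in the output, which the cut command in the shell example assumes. This sketch only builds the request and does not send it. The function name build_update_request and the SERVER_MANAGED_FIELDS tuple are hypothetical. Treating _rev and _meta as fields to omit from the request body is an assumption that mirrors the example bodies in this section.

```python
import json
import urllib.request

# Assumption: these server-managed fields can be left out of the update body,
# as the example request bodies in this section do.
SERVER_MANAGED_FIELDS = ("_rev", "_meta")


def build_update_request(resource_url, current, changes, match_any_revision=False):
    """Build, but do not send, a PUT that replaces a resource read earlier."""
    updated = {k: v for k, v in current.items() if k not in SERVER_MANAGED_FIELDS}
    updated.update(changes)
    # "*" updates regardless of the revision; otherwise require the revision that was read.
    if_match = "*" if match_any_revision else current["_rev"]
    return urllib.request.Request(
        url=resource_url,
        data=json.dumps(updated).encode("utf-8"),
        method="PUT",
        headers={"Content-Type": "application/json", "If-Match": if_match},
    )


# A resource as an earlier GET might have returned it, abbreviated for this sketch.
# Because PUT replaces the resource, real code would start from the full resource.
current = json.loads(
    '{"_id": "scarter", "_rev": "<revision>", "displayName": ["Sam Carter"]}'
)
request = build_update_request(
    "http://opendj.example.com:8080/api/users/scarter",
    current,
    {"displayName": ["Sam Carter", "Samantha Carter"]},
)
```

The request carries the revision that was read in its If-Match header, so the server can reject the update if the resource has changed since then.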

3.7. Deleting Resources

To delete a resource, perform an HTTP DELETE on the resource URL. The operation returns the resource you deleted as shown in the following example:

$ curl \

--request DELETE \

--user kvaughan:bribery \

http://opendj.example.com:8080/api/users/newuser?_prettyPrint=true

{

"_id" : "newuser",

"_rev" : "<revision>",

"_schema" : "frapi:opendj:rest2ldap:user:1.0",

"_meta" : {

"created" : "<datestamp>"

},

"userName" : "newuser@example.com",

"displayName" : [ "New User" ],

"name" : {

"givenName" : "User",

"familyName" : "New"

},

"contactInformation" : {

"telephoneNumber" : "+1 408 555 1212",

"emailAddress" : "newuser@example.com"

},

"manager" : {

"_id" : "kvaughan",

"displayName" : "Kirsten Vaughan"

}

}

To delete a resource only if the resource matches a particular version, use an If-Match: revision header as shown in the following example:

$ export REVISION=$(cut -d \" -f 8 <(curl --silent \

--user kvaughan:bribery \

http://opendj.example.com:8080/api/users/newuser?_fields=_rev))

$ curl \

--request DELETE \

--user kvaughan:bribery \

--header "If-Match: $REVISION" \

http://opendj.example.com:8080/api/users/newuser?_prettyPrint=true

{

"_id" : "newuser",

"_rev" : "<revision>",

"_schema" : "frapi:opendj:rest2ldap:user:1.0",

"_meta" : {

"created" : "<datestamp>"

},

"userName" : "newuser@example.com",

"displayName" : [ "New User" ],

"name" : {

"givenName" : "User",

"familyName" : "New"

},

"contactInformation" : {

"telephoneNumber" : "+1 408 555 1212",

"emailAddress" : "newuser@example.com"

},

"manager" : {

"_id" : "kvaughan",

"displayName" : "Kirsten Vaughan"

}

}

To delete a resource and all of its children, you must change the configuration, get the REST to LDAP gateway or Rest2ldap endpoint to reload its configuration, and perform the operation as a user who has the access rights required. The following steps show one way to do this with the Rest2ldap endpoint.

In this example, the LDAP view of the user to delete shows two child entries:

$ ldapsearch --port 1389 --baseDN uid=nbohr,ou=people,dc=example,dc=com "(&)" 1.1
dn: uid=nbohr,ou=People,dc=example,dc=com

dn: cn=quantum dot,uid=nbohr,ou=People,dc=example,dc=com

dn: cn=qubit generator,uid=nbohr,ou=People,dc=example,dc=com

If you are using the gateway, this requires the default setting of true for useSubtreeDelete in WEB-INF/classes/rest2ldap/rest2ldap.json.

Note

Only users who have access to request a tree delete can delete resources with children.

Force the Rest2ldap endpoint to reread its configuration as shown in the following dsconfig commands:

$ dsconfig \
 set-http-endpoint-prop \
 --hostname opendj.example.com \
 --port 4444 \
 --bindDN "cn=Directory Manager" \
 --bindPassword password \
 --endpoint-name "/api" \
 --set enabled:false \
 --trustAll \
 --no-prompt
$ dsconfig \
 set-http-endpoint-prop \
 --hostname opendj.example.com \
 --port 4444 \
 --bindDN "cn=Directory Manager" \
 --bindPassword password \
 --endpoint-name "/api" \
 --set enabled:true \
 --trustAll \
 --no-prompt

Allow the REST user to use the subtree delete control:

$ dsconfig \
 set-access-control-handler-prop \
 --hostname opendj.example.com \
 --port 4444 \
 --bindDN "cn=Directory Manager" \
 --bindPassword password \
 --add global-aci:"(targetcontrol=\"1.2.840.113556.1.4.805\")\
 (version 3.0; acl \"Allow Subtree Delete\"; allow(read) \
 userdn=\"ldap:///uid=kvaughan,ou=People,dc=example,dc=com\";)" \
 --trustAll \
 --no-prompt

Request the delete as a user who has rights to perform a subtree delete on the resource as shown in the following example:

$ curl \
 --request DELETE \
 --user kvaughan:bribery \
 http://opendj.example.com:8080/api/users/nbohr?_prettyPrint=true
{
  "_id" : "nbohr",
  "_rev" : "<revision>",
  "_schema" : "frapi:opendj:rest2ldap:posixUser:1.0",
  "_meta" : { },
  "userName" : "nbohr@example.com",
  "displayName" : [ "Niels Bohr" ],
  "name" : {
    "givenName" : "Niels",
    "familyName" : "Bohr"
  },
  "contactInformation" : {
    "telephoneNumber" : "+1 408 555 1212",
    "emailAddress" : "nbohr@example.com"
  },
  "uidNumber" : 1111,
  "gidNumber" : 1000,
  "homeDirectory" : "/home/nbohr"
}

3.8. Patching Resources

DS software lets you patch JSON resources, updating part of the resource rather than replacing it. The following example changes Babs Jensen's email address by issuing an HTTP PATCH request:

$ curl \

--user kvaughan:bribery \

--request PATCH \

--header "Content-Type: application/json" \

--data '[

{

"operation": "replace",

"field": "/contactInformation/emailAddress",

"value": "babs@example.com"

}

]' \

http://opendj.example.com:8080/api/users/bjensen?_prettyPrint=true

{

"_id" : "bjensen",

"_rev" : "<revision>",

"_schema" : "frapi:opendj:rest2ldap:posixUser:1.0",

"_meta" : {

"lastModified" : "<datestamp>"

},

"userName" : "babs@example.com",

"displayName" : [ "Barbara Jensen", "Babs Jensen" ],

"name" : {

"givenName" : "Barbara",

"familyName" : "Jensen"

},

"description" : "Original description",

"contactInformation" : {

"telephoneNumber" : "+1 408 555 1862",

"emailAddress" : "babs@example.com"

},

"uidNumber" : 1076,

"gidNumber" : 1000,

"homeDirectory" : "/home/bjensen",

"manager" : {

"_id" : "trigden",

"displayName" : "Torrey Rigden"

},

"groups" : [ {

"_id" : "Carpoolers"

} ]

}

Notice in the example that the data sent specifies the type of patch operation, the field to change, and a value that depends on the field you change and on the operation. A single-valued field takes an object, boolean, string, or number depending on its type, whereas a multi-valued field takes an array of values. Getting the type wrong results in an error. Also notice that the patch data is itself an array. This makes it possible to patch more than one part of the resource by using a set of patch operations in the same request.
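The shape of the patch data can be illustrated with a short Python sketch that builds a request body containing two operations. The helper names patch_operation and check_value_shape are hypothetical. The shape check is a client-side convenience that relies on the caller knowing whether a field is multi-valued; it is not something the server requires you to run.

```python
import json


def patch_operation(operation, field, value=None):
    """Return one patch operation with the operation, field, and value keys."""
    op = {"operation": operation, "field": field}
    if value is not None:
        op["value"] = value
    return op


def check_value_shape(value, multi_valued):
    """Raise TypeError when the value shape does not fit the kind of field."""
    is_array = isinstance(value, list)
    if multi_valued and not is_array:
        raise TypeError("a multi-valued field takes an array of values")
    if not multi_valued and is_array:
        raise TypeError("a single-valued field takes an object, boolean, string, or number")


email = "babs@example.com"
display_names = ["Barbara Jensen", "Babs Jensen"]
check_value_shape(email, multi_valued=False)
check_value_shape(display_names, multi_valued=True)

# The patch data is an array, so one request can carry several operations.
operations = [
    patch_operation("replace", "/contactInformation/emailAddress", email),
    patch_operation("replace", "/displayName", display_names),
]
payload = json.dumps(operations)
```

The resulting payload string is what would be sent as the body of the HTTP PATCH request, with a Content-Type: application/json header.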

DS software supports four types of patch operations:

add

The add operation ensures that the target field contains the value provided, creating parent fields as necessary.